Mediocratopia: 4

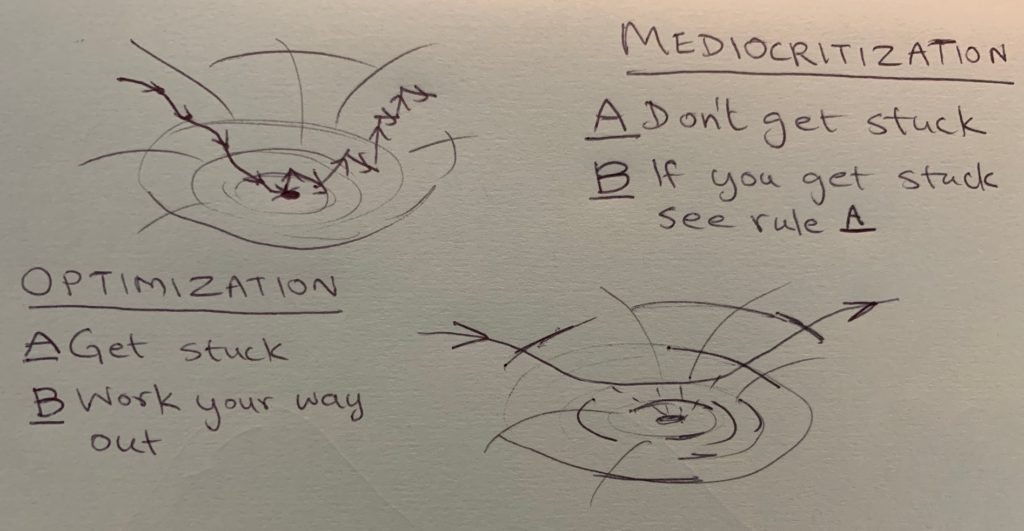

You've probably heard of optimization, that nihilistic process of descending into valleys or climbing hills till you get stuck, have an existential crisis, and then flail randomly to climb out (or down) again. Mediocritization is the opposite of that: never getting stuck in the first place. Here's a picture.

The cartoon on the left is optimization. The descent is a relatively orderly process ("gradient descent" takes you in the locally steepest downhill direction). The getting-out-again part is necessarily disorderly. You must inject randomness. The cartoon on the right is mediocritization: don't get stuck.

When people talk of "global" optimization, they usually mean that over a long period, you flail less wildly to get out of valleys because the chances that you've already found the deepest valley get higher as you explore more. This process goes by names like "annealing schedule".
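To make "flailing less wildly over time" concrete, here is a minimal sketch of one common way to set it up. The geometric cooling schedule, the Metropolis acceptance rule, and every number below are illustrative assumptions, not something the picture above specifies.

```python
import math

def temperature(step, t0=1.0, decay=0.95):
    """Geometric cooling: one common choice of annealing schedule (assumed here)."""
    return t0 * decay ** step

def accept_probability(delta, temp):
    """Metropolis rule: always accept improvements, sometimes accept worse moves."""
    if delta <= 0:
        return 1.0
    return math.exp(-delta / temp)

uphill = 0.5  # hypothetical cost increase of one "flailing" move out of a valley
for step in (0, 50, 100):
    t = temperature(step)
    # As the temperature falls, the same uphill move is accepted less often.
    print(step, t, accept_probability(uphill, t))
```

Early on, the uphill move is accepted more often than not; late in the schedule it is almost never accepted, which is the sense in which the search settles into whatever valley it happens to be in.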

Global or local, the thing about optimization is that it likes being stuck at the bottoms of valleys or the tops of hills, so long as it knows it is the deepest valley or highest hill. The thing about mediocritization is that it does not like either condition. Mediocritizers like to live on slopes rather than tops or bottoms. The reason is subtle: on a slope, there is always a way to tell directions apart. The environment is different in different directions. It is anisotropic. Mediocritization is an environmental-anisotropy-maintaining process (not a satisficing process as naive optimizers tend to assume).

Anisotropy is information in disguise. Optimizers get stuck at the bottoms of valleys or tops of hills because the world is locally flat there. No direction is any different from any other. There are no meaningful decisions to make relative to the external world because it is the same in all directions, or isotropic. This is why you need to inject randomness to break out (mathematically, the gradient goes to zero, so it can no longer serve as a directional discriminant).
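A small sketch of the vanishing gradient, assuming a toy bowl-shaped landscape f(x, y) = x² + y² (my choice for illustration; the starting point, step size, and function names are hypothetical too). On the slope the gradient has a clear size and direction; at the bottom it shrinks to essentially nothing, so it stops telling directions apart.

```python
import math

def grad(x, y):
    """Gradient of the toy bowl f(x, y) = x**2 + y**2 (an assumed landscape)."""
    return 2 * x, 2 * y

def descend(x, y, rate=0.1, steps=100):
    """Plain gradient descent; returns the final point and its gradient norm."""
    for _ in range(steps):
        gx, gy = grad(x, y)
        x, y = x - rate * gx, y - rate * gy
    return (x, y), math.hypot(*grad(x, y))

start = (3.0, -4.0)
slope_norm = math.hypot(*grad(*start))   # on the slope: a usable signal
bottom, bottom_norm = descend(*start)    # at the bottom: the signal is gone
print(slope_norm, bottom_norm)
```

The ratio between the two norms is a rough measure of how much directional information the landscape still offers at each point.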

Generalizing, in mediocritization, you always want to have a way available to continue the game that is better than random. This means you need some anisotropic pattern of information in the environment to act on.

Three examples of mediocritization:

- When Tiger Woods was king of the hill (a position he just regained after a long time), his closest competitors performed worse by about a stroke on average. Apparently, when Tiger is in good form, there's no point trying too hard. See this paper by Jennifer Brown.

- My buddy Jason Ho, who just had this entertaining profile written about him, is, on the surface, a caricature of an optimizer techbro. But look again: he trained hard and placed second in an amateur body-building competition, and then moved on to newer challenges rather than obsessing over getting to #1.

- When I was in grad school, and occasionally hit by mild panic at the thought of somebody scooping me on the research I was working on, I came up with a coping technique I called "+1". For any problem, I'd always take some time to identify and write down the next problem I would work on if somebody else scooped me on the current one. That way, I'd hit the ground running if I was scooped.

Carsean moral of the 3 stories: optimization is how you play to win finite games, but mediocritization is how you play to continue the game.

8 Comments

"Sliding down the surface of things" as Bret Easton Ellis would express it.

Tiger tried way too hard against Rocco Mediate in sudden death in his second most recent major win. That could be his whole problem, which he seems to have gotten past.

I optimized your image for more contrast.

https://imgur.com/gallery/YYTa5V8

Such an interesting contrast.

How would this work when taking into account variables such as time, situational factors, approach resilience, developmental Psychology?

Wouldn't you be able to end up with the conclusion that mediocritisation vs. optimisation is an interplay rather than a fixed modus operandi?

The more I think about this, the more I think there's something really off about it; systems can't leave local maxima because there's nothing better within their view, and systems that find global maxima cannot leave those either, because there is nothing better at all.

It's only when you change the reward function that the normal questions of over-optimisation occur.

And in that context, anisotropy of the reward function would be there whenever it was needed; the moment there is a job to do, and as the reward function shifts the system would keep getting anisotropy back, unless you're already at the new optimum anyway. So to make that distinction meaningful you'd need to keep trying to do optimisation-ish things on the same function.

So in that sense, if we compare these algorithms on an unchanging reward landscape, where both are designed to run for ever, while the optimisation process solves the jigsaw once, then scrambles it and begins again, the mediocrization process just veers away from the correct jigsaw and just starts trying to fit land pieces into the sky again.

If that was true, then the summary of mediocrisation could be "if you think you're starting to be too correct, spend some time being wrong instead". Instead, your examples suggest more "if you start losing learning progress (in the sense of gradient magnitude decreasing), weaken or shift your assumptions".

The latter is likely to happen frequently in any approximately exponentially converging system: you'll start off making great progress, then hit diminishing returns and start adding a new criterion. This is probably best described as methodological dilettantism; always get bored and start fiddling with other options when the going gets hard.

I also feel like there's something about estimated curvature here too: you may want to avoid maxima, or just try to find situations in which the direction of improvement remains robust under noise (so a gently sloping valley and a tilted plane might be equally interesting in the sense of representing clear forward progress).

What is a Carsean moral? Google only leads to this post.

Carse—https://en.wikipedia.org/wiki/Finite_and_Infinite_Games

This kind of matches the school of the "oblique function" in architecture, brainchild of architect Claude Parent and philosopher Paul Virilio (see http://ufba-fbua.com/wp-content/uploads/2012/05/2010_William-Layzell_Oblique-Function.pdf for a rather nice overview in English or https://boiteaoutils.blogspot.com/2010/09/oblique-function-by-claude-parent-and.html).

In essence, the "oblique architecture" aims to move beyond the quasi-isotropic vertical/horizontal architecture towards a more anisotropic way of building and shaping -- which in turn can serve as an inspiration for how to look at (and shape) idea space -- or playing fields that encourage infinite games over finite games.

Interestingly, Virilio himself thought that mankind and human consciousness are moving more and more towards a dynamic that needs to be supported by oblique (anisotropic) architecture and landscaping:

"In effect, the vertical-horizontal stationary position no longer corresponding to mankind’s own dynamic, architecture will henceforth have to express itself in the inclined plane, in order to situate itself on the new plane of human consciousness. Failing that, all architecture projects will rapidly become unusable."

- Paul Virilio