Social Media Consciousness

The most amazing consequence of the recent transition to social media consciousness is nothing.

My first essay for Ribbonfarm was a piece about changing subjective consciousness over the past few hundred years, focusing on what I called scholastic-industrial consciousness, which I claimed has largely displaced pre-literate forms of consciousness.

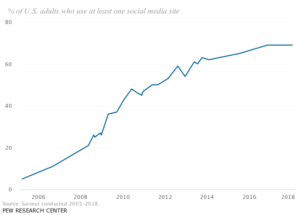

Three and a half years later, I’m interested in examining a different, much more recent kind of consciousness change: the transition to social media consciousness. Ironically, when I was writing in January of 2015, social media adoption had already begun to plateau in the United States. Between 2006 and 2015, two thirds of the adult population of the United States began using social media.

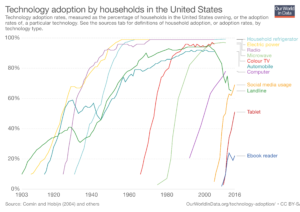

The slope of the adoption curve over time for social media looks about the same as for other technologies introduced over the past century or so, such as radio, television, telephones, and refrigerators.

During the period that these other technologies were being adopted, major social changes were taking place. The world of 1903 was very different from the world of 1985. It was easy to tell causal stories (whether accurate or not) about how technology was changing humanity.

The world of 2005, however, looks very similar to the world of 2018, with the exception of the ubiquity of social media and the rectangles through which we reach it. People still find it fun and lucrative to tell causal stories about how technology is changing humanity, of course. But when we are not engaged in this hobby, it is difficult to find hard evidence of a serious before-and-after effect. For instance, one of the most popular causal stories is that social media has caused increased mental illness, such as anxiety and depression. Writing in 2001 (updating in 2009), Hubert Dreyfus (On The Internet, p. 3) cites a 1998 study that concluded that using the internet caused people to experience more depressive symptoms and more loneliness. “When people were given access to the World Wide Web, they found themselves feeling isolated and depressed,” says Dreyfus. “This surprising discovery shows that the Internet user's disembodiment has profound and unexpected effects.” I’ve heard many such stories about the pernicious effects of social media. However, when measured by standard diagnostic criteria, rates of depression and anxiety did not change between 1990 and 2010, a time period that would presumably capture the supposedly “profound effects” of internet and social media use. (See, e.g., “Challenging the myth of an ‘epidemic’ of common mental disorders: Trends in the global prevalence of anxiety and depression between 1990 and 2010,” Baxter et al., 2014.)

The authors of that review did note that some studies using general well-being questionnaires found an increase in psychological distress over the relevant time period, but the questionnaires most likely to produce a positive result were ones that included somatic symptoms (e.g., heart palpitations), which could be associated with increasing obesity over the same time period. Also, none of these studies picked out a specific uptick during internet or social media adoption, as opposed to the rest of the time period.

Psychologically, people seem to be doing about the same. But haven’t suicide rates increased since the advent of social media? In the United States, yes, a little, but the inflection point is the year 2000, not 2006. 2000 was a local minimum in the age-adjusted suicide rate in the United States, probably the lowest in the past century. The rate has risen from that local minimum to a level that is normal for the past 80 years (the last time there was a big spike or change in the United States suicide rate was during the Great Depression). The rise has not been concentrated in adolescents, the heaviest users of social media, but rather is greatest for adults in their twenties through fifties. So it would be strange to attribute the change in suicide rate to social media.

Looking at facets of life that changed dramatically over short periods during the 20th century - marriage, divorce, fertility, urbanization, wealth, even suicide (around the financial panic of 1908 and the Great Depression) - it’s difficult to see a mark in the 21st century from social media. I haven’t seen any obvious discontinuities in time trends of indicators such that social media presents itself as a likely cause. Yes, a higher percentage of couples met on the internet, but once together, they seem to behave according to trends established long before. Everything seems shockingly the same as it was before social media, at least in the outside world. It’s only in the world newly revealed by the rectangles that change seems to have occurred.

So whatever the change social media causes in consciousness, it must leave humans outwardly largely as it found them. Their interior experiences may be different in content and character, but other than spending more time staring at screens (as an outsider would report), Social Media Humans act pretty much the same.

I suspect that there are multiple forms of social media consciousness. Each platform offers tools that open up a slightly different world, and different kinds of people are drawn to and continue to use each platform. Perhaps there is such a thing as Facebook consciousness and Twitter consciousness, though of course many people use both. Also, people use each platform in vastly different ways, paying attention to different streams of information and interacting in different ways. I think it’s likely that there exists a specifically social social media consciousness that I have no way of understanding, because I am not a very social person. All I can hope to capture here is the essential reduction of a sort of intellectual-dilettante social media consciousness, which probably describes less than 5% of the population but, on the other hand, probably describes a high proportion of Ribbonfarm readers.

Earlier I mentioned Hubert Dreyfus’ book On The Internet. He published the first edition in 2001, boldly claiming, among other things, that something like Google could never work. In his 2009 edition, Dreyfus happily admits he was wrong (in fact, very much to his credit, his whole teaching style and personal philosophy is based around taking risks and being open to being wrong in front of students), but doubles down on such propositions as “distance learning has failed.” As a teacher, I would also have predicted this before the success of such projects as Lambda School. My own experiences with classroom teaching emphasized bodily presence, as Dreyfus does, using the body to communicate in-the-moment reactions to students and relating student comments and questions to each other. Further, my few experiences with online teaching were boring and lame. Yet somehow, new forms of “distance learning” are managing to look better than traditional universities. I wouldn’t have predicted it, and I’m sure that Professor Dreyfus would be delighted to be proven wrong, and fascinated to see why.

The Risky Body in Cyberspace

I think that Dreyfus’ error rests on several misunderstandings about the world revealed by social media technology, which we used to call “cyberspace.” These misunderstandings were common and easy to make before the past decade or so:

- Cyberspace is disembodied.
- Cyberspace is anonymous.
- Cyberspace is safe and free from consequences.

A few years ago, Second Life seemed like the future; now, the closest thing to Second Life is massively multiplayer video game worlds. I don’t know much about them; sometimes I see screenshots or references to characters, but by and large, that world seems separate from the real world, which includes the real world as revealed by social media. I will leave the phenomenology of MMORPGs to those familiar with the matter. However, as a form of social media, the pseudo-embodied approach, in which an analogue of the body travels through three-dimensional space and expresses moods, has fallen out of fashion. In its place, there is pure text, text and images, or video. (My own social media world is made almost entirely of text, with some images, but I’m grateful for the video part, because it’s the only way I can watch Hubert Dreyfus teach a class.)

What I think is this: your body in cyberspace is just your regular body. You don’t get a different body. Your actual body (of which the eyes and fingers and amygdala are subsystems) gets a grip on the world of cyberspace through the interface of rectangle and software, and presses up against the bodies of others through this medium. In the core case of Twitter, the body is not observed visually by others unless one shows it (in carefully chosen glimpses); certainly, the body is not smelled or touched. The state of the body (again, including the brain or mind or whatever) can only be sensed through the text and images it chooses to show and respond to. Through these brief sentences, each participant gradually gets a sense of some number of others, and of “the others” as a public in general. Perhaps this understanding is impoverished in some ways compared to the understanding that would be obtained by someone in the same room, but I think it is enriched in other ways. For instance, I would have to be very lucky to meet a person in meatspace whose thoughts I found as interesting as those in my Twitter feed, and if I did get that lucky, it would take us a long time to work through our epistemic distance. I would have to show my body during this time, risking unwanted attention or jealousy or disgust, and I would have to experience the other person’s immediate emotional reaction to whatever I said. In person, I find I am so busy trying not to offend people or hurt their feelings that I rarely get to talk about anything interesting. In this way, the text-based interface is enriched from my perspective. In person, we wear clothes rather than go around naked; there are aspects of the body that it’s nice to be able to bracket and leave out of the interaction, such as the appearance of one’s genitals, buttocks, and breasts, or the state of one’s hair (my hair is quite messy right now).
I think it’s possible for the entire appearance of the body to be bracketed, as with clothing. The internet is a type of garment.

That is to say, the body doesn’t disappear in cyberspace. It is merely, to different degrees, bracketed, covered over, in certain of its aspects. It’s still there, typing, having emotional reactions, longing, being involved, being mad.

So cyberspace isn’t disembodied; the body is merely revealed and clothed in a different way, specific to the social setting. (Just as social settings vary in meatspace, they vary in cyberspace.) And, as is obvious from the telephone example, cyberspace is far from anonymous. Even when the name of the cyberspace entity doesn’t correspond to one’s government name, the identity can still be perfectly real, with praise or slights to the online identity felt as deeply as (if not more deeply than) those directed at one’s government identity. I have written before that the idea of a single unified identity is a gross oversimplification of human social reality; each person has many selves, depending on social relationships and setting. Similarly, many online identities can be real to a person, with varying levels of energy and commitment devoted to them at different times.

As for cyberspace being risk-free and safe from consequences, it’s tempting to merely snort-laugh, but this was a serious enough thing to think in 2001 (and even 2009) that one could devote a whole chapter to it, as Dreyfus does. He says (p. 70):

> to trust someone you have to make yourself vulnerable to him or her and they have to be vulnerable to you. Part of trust is based on the experience that the other does not take advantage of one's vulnerability. You have to be in the same room with someone who could physically hurt or publicly humiliate you and observe that they do not do so, in order to trust them and make yourself vulnerable to them in other ways.

As we moderns have been made aware during various spectacles, it is not necessary to be in the same room with someone in order to be hurt or humiliated by them. An online identity can become so real and so occupied that it is vulnerable to harm from purely online sources; the online world, now, is the real world, in a way it wasn’t in 2001. Dreyfus imagines, following Kierkegaard, a tension between the fun of playful experimentation and the lack of meaning without commitment. Here I quote Dreyfus at length, because here he transitions into what I think is a core aspect of social media consciousness, as opposed to its predecessor, movie consciousness (p. 87):

> Kierkegaard would surely argue that, while the Internet, like the public sphere and the press, does not prohibit unconditional commitments, in the end, it undermines them. Like a simulator, the Net manages to capture everything but the risk. Our imaginations can be drawn in, as they are in playing games and watching movies, and no doubt, if we are sufficiently involved to feel we are taking risks, such simulations can help us acquire skills, but in so far as games work by temporarily capturing our imaginations in limited domains, they cannot simulate serious commitments in the real world. Imagined commitments hold us only when our imaginations are captivated by the simulations before our ears and eyes. And that is what computer games and the Net offer us. But the risks are only imaginary and have no long-term consequences. The temptation is to live in a world of stimulating images and simulated commitments and thus to lead a simulated life. As Kierkegaard says of the present age, “it transforms the task itself into an unreal feat of artifice, and reality into a theatre.”

(Citations omitted, emphasis in original.)

The idea that the internet “captures everything but the risk” is something that seemed possible in 2001; now, since what we think of as the internet is largely composed of a drama of real people having their lives ruined or sometimes made better, it’s hard to imagine. The internet is real to us, now, in part because it obviously has consequences.

In theory, one could write up a phenomenology of every piece of technology: refrigerator consciousness, for instance. How does in-home refrigeration change how you relate to food, or feel about decay, or think about animals? Probably there is something interesting in every one. The transition that seems most salient to me, however, is the transition from movie consciousness, which began to dominate early in the 20th century and inform all aspects of life, fantasy, and even memory, to social media consciousness, which is informed by movie consciousness but represents a departure from it. The transition from movie consciousness to social media consciousness represents a move in the direction of greater involvement, greater complexity, and greater risk than was previously experienced. In many senses, social media consciousness is more of a direct involvement with reality than movie consciousness.

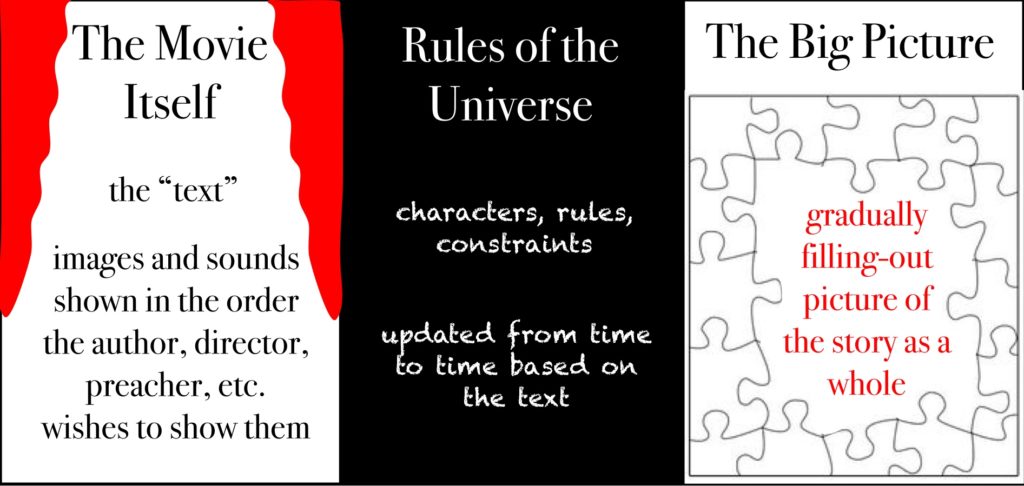

The reason movie consciousness could transition so smoothly into social media consciousness is that they are very similar, and are built on top of very old mental capacities. The best explanation of these capacities is that of Nick Lowe in his 2000 book The Classical Plot and the Invention of Western Narrative. In perceiving all kinds of narratives, Lowe says, from Greek tragedies to detective TV shows, we really take a three-part view of the ongoing situation. He envisions a triptych (my depiction, below): the first part is the movie screen, which is the text itself. This may be the text of a novel, or the carefully-chosen visual and audio tracks of a movie, or a sermon, or a campfire story. This “screen” changes rapidly. The second part is a sort of chalkboard, with notes about the rules of the universe. It is filled in quickly at the beginning, with typically only small revisions as the narrative progresses (though these slight revisions may be of great consequence). Finally, the third part is a sort of puzzle, in which the ongoing big-picture best guess of what’s going on with the story is presented. As Lowe describes it, the outer edges are filled in early and keep getting filled in in a relatively orderly way, but with the constant possibility of revision - even potentially scrapping a whole model that had been almost filled in (though this is rare).

When we interact with movies and other narratives, and even with sports and games, this three-part process is occurring. With a vocal, expressive crowd, you can feel it happening along with others in a social way. (I have heard rumors that the theater in Shakespeare’s time, and the pulpit in Paul’s time, were much more raucous places than their modern equivalents, though modern equivalents vary by culture, time, and participants.)

In movie culture, which includes books, radio programs, television, and all forms of media narrative that are carefully crafted beforehand (which I mean to include games, even though the precise outcome of the game is not known), this mode of cognition is essentially passive, and the development of mental models (frames two and three) is merely for the pleasure of it, not for any real-world consequence.

In the social media world, the three-part view still exists, but it is turned onto the world itself, rather than any particular narrative or narrative world. Nick Lowe explains (p. 27) that he’s talking about closed worlds:

> [W]hether at a conscious level or a subliminal, we are performing two higher-level cognitive operations all the time we read. First, we are making a constant series of checks and comparisons between our timelike and our timeless models of the story. And second, we are continuously refining the hologram by extrapolation - inductively and deductively projecting conclusions about the story from the narrative rules supplied.
>
> Now, this process of extrapolation is ultimately made possible by a property of narrative whose implications for plotting are central to my model. In the coding of a story into a narrative text, the universe of the story is necessarily presented as a closed system. The degree of closure will vary widely, according to the needs of the particular text. But the levels on which such closure operates are more or less constant across all genres, modes, and cultures of narrative, because they are intrinsic to the narrative process itself.

For instance, narrative worlds are closed in time: they have a beginning and an end.

This mental apparatus, however, was presumably developed for open-world exploration, that is, interacting with other people, through whatever medium. There is continuity between movie consciousness and social media consciousness, but there is also continuity between forms of consciousness I’d imagined to be extinct in 2015 and that of modernity.

Narratives are created to be absorbing when taken in through the three-part cognitive model described above. But what about regular reality? Where would that three-part apparatus come from, except as a way to deal with regular reality? What’s surprising is not that people can become absorbed in the “fake world” of social media. What’s surprising, to me at least, is that people can find the actual world itself, as revealed through social media, as exciting a target for narrative interaction as movies, if not more so.

City Trees and Wilderness Trees

What I see as the essential difference between pre-made narratives and ongoing social media narrative engagement is that the former is tame, and the latter is wild. I will illustrate what I mean by this with some photos of plants.

First, think about the city plants that you see. Most of these are probably trees, flowers, shrubs, and lawns. A city tree is usually tended so that it looks like the idea of a tree: symmetrical, lush, dead parts removed, etc. The dead parts of city plants get removed, because they are considered unattractive, and because they pose a fire hazard. City plants generally depend on humans for their reproduction. It’s a safe life, to call back to Dreyfus’ interpretation of Kierkegaard. I grew up in the woods and spend a lot of time in the wilderness, but when I think of a tree, I think of a city tree. (I suspect that this is a result of my own underlying movie consciousness; city trees look like the idea of trees, as portrayed in movies.)

In the wilderness, however, trees are all over the place. Not only every scrap of dirt, but every rock, trickle of water, leaf, cone, ray of sunlight, etc. is up for grabs. Each organism, in seasonal waves along with its conspecifics, takes the world as it is, and fights for everything. Nothing is given. Nothing is tidy. Especially in ecologies featuring plants with “fire-embracing life histories,” in which many plants benefit from fire, wild plants don’t self-prune, and you end up with weird-looking asymmetrical monstrosities that appear half dead and half alive, instead of tidy, symmetrical “trees.” Everybody is simultaneously trying to use everything else, including each other. Nothing is safe. It’s at once beautiful, alien, and profoundly tiring. I love the wilderness, but the order and safety of the city, being built for the comfort of beings like me at all levels, is lovely to come back to.

Movie consciousness foregrounded safe, tidy worlds, revealed in movies and other narrative media, and distinct from the “real world.” Social media consciousness, on the other hand, foregrounds a wild, unsafe, risky world in which everything is eating everything else constantly, and everything changes from minute to minute. This is not to say that the creators of books and movies did not do this; however, I argue, they did it on a longer time scale, and fewer people were involved in the process. One director performs an homage to another in a work that takes months to make; meanwhile, on social media, one sentence-long quotation might be popularized, parodied, associated with images, associated with past texts, elaborated on, mocked, and made obsolete within hours. Not just texts, but whole identities, are vulnerable to this process. Social media requires the shielding of the body (the garment role), precisely because it is so profoundly unsafe.

3 Comments

Excellent essay, Sarah. I wonder if we will see a decline in prescriptive experiences as more people become accustomed to the wildness of a social media consciousness. Of course, our entire education system would need to be upended if we truly want to give other people an unprescribed life experience.

It has occurred to me that we have a somewhat distorted idea of social risk over social media.

People, including me, seem more inclined to take expressive risks over social media and give voice to thoughts and emotions they might not in a face-to-face group (think lust or hatred). These risks "feel" safe and anonymous, but of course, they are not. They can be preserved for years and signal boosted to millions of people, potentially ruining careers or reputations, whereas a conversational misstep will rarely be remembered beyond a few people. We should take more risks in our face to face interactions and be more cautious on social media, but my brain, and I suspect a lot of brains, are not adapted to this reality.

Thank you for the wonderful post!

Do you think you could provide the source for these claims? ... "Psychologically, people seem to be doing about the same. ... in the United States ... the inflection point is the year 2000, not 2006. 2000 was a local minimum in the age-adjusted suicide rate in the United States, probably the lowest in the past century. The rate has risen from that local minimum to a level that is normal for the past 80 years"

I'd love to know where you drew this conclusion from. Thank you!