Justifiable AI

Can an artificial intelligence break the law? Suppose one did. Would you take it to court? Would you make it testify, to swear to tell the truth, the whole truth, and nothing but the truth? What if I told you that an AI can do at most two, and that the result will be ducks, dogs, or kangaroos?

There are many efforts to design AIs that can explain their reasoning. I suspect they are not going to work out. We have a hard enough time explaining the implications of regular science, and the stuff we call AI is basically pre-scientific. There's little theory or causation, only correlation. We truly don't know how they work. And yet we can't stop anthropomorphizing the damned things. Expecting a glorified syllogism to stand up on its hind legs and explain its corner cases is laughable. It's also beside the point, because there is probably a better way to accomplish society's goals.

There is widespread support for AIs that can show their work. The European Union is looking to implement a right to explanation over algorithmic decisions about its citizens. This is reasonable; the "it's just an algorithm" excuse carries obvious moral hazard. Two core principles of democratic government are equality of treatment and accountability. If I take mortgage applications from everyone but only lend money to white women, eventually I'll get in trouble. At the least I'd have to explain myself. If decision-making is outsourced to AI I may not be able to explain why it did what it did.

But there's a whiff of paradox to demanding human-scale relatability from machines designed to be superhuman. Imagine that you had AI that thinks out loud as it goes. You might get a satisfying narrative about why a specific decision was made, but there would be little basis to trust it. The explanations you demand will almost certainly be about decisions in the margin, so they won't necessarily shed light on other cases either. Worse, if an explanation could fully describe all the workings of the AI in a way a human could follow, that explanation would itself be a simpler version of the algorithm it's trying to explain. There'd be no need to mess around with oodles of gradients and weights and training data in the first place. [0]

Mathematicians have been struggling with something like this for decades. How can you trust a computer-generated proof that can only be checked by computer? One suspects a slug of question-begging, or a confusion about what "proof" really means. Or maybe that's the feel of humanity's toes curled on the edge of a chasm we can't cross alone.

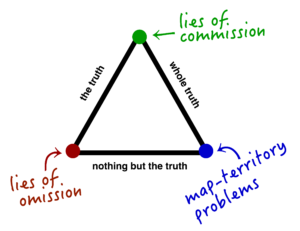

It's a pretty problem. A good place to start is by breaking down that courtroom promise: truth, whole-truth, or nothing-but-the-truth. This triplet is meant to head off the clever little ways truth can be manipulated, accidentally or not. I can "tell the truth" by saying at least one thing that reflects actual reality. That doesn't stop me from holding back other facts (hence the call for whole-truth), or mixing in falsehoods (nothing-but-the-truth). Like many things related to justice, guaranteeing all three at once is an ideal that's only rarely achieved, and then only in limited domains.

Arguably, the greatest success of AI-like software so far has been in spam filtering. I hesitate to write that because I don't want to hear your complaints about how much spam you get. Believe me, it would be a lot worse without those things on duty. But compared to the applications we're throwing AIs at now, spam filters operate over much simpler possibility spaces and for much (much) lower stakes.

For anything really complicated you only get to optimize for two sides of the truth trilemma. The corners of the trilemma represent three kinds of epistemic blindspot:

- Truth + nothing-but leads to problems with recall, or lies of omission. (ducks)

- Truth + whole-truth can fail on precision, or lies of commission. (dogs)

- Whole-truth + nothing-but may be internally consistent and complete as possible, but can't guarantee agreement with the ground truth of reality. (kangaroos)
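To make the duck and dog corners concrete, here is a minimal sketch of how recall and precision are usually scored. The names and toy data are hypothetical, invented for illustration. The kangaroo corner does not show up in a scorecard like this, because the "actual" set is itself part of the model.

```python
def precision_recall(predicted: set, actual: set) -> tuple[float, float]:
    """Return (precision, recall) for a set of predicted positives."""
    true_positives = len(predicted & actual)
    # Precision: of everything we claimed, how much was right?
    precision = true_positives / len(predicted) if predicted else 1.0
    # Recall: of everything that was right, how much did we claim?
    recall = true_positives / len(actual) if actual else 1.0
    return precision, recall

# Hypothetical ground truth: the images that really are dogs.
actual_dogs = {"rex", "fido", "lassie", "toto"}

# A "duck" system: everything it says is true, but it leaves things out.
duck_system = {"rex", "fido"}

# A "dog" system: it catches every real dog, plus some things that are not.
dog_system = {"rex", "fido", "lassie", "toto", "pink_squiggle", "tabby_cat"}

duck_precision, duck_recall = precision_recall(duck_system, actual_dogs)
dog_precision, dog_recall = precision_recall(dog_system, actual_dogs)

print(f"duck: precision={duck_precision:.2f} recall={duck_recall:.2f}")
print(f"dog:  precision={dog_precision:.2f} recall={dog_recall:.2f}")
```

The duck system scores perfect precision and poor recall; the dog system does the reverse.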

Ducking autocorrect

Truth + nothing-but-the-truth systems give out 100% correct answers aligned with reality, until they don't. Take spellcheckers. They are only as good as the statistics they are given. The word "ducking" is a hundred times less frequent than other words I could mention, but corporate prissiness leads to a lie of omission known as "ducking autocorrect". We type one thing but the software insists on changing it to something else. Duck / recall problems are the easiest to fix. You add the fucking word to your personal dictionary and move on. [1] If you want to change the default then your argument is with society, not the computer. These kinds of systems are inherently limited because they depend on strict classification & curation of their data. But for many purposes they work well.

Let dreaming dogs lie

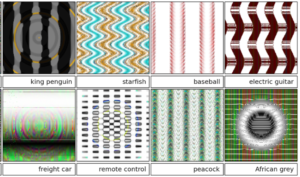

Systems designed for truth + whole-truth open the door to lies of commission, because they must be prepared to answer any question over a very large domain. But by allowing all possibilities (in order to capture the whole truth), they weaken their ability to filter out falsehood. For example, it's fairly easy to fool an AI image classifier by probing its responses to random images, or altering "good" images in subtle ways to mislead it. You can get one to swear blind that a funny pink squiggle is in fact a poodle, or that a tabby cat is a bowl of guac.

These dog (aka precision) problems are harder than duck problems because of the underlying math. There are always vastly more fake images than real ones.

Imagine you have an AI trained to recognize different breeds of dogs. While you're at it, imagine it's a magical AI that runs infinitely fast. You're going to need magic because the total number of possible HD images is unthinkably huge. The number itself is millions of digits long. Every sequence of pictures you can imagine is in that set of possibilities: every dog in every lighting condition, but also every cat and rat that ever lived, every word ever written, every second of TV ever broadcast or ever will, a selfie of you with tomorrow's winning lottery ticket (plus every losing one), a movie of the real William Shakespeare writing Hamlet, and a dog doing the same. And then trillions of subtle variations on all of the above. And more.

Nearly all of the possible images are randomish noise. Only an itty bitty fraction are pictures of things we would recognize. A smaller fraction of that is of real things. Your magical AI sorts images into an even tinier number of pigeonholes, and every image has to go into at least one. Because of the pigeonhole principle, that means the AI is almost guaranteed to misclassify a huge number of fake images as good doggies and vice versa.
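For a rough sense of scale, the sketch below counts the decimal digits in the number of possible images. The format is an assumption on my part (1280x720 pixels at 24 bits per pixel); "HD" could mean other resolutions, which would only make the number longer.

```python
import math

# Assumed image format, for illustration only.
WIDTH, HEIGHT, BITS_PER_PIXEL = 1280, 720, 24

def digits_in_image_count(width: int, height: int, bits_per_pixel: int) -> int:
    """Decimal digits in 2**(width * height * bits_per_pixel)."""
    total_bits = width * height * bits_per_pixel
    # A power of two 2**n has floor(n * log10(2)) + 1 decimal digits.
    return math.floor(total_bits * math.log10(2)) + 1

digits = digits_in_image_count(WIDTH, HEIGHT, BITS_PER_PIXEL)
categories = 2  # dog / not-dog

print(f"possible images: a number about {digits:,} digits long")
print(f"pigeonholes to sort them into: {categories}")
```

Under these assumptions the count of possible images is a number several million digits long, set against a handful of output categories.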

And just in case you're feeling superior at this point, read this paper about images tuned to fool both humans and AIs. Nature's creatures are just as vulnerable to pattern-matching bugs. In pre-computer days Nikolaas Tinbergen used painted sticks to hack the visual cortexes of birds. After lots of iteration he found that a red stick with three white stripes elicited a pecking/feeding response way stronger than the hatchlings' reaction to seeing their actual mothers.

We rarely think about these potential almost-images, these invisible clouds of Dog Matter. Sure, they "exist" in some thin philosophical sense, but they are effectively inaccessible from the real world. You can't process all possible images; the universe would burn out first. That means we can just pretend they're not really there, right? Right?

So this is where shit gets weird. It turns out that you can run an AI backward. Instead of tagging existing images based on labeled boxes of traits, you take a trait-box and use it to generate new images. These images by definition pass the AI's test for dogginess but to us are definitely not real dogs. There are deep connections between computation, information, and compression that are beyond the scope of this article, but the upshot is that any sufficiently complex model-based representation of a thing is also a generator of that thing. A recognizer of good doggies can be inverted to summon fake ones from the void.

This fact was not widely appreciated even a few years ago. Today, uncannily realistic fake videos (yes, including fake porn) are quickly becoming a problem.

Kangaroo-complete

Whole-truth + nothing-but is the most difficult of the three to achieve. You train on as much data as you can gather; you run endless simulations to discover lingering bugs. You maybe even use the fake-doggie phenomenon to your advantage, making two AIs continuously try to fool each other and learn how to be less fragile. After all that your door prize is the map-territory problem, whether the resulting model of reality really reflects actual reality. Here be hidden assumptions, accidental bias, and unknown-unknowns.

Volvo recently announced that they were working on a peculiar wrinkle in their safety systems. Algorithms for collision avoidance, trained on the moose / deer / etc of the Arctic, were failing around kangaroos. The difficulty is that kangaroos jump. Everything else in the car AI's experience moves in predictable paths along the ground. Jumping messed with an assumption so deep that we humans seldom say it out loud, because we acquire it before we can speak. A roo standing still would appear to be small and close. The same roo in the air would fool the system's sense of perspective, making it seem larger and farther than it was. Objects in the real world do not instantly double in size and move twice as far away, but to the car they appeared to do so. It's a new species of optical illusion.

To solve this you can't just add "kangaroo" to the Big Dictionary Of Animals To Avoid. Their movements are too erratic --doubly so because animals in turn have a hard time dealing with the unnatural motion of cars. As far as I can tell this will require a serious rethink of the car's apprehension of objects in motion.

There is no known general solution to kangaroo problems. While AIs can do a scary number of things we can't, they are just as firmly lashed to the epistemic wheel as we are. Note that all through these examples we can barely get a handle on the gross external behavior of the AIs, let alone what's really going on inside.

Eppure calcolano

And yet they compute. AIs are not going away, so we better learn to deal with them. One obstacle is that "explainable" is an overloaded term. Zachary Lipton recently made a good start on teasing out what we talk about when we talk about understanding AI.

Decomposability: This means that each part, input, parameter, and calculation can be examined and understood separately. You can start with an overall algorithm, eg looks-like + talks-like + walks-like = animal, and then drill down into the implementation of looks-like, etc, and test each separately. Neural nets, especially the deep learning flavors, cannot be easily broken down like this.

Simulatability via small models: Neural nets may not be easy to take apart, but many are small enough to hold in your head. Freshman compsci students are first led through the venerable tf/idf algorithm, which can be explained in a paragraph. Then the teacher drops the ah-ha moment when they demonstrate how with a couple of twists tf/idf is equivalent to a simple Bayesian net. This is essentially the gateway drug to machine learning.

Simulatability via short paths: Even though you can't possibly read through a dictionary with a trillion entries, you can find any item in it in forty steps or less. In the same way an AI's model may have millions of nodes, but arranged so that using the model to make a single inference only touches a small number of them. In theory a human could follow along.

Algorithmic transparency: This one is a bit more technical, but this means that the algorithm's behavior is well-defined. It can be proven to do certain things, like arrive at a unique answer, even with inputs we haven't tested yet. Deep learning models do not come with this assurance because (surprise!) we have no idea how they actually work. [2]
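The "forty steps" figure for the trillion-entry dictionary comes from repeatedly halving a sorted list. Here is a minimal sketch of that lookup; the function names are mine, and a lazy `range` stands in for the sorted dictionary so nothing has to be held in memory.

```python
def worst_case_steps(n_entries: int) -> int:
    """Worst-case number of probes a binary search needs over n sorted entries."""
    # floor(log2(n)) + 1, computed exactly with integer arithmetic.
    return n_entries.bit_length()

def binary_search(items, target):
    """Return (index, steps) for target in sorted items, or (-1, steps) if absent."""
    low, high, steps = 0, len(items) - 1, 0
    while low <= high:
        steps += 1
        mid = low + (high - low) // 2
        if items[mid] == target:
            return mid, steps
        if items[mid] < target:
            low = mid + 1   # target is in the upper half
        else:
            high = mid - 1  # target is in the lower half
    return -1, steps

TRILLION = 10**12
dictionary = range(TRILLION)  # stand-in for a sorted trillion-entry dictionary

index, steps = binary_search(dictionary, 123_456_789)
print(f"found entry {index} in {steps} steps "
      f"(worst case {worst_case_steps(TRILLION)})")
```

Each probe throws away half of what remains, so a human following along only ever looks at a few dozen entries out of the trillion.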

A statistical spellchecker, which is not even close to what we call AI these days, passes all four of these tests. Lipton notes that humans pass none. Presumably, somewhere in the middle is a sweet spot of predictive power and intuitive operation. But we don't know. The mathematicians we left scratching their heads a thousand words back can't help you either.

Artificial humility

What we do know is that asking for "just so" narrative explanations from AI is not going to work. Testimony is a preliterate tradition with well-known failure modes even within our own species. Think about it this way: do you really want to unleash these things on the task of optimizing for convincing excuses?

AI that can be grasped intuitively would be a good thing, if for no other reason than to help us build better ones. An inscrutable djinn is just as spooky & frustrating to scientists as it is to you. But the real issue is not that AIs must be explainable, but justifiable.

Even if it was impossible to crack open the black boxes, you can still gain more assurance about them. The most obvious (and expensive) way is to have an odd number of AIs, each programmed differently and using different training data, that vote independently. Avionics does this all the time with important components whose behavior is not guaranteed. The idea is that failures are essentially random, and so the chances of diverse AIs being prey to the same bug are tiny. There has been good work on something called generative adversarial training, ie, having different AIs spar and improve each other. I'm not entirely convinced this will do the trick; there may not be that many ways to scan a cat.
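The voting idea can be sketched in a few lines. This is an illustration, not a description of any real avionics system: the function names are hypothetical, and the failure arithmetic assumes the voters fail independently, which is exactly the assumption in doubt if there aren't that many ways to scan a cat.

```python
from collections import Counter
from math import comb

def majority_vote(answers):
    """Return the most common answer from an odd number of voters."""
    if len(answers) % 2 == 0:
        raise ValueError("use an odd number of voters")
    # With yes/no answers an odd count cannot tie; with more than two
    # possible answers a tie is still possible and the first seen wins.
    winner, _count = Counter(answers).most_common(1)[0]
    return winner

def majority_failure_probability(n_voters: int, p_fail: float) -> float:
    """Chance that more than half of n voters fail, ASSUMING independence."""
    needed = n_voters // 2 + 1
    return sum(
        comb(n_voters, k) * p_fail**k * (1 - p_fail) ** (n_voters - k)
        for k in range(needed, n_voters + 1)
    )

decision = majority_vote(["approve", "deny", "approve"])
risk = majority_failure_probability(n_voters=3, p_fail=0.1)
print(f"decision={decision}, chance the majority is wrong={risk:.3f}")
```

If the voters share a blind spot, the independence assumption breaks and the real risk is closer to that of a single voter.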

When you quiz a technical person about all this, their usual gut reaction is to publish the data and stats. How can you argue with something that seems to work? But this just puts Descartes before the horse; you can't prove how something will behave in the big bad old world until you put it out there. And purely statistical arguments are not compelling to most of the public, nor are they usually sufficient under the law.

Means and motive matter as much as ends. AIs don't operate in isolation. Somebody designs them, somebody gathers the data to train them, somebody decides how to use the answers they give. Those human-scale decisions are --or should be-- documented and understandable, especially for AIs operating in larger domains for higher stakes. It's natural to want to ask a programmer how you can trust an AI. More revealing is to ask why they do.

Notes

[0] I think this would be a good practice in general. If you can reduce an AI to pseudocode then you don't need that AI. The real danger of AI isn’t some take-over-the-world fantasy, but humans thoughtlessly using it everywhere, like how we used to paint clock faces with radium.

[1] Words I had to teach my computer for this article: compsci, popsci, trilemma, simulatability, relatability, roo, imagespace, Plancks, Nikolaas, and randomish. Words it taught me: djinn, Arctic, and anthropomorphizing.

[2] Correctness over all inputs is not easy to guarantee even for regular programs. A bug in one of the most basic, widespread, literally textbook algorithms lay dormant for over twenty years before it triggered in the wild in 2006. Sleep tight.

10 Comments

"Your magical AI sorts images into an even tinier number of pigeonholes, and every image has to go into at least one. Because of the pigeonhole principle, that means the AI is almost guaranteed to misclassify a huge number of fake images as good doggies and vice versa."

What does the pigeonhole principle have to do with this? At my glance, mathematically there's nothing about the pigeonhole principle which says that an AI couldn't feasibly classify all random noise as random noise and all dogs as dogs. Indeed, the pigeonhole principle doesn't even apply, since we can fit more than one image in one pigeonhole, that is, there exist multiple images of dogs, whereas in the pigeonhole principle we assume two pigeons cannot be in the same hole.

"To do so requires the formal statement of the pigeonhole principle, which is "there does not exist an injective function whose codomain is smaller than its domain"."

https://en.wikipedia.org/wiki/Pigeonhole_principle

Neural nets however, aren't injective.

That's not quite what the PP says. If you have 2 pigeonholes and 3 pigeons, then at least one hole has multiple pigeons.

Your AI sorts images into 2 categories, and there are 10^6,500,000 images to sort. Yes, it is possible to imagine an AI perfectly sorting all the dogs and non-dogs correctly (leaving aside how you define "correctly" let alone check it). But it would have to be accurate to millions of digits of precision. That is as good as infinitely unlikely for our purposes.

I don't buy the hype that we can't understand an AI's reasoning. Why can't you just add logging, track where it makes choices, and then trace through the decision tree that way?

Take a good look at the picture of the "dog". According to the AI that (complements the one that) generated it, that is very definitely a picture of a dog. In fact, it is as doglike as a picture can be, based on a model of dog pictures that it developed based on statistical evaluation of its training corpus.

Brains, on the other hand, perceive that it is clearly messed up. We evaluate dogness in a manner which, to the extent that we understand it at all, appears to be completely different. The average human being is incredibly bad at pixel-level statistical analysis of a corpus of thousands of training images. We're even worse at parallel processing; each "neuron" in a neural net is optimizing locally. There are thousands to millions of neurons with their own decision trees running in parallel and exchanging data with neighboring neurons.

We're already having problems with algorithms used in criminal justice. These algorithms are used in decisions about setting bail, sentencing, and granting probation, and they purport to assess how likely each defendant/convict is to be rearrested if released. They take into account factors such as the person's age, sex, history of arrest, extent of ties within the community, marital status, abuse of alcohol or other substances, education, etc. Naturally, the question of whether and when such assessments are racist has reached the courts several times. See, for example: https://www.wisbar.org/NewsPublications/InsideTrack/Pages/Article.aspx?Volume=9&Issue=14&ArticleID=25730

I would expect there are also issues with trading bots finding spoofing strategies whose application is prohibited by SEC regulations.

BTW it would be fun to run an ALife / Core War style competition to figure out if spoofing is stable, once order book access is enabled ( like Tit-For-Tat in game theoretic simulations ).

Late to the party - I agree with much of the gist of the post. However, I'd like to know what the confidence level of the AI predictions of "king penguin", "starfish", etc, was. My hunch is that the algorithm had low confidence that these were the correct predictions.

Typically the image classification algorithm will supply confidence levels for each prediction. Given a clear, unobstructed picture of a person's face, and only a person's face, it might predict "human face" with confidence 0.95. Show it a picture of a dog and a kitten, with the kitten's image partially occluded, and it might predict "dog - 0.7", "cat - 0.25". Show it a dog's paw and a bunch of other junk and you might get something like "dog - 0.25", "frog - 0.1" and a bunch of other unlikely stuff.

The way the classifier works, if it sees some random geometric pattern, it will absolutely make predictions, but it will usually have low confidence values for those predictions. If it is predicting "king penguin", I'd wager donuts to dimes that it predicted that with low confidence values. It's a feature of the last steps in the algorithm to reduce a many layered prediction to ONE PREDICTION... and these bizarre classifications are probably mostly due to forcing a classifier to make a choice from many ill fitting categories.

You can fool algorithms for sure, but this is a case of using a complex algorithm wrongly, IMO, not a case of fooling it.

Source: I do AI

I take it back! The link you shared,

http://www.evolvingai.org/fooling

explains that these are algorithmic attacks on the classifier, and that the classifier is classifying these as king penguin, etc, with high confidence.

What this tells me is:

(1) I'm wrong a lot

(2) Deep learning approaches that are going into production need to generate these attack images, add them to their training data, and iterate training until they have algos that are more resilient. And/or make sure some of the layers do things that prevent these attacks... what that is I don't know.