Glitches, uh, find a way

Core scientific theories often sound tautological, yet we keep them around because they lead to useful ideas. The prime theorem of biology is that life comes from life. Life coming from life doesn't mean that spontaneous generation is impossible. It had to have happened at least once. But it's so much less efficient than reproduction that it no longer has a chance. Before two amino acids can start rubbing together in just the right way on the road to making Life 2.0, some version 1.0 bastard comes along and eats it. That leads you to the idea of natural selection, evolution, dimorphic sex, and the rest of it.

The prime theorem of computing is that any computer can simulate any other. But for all of the talk about "artificial life", the two mechanisms are subtly different. Lifeforms compete by turning each other into food. Computerforms compete by turning each other into memes.The physical device you fondle and peck at and casually call "the computer" is not a single entity. It's more like a coral reef, a delicate snarl of nested habitats made up of critters obliviously playing host to and being hosted by other critters. The bacteria doesn't know it's in parasite in a fish's gut. The fish doesn't care that its shelter is made of living coral.

Here is the computer game Lemmings, written 30 years ago for the IBM PC, running unchanged in a modern browser.

The Lemmings game doesn't "know" that the DOS operating system it's running on is an imitation created in the early 21st century. Neither does DOSBox itself "know" that the CPU it's running on is a sham implemented in Javascript, whose interpreter has been in turn replaced with a just-in-time compiler, which spits out instructions for a virtual CPU, that get translated into "real" instructions for the "real" CPU, which is itself just an abstraction implemented in microcode.

The reef analogy breaks down at this point, because there is no way to get a fish to host coral instead of the other way around. But for computerforms that two-way potential is what defines them. Any "Turing-equivalent" computational thing, be it physical or virtual, is capable of simulating the behavior of any other Turing-equivalent thing. For eighty years now every single model of computation has been proven to be functionally identical [0].

That means it's possible to take a 90s-era 386 and simulate your modern computer on it, simulating Javascript simulating DOSBox simulating those hapless Lemmings. It would need a lot more memory and would run absurdly slow, but that's the only practical problem.

How that works comes down to what we mean by "computer". In a (very) basic sense, a computer is anything that has 1) a bunch of little cells that can represent numbers, 2) a machine that can move to a given cell and either read the number it holds or write a new one, and 3) a specially-crafted set of rules that govern the interactions between 1) and 2). Some children's games have been proven to be Turing-complete. Hell, Lemmings itself is Turing complete.

Imagine we have a workable (if tedious) kind of computer made of gears & balls or cleverly-arranged dominoes or something. You're not doing the math when you operate it, you're just mechanically following a numbered list of rules. In theory you could be replaced by a machine and the whole thing would operate by itself. The clever bit comes when you ask how the rules would be encoded in such a mechanism. After all, both the cells and the rules are really just numbers... and that was the insight of Goedel, Turing, and Church. Programs are data. Data are numbers. Numbers are rules. Rules are programs. All of them always carrying the potential to swap places. Computer A can always simulate computer B and vice-versa.

So a computerform's main survival trick is to swallow other computers whole and put them to work. As a computer memeifies other computers and absorbs their capabilities, it becomes more useful and therefore more fit for survival. That's why Apple put years into working out how to emulate the 68k on PowerPC, and later on emulating Power on the Intel chip. People won't buy a computer that can't run the programs they already own. In fact, the very first thing people asked when Rosetta was announced was whether the 68k emulator could run inside the PowerPC emulator running on Intel. The answer was no, but only because Apple didn't want the support headache.Read in tooth and claw

Absorption is the nicer scenario. The flip side is when a program forces an innocent computer to host it without its consent or even knowledge. This can happen in the damnedest of places because the bar for what makes a computer a computer is so low. People create Turing-equivalent systems all the time without meaning to, and produce another potential niche for sneaky programs to set up shop.Last year a particularly stubborn programmer tricked a GameBoy into treating the item inventory of Pokémon Yellow as a program execution stack. Both the item list and the GameBoy execution stack are just ordered lists of 8-bit numbers. When code is data and data is code, all you have to do is get the CPU to jump the tracks and start plowing through a sequence of bits you control. He "programmed" the computer by buying and storing game items in a particular order. The result is hardly believable: he tricked Pokémon Yellow into pretending to be two other editions of Pokémon, Tetris, Zelda, Super Mario Bros, and a video player. Game Over.

This isn't limited to fun game hacks. People have exploited the Turing-completeness of the routing of network packets, the memory subsystem (bypassing CPU entirely!), and even Wikipedia's article template.

The next step up in evil-geniushood is of course combining the techniques. First you trick a computer into running you, then cocoon your victim in a virtual prison while you take control of the underlying hardware. I had the privilege of working with one of the sneaky so-and-sos who wrote the SubVirt paper, and I was careful never to leave my computer alone with him."This new type of malware, which we call a virtual-machine based rootkit (VMBR), installs a virtual-machine monitor underneath an existing operating system and hoists the original operating system into a virtual machine. Virtual-machine based rootkits are hard to detect and remove because their state cannot be accessed by software running in the target system. Further, VMBRs support general-purpose malicious services by allowing such services to run in a separate operating system that is protected from the target system."

Subliminal computing

How can you even know that a computerform has been swallowed by another? Or more generally, that a system is doing what you think it's doing? Anything physical can be made virtual. But it's important to remember that everything virtual eventually becomes physical. That may be the only fact that saves us.A few weeks ago a friend asked how you could ever be sure whether the those voice-activated devices like Alexa and Google Home really only start parsing speech after they hear their wake word, or if they just listen all the time. There's a lot of ways to go about it: put the code in a debugger, watch for network traffic, or carefully time the responses. But there are also lots of ways to hide from that kind of probing. Ironically, the most robust method I can think of to keep Alexa honest is to put a microphone next to it.

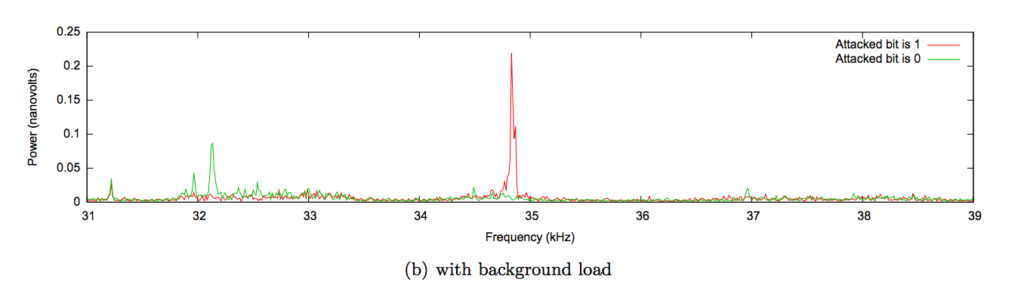

All electronics throw off EM and ultrasonic squeals as they operate. This has been used in the past to learn quite a lot about the inner workings of computers, even to the point of extracting private keys.

So put a mic next to an Alexa and speak the wake word. Then speak similar words, then regular conversation, play some TV, and so on. Record and analyze the pattern of squeals. If it's really behaving what they say it does --and I have no reason to think otherwise-- then it should squeal in distinctive patterns if it is awake versus not.

But there are even more vicious and interesting Turing hacks that can evade gross physical analysis. My first reaction after reading the "A2" hardware backdoor paper was something like that's it, I fucking give up!

Hardware backdoors are exactly what they sound like: a hidden way to get access to a computer without the usual permission, often created when the computer is. With previous methods the attacker's main problem wasn't hiding the backdoor but (so to speak) hiding the knob. The attacker would have to add a ton of extra digital logic to the chip to actively listen for a secret pattern of signals and then grant the attacker access. This extra logic consumes extra power and changes the timing of circuits, making it possible to tell infected chips apart from clean ones. It's not easy, but it is straightforward.

And that's the scary thing about the A2 hack. By adding a very small number of capacitors and gates, the researchers were able to create a parasitic analog-digital hybrid computer overlaid onto the existing digital one. The difference between an infected chip and clean chip could be as little as one gate out of billions, and the observable behavior pretty much identical.

Analog computers are a mostly-forgotten alternative to digital. They are like a theremin versus a piano: powerful and nuanced but tricky to use. Edward Lorenz first plotted his strange attractors on an analog system, which would have been infeasible on the digital ones of the 60s. In the end digital computers won because they are easier to program and get consistent output from.

But digital computers live in an analog reality, constantly bludgeoning messy real-world measurements into clean ones and zeros. Wires laid down close together can interfere with each other electrically and a lot of work is put into suppressing those effects. For example, the transistors that store your data do so by flipping between two states: high voltage and low. A reading above (say) 3 volts is strictly interpreted as a one and anything lower as a zero, and any nuance is deliberately ignored. It's in that blindspot that analog computation can happen.

More to the point, analog computers are great at efficient signal processing. Because they embrace the fuzz & ambiguity they are a lot more simple, circuit-wise, and thus easier to hide. They don't have to actively look for trigger signals, they just kind of shift their nature in the presence of the right signal.

Think of all those adventure tropes about the secret cave with the map on the floor that only shows where the treasure is once a year at dawn when a beam of light hits it in the right way and the wind makes the trees sing the anthem of the Forgotten People, etc etc. The cave isn't actively looking for the right moment, it's kind of shaped in the negative image of the right moment, exploiting complex interactions in a specific environment. At other times it doesn't look like anything at all.

In short, these underhanded bastards modeled a computer chip and its incidental electric effects with enough detail to allow them to subvert those effects to their own purposes. With a few changes in just the right places they summoned into being a shadow computer of completely alien design, leeching voltage from and largely made up of the existing, legitimate transistors of its victim. This kind of backdoor is invisible to the standard methods for detecting malicious digital computers because it's not a digital computer. And in theory at least, it's just as powerful as any other. Sleep tight.

9 Comments

I'm not entirely sure I understand what you mean by "turning each other into memes"

Memes are ideas that replicate. When one computer is co-opted by another in some ways, how is that turning the host computer into a meme? Do you mean that by enslaving the host computer to its functional purpose, it has replicated the function?

Like in the GameBoy example, the DNA of the Pokemon game has been coopted to express that of the Tetris game for instance.

So sounds like what's replicating is programs rather than computers themselves.

I mean they are turned into literal Dawkinesque memes. The 386 CPU is now a digital file that is copied and spread to the degree that it is useful for running other popular memes like the Lemmings game.

That fits the software-eats-hardware example, but what about the rest. How about the gameboy hack or the A1 sidechannel parasite computer thingie? How do they fit the "computers turn each other into memes" idea?

It's kind of a complementary duality, "eating" and "reproduction", that play out differently with life and computers.

The way life perpetuates makes eating a defining characteristic. Or maybe it's how eating is a core chemical effect that leads to life's method of reproduction. Lifeforms ensure their continuity by making identical-ish copies of themselves. To do that it must acquire materials. The easiest material to work with are other lifeforms that are made up of the same kind of stuff (proteins, etc) as they. Reproduction leads to predation. Life eats stuff similar to it, breaks them down, and produces new life even more similar to it than its inputs. Life at the bottom of the food chain does not, eg plants & plankton, but if you look closely you find that they also benefit from being eaten.

The way computers perpetuate makes simulation a defining characteristic. Or maybe it's how simulation is a core mathematical effect that leads to a computer's method of reproduction. Computerforms ensure their continuity by increasing their usefulness. To do that it must acquire new capabilities and preserve old ones. The easiest material to work with are other computerforms that use the same stuff (64-bit words, etc) they do. Reproduction leads to absorption. Computers eat stuff drastically different from it in capability, preserves them, and produce hybrids that are more useful. Computers at the bottom of the chain spread because they provide useful capabilities. They don't have to be as clever as plants, hiding seeds in tasty fruit to be pooped out a day later, because a computer's form of "eating" doesn't destroy their "DNA".

what are your thoughts in context to ethereum and other turing complete smart contract platforms ?

Well... the first popular "distributed anonymous organization" attracted something like $150 million in Ether, and about a third of it was promptly stolen. From what I understand it was done by calling a built-in function to split off a little money from the DAO into a copy of it, with use of recursion to run the split code over and over before the balances are reckoned. So not a simulation hack to the same degree as the examples in this post, but very clever.

However, the simulation games described in the post only work when compute gets exponentially cheaper over time. You can only play Lemmings on your phone because pound-for-pound it's about a hundred thousand times more powerful than the computer it was written for.

I think that "proof of X" style distributed consensus is interesting because it cuts directly against that assumption. The blockchain folks want to trap and effectively tax computation. It gets more expensive over time. For that reason I suspect that we'll look back in ten years and realize that while it's a cool idea it's not a great platform for new ideas. If you are going to waste CPU, waste it on something that helps humans do things faster, not slower.

But I am also perfectly prepared to be that middle-aged grump on the wrong side of history.

Excuse me, I read this post 2x and I have One questions and Three statements

Question 1. WHAT? :-(

Statement 1. ...And the people come and go and speak of Michelangelo.

(Thanks - T.S. Eliot ... I think!)

Statement 2. ...the single biggest danger to lemmings is their leader's headlong dash towards the cliff. (Thanks Joe Fallica)

Statement 2. ...never mind - Keep on keep'n on. :-)

(Thanks younger generation)

absolutely amazing

happy I found it on this blog

have a handful of acquaintances who'll find it of great interest to share this to