The Leaning Tower of Morality

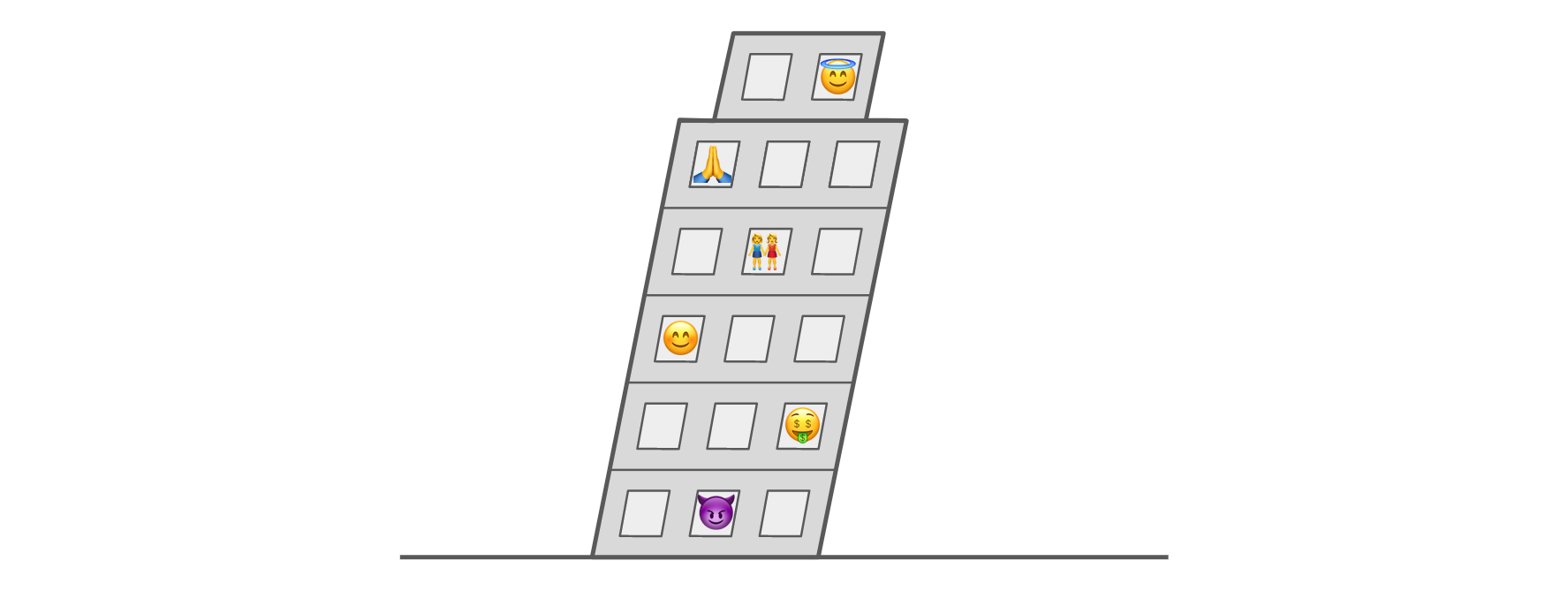

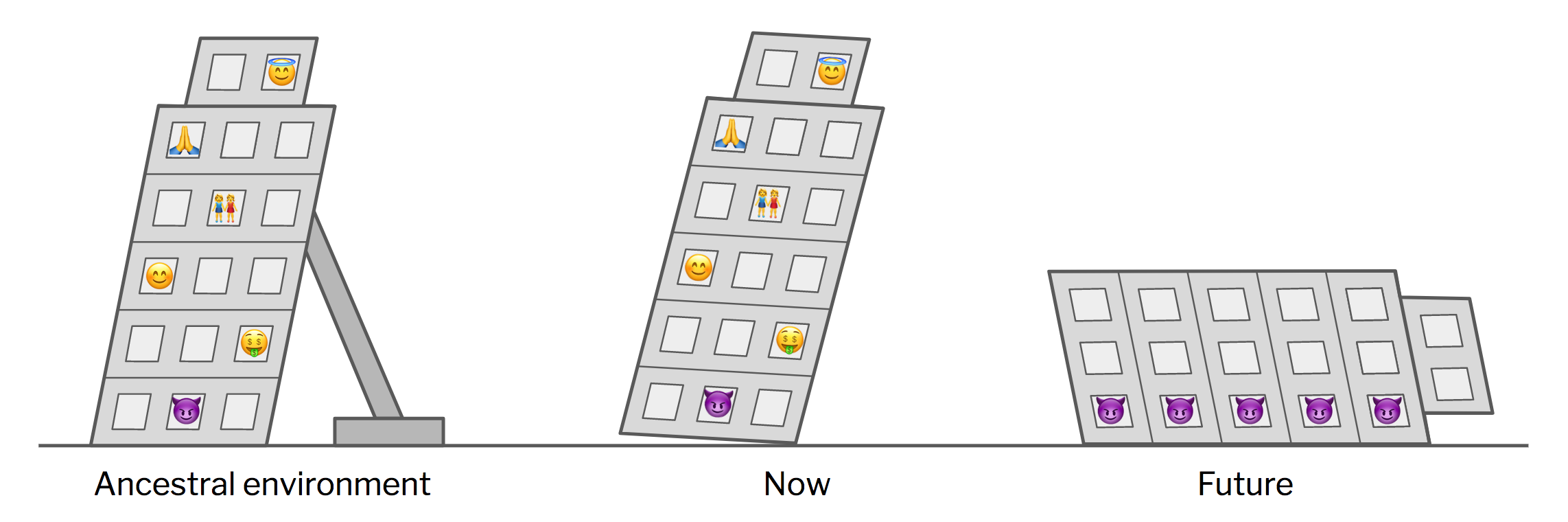

Don’t hate the player, hate the game. — Ice-T

Game theory is asleep, cooperate for no reason. — Deity of Religion

There’s an image that’s taken root in my mind recently that I can’t seem to shake. I picture humanity living in a large, rickety tower, tilting at a precarious angle to the ground — like so:

The tower represents our capacity for moral behavior. Lower levels are more base; higher levels, more virtuous. We don’t need an exact floorplan, but here’s the kind of thing I’m imagining:

- Ground floor: Perfect zero-sum selfishness. Aggression and exploitation. The war of all against all.

- Middle floors: Various flavors of mutualism. “I’ll help you if you help me.” Reciprocity. Tit for tat.

- Higher floors: Empathy and compassion. Turning the other cheek. True virtue (not just signaling). A tendency to cooperate in one-shot prisoner’s dilemmas.

- Penthouse: Perfect self-sacrificing altruism. A willingness to give time, energy, money, or even one’s life to help a stranger for nothing in return.

Here’s the question I want to explore today: How does this structure remain standing? On what ultimate explanatory principles do our moral instincts rest?

Let’s take a look at three possible answers.

God

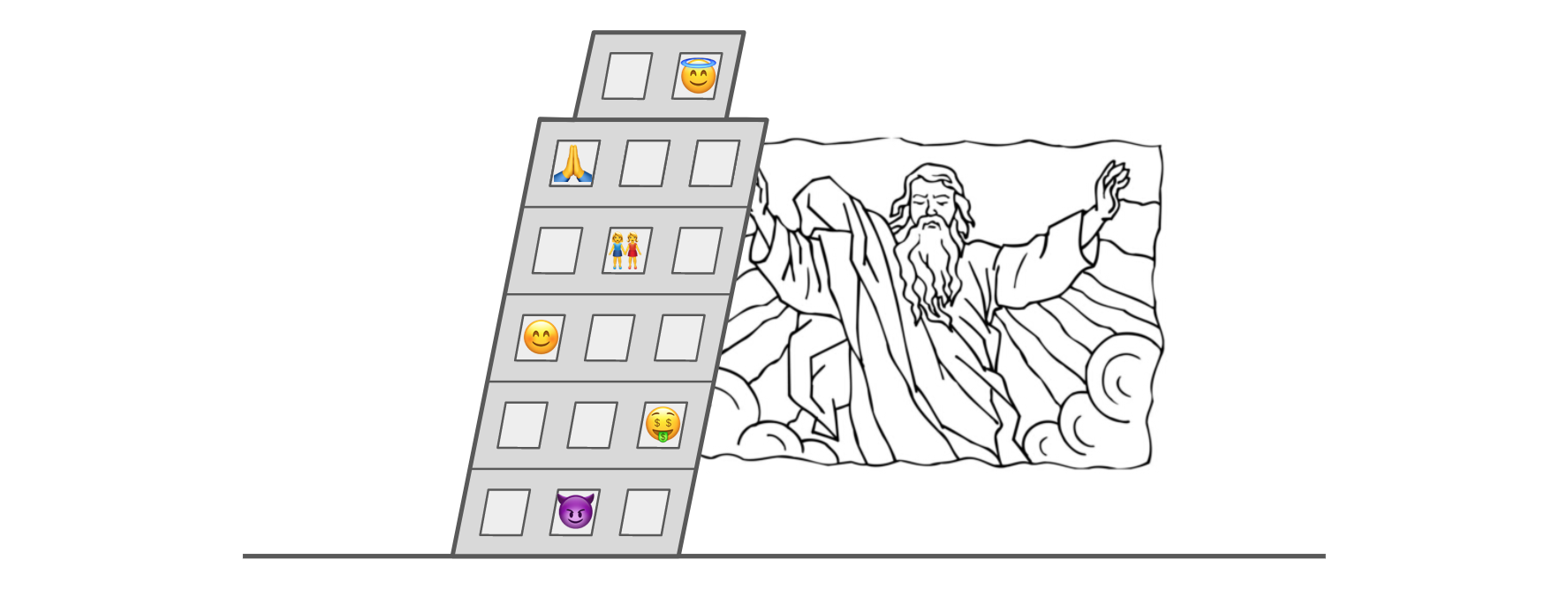

One common answer is that our moral instincts are propped up by God, the great cosmic buttress:

In the Christian worldview, it’s God’s love that induces us to climb higher. By promising eternal rewards (or threatening eternal punishment), God effectively subsidizes moral behavior, thereby stabilizing the higher floors. If He one day decided to forsake us, the tower would collapse and we’d fall to a much lower, more savage state.¹

Now, I don’t take this explanation particularly seriously. It strikes me, if you’ll pardon the expression, as a deus ex machina — a magical placeholder solution to what is otherwise a fascinating and important problem.² I just wanted to give a feel for the kind of thing that fits as an answer here.

Let’s now turn to the natural world, where two more interesting answers await.

Individual selection

A second answer to our question — what supports the tower of morality? — is natural selection. Specifically, individual selection. This is the Darwinian mechanism we all learned in high school: good old survival (and reproduction) of the fittest. The idea is that some individuals have more useful traits than others, and thereby live longer and pass more of their genes along to the next generation.

So if morality evolved via individual selection, it means that our ancestors who behaved morally outcompeted their rivals who didn’t; it was to their individual advantage to help others. This puts morality on the same foundation as our fear of heights, our taste for fatty foods, and our sex drive — namely, the logic of (genetic) self-interest.

As explanatory principles go, self-interest is solid. Certainly it’s capable of the kind of theoretical heavy-lifting needed to explain most features of the biological world. Metaphorically speaking, it means there’s nothing funky propping up the tower — just the ordinary forces of static friction and solids pushing back against solids:

The problem with individual selection is that it has trouble explaining precisely the traits we’re investigating today: namely, those that involve true self-sacrifice. If someone behaves altruistically, he’s giving up some of his own resources — his own reproductive EV — in order to help others. In the game of individual selection, this is (by definition) a losing move. To take an extreme example, someone might martyr himself on a grenade to save the lives of innocent bystanders — in which case he’s definitely not passing any more of his altruistic genes along. In this way, when individuals compete against each other, altruism inevitably gets weeded out of the gene pool:

I certainly don’t mean to suggest that individual selection is incapable of explaining morality. It’s just that any path from self-interest to moral behavior is going to be convoluted. Moreover, whatever explanation we come up with will necessarily entail two unpalatable conclusions — two “bitter pills” we would have to swallow if we want to ascribe our moral instincts to individual selection:

- Bitter pill #1: Accepting that there’s no instinct for true altruism. Individual selection simply can’t evolve a creature that doesn’t optimize for its own bottom line; self-interest is non-negotiable. In the language of our tower metaphor, this means accepting that the penthouse doesn’t exist.

- Bitter pill #2: Accepting that even the highest floors, just below the missing penthouse, are manifestations of self-interest. In other words, every instinct for empathy, compassion, charity, and virtue, to the extent that it’s inborn, evolved because it benefitted our ancestors who expressed it.

Group selection

The third answer to the question of what “props up” our moral instincts is group selection:

Group selection — often discussed as multilevel selection — is another mechanism of Darwinian (genetic) evolution. The idea is that we have to look at competition among groups in addition to competition among individuals. This adds just enough of a twist to make things interesting.

Note that group selection, as biologists use the term, needs to be sharply distinguished from cultural selection — though the two are often conflated. Group selection is a genetic process whereby group competition causes some genes to become more or less frequent within a population. Cultural selection, in contrast, is a memetic process whereby group competition drives cultural change. (For example, one group might copy a practice from a more successful group.) There are many fascinating things to say about cultural selection, but in this post we are entirely concerned with group selection and its effects on human DNA.

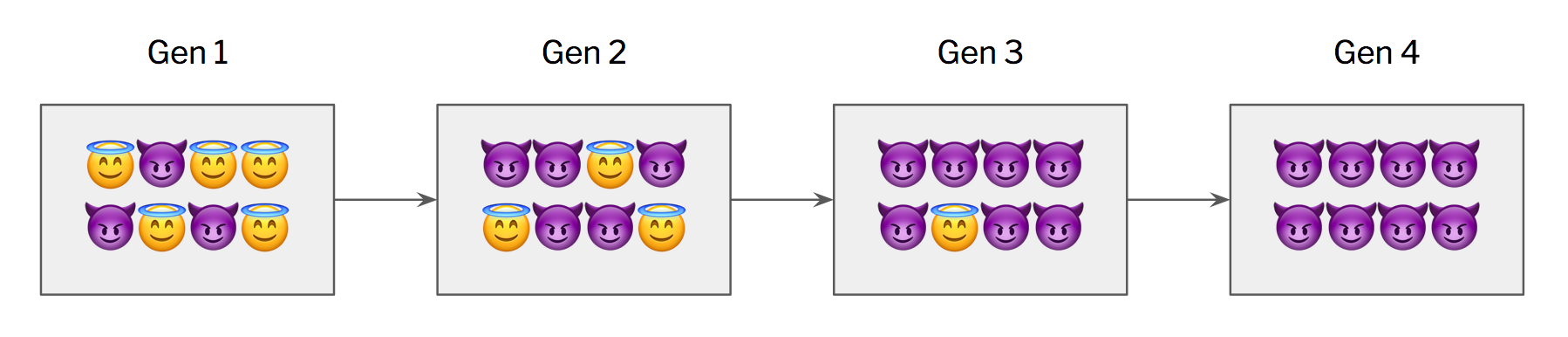

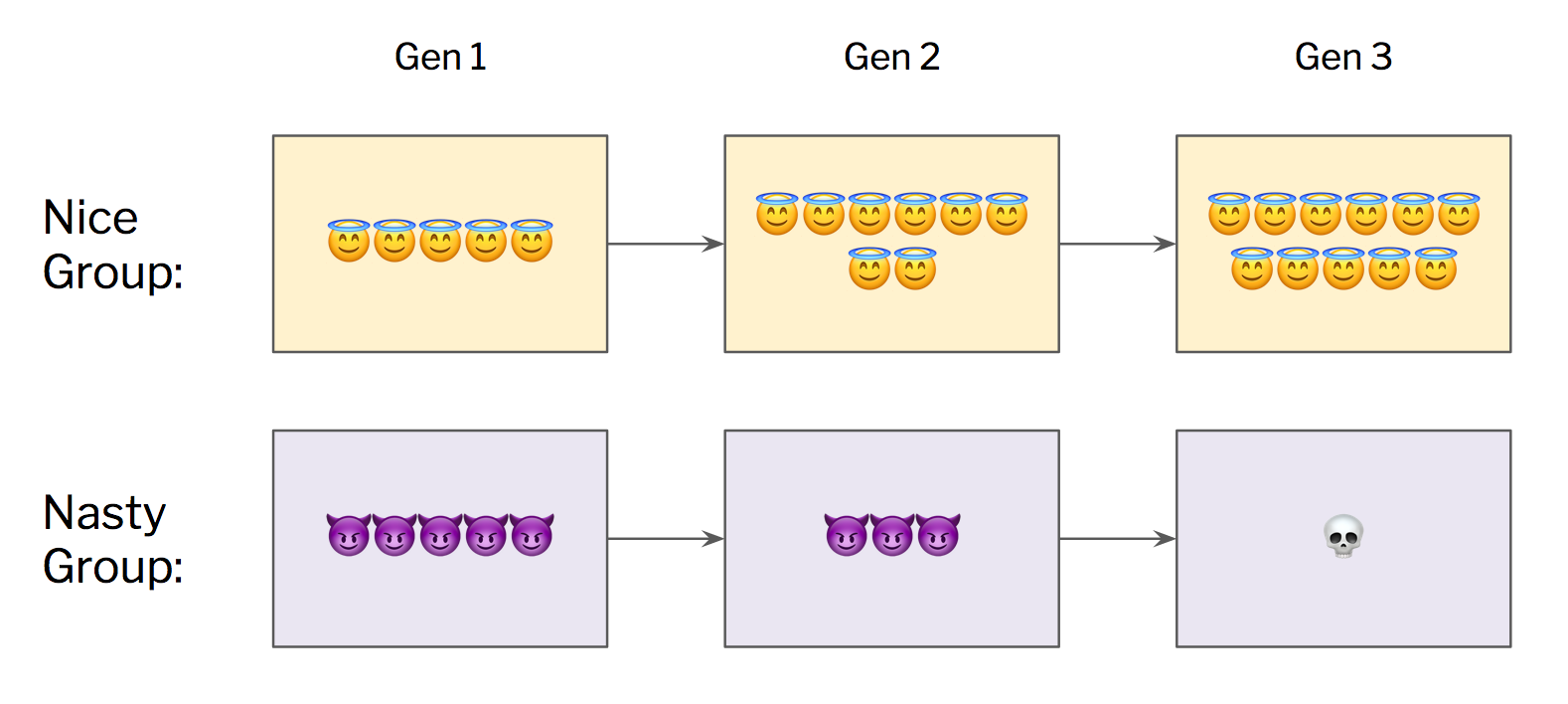

To illustrate group selection, consider a simplified model with just two types of people: saints (indiscriminate altruists) and sociopaths (ruthless exploiters). Further, assume a person's identity is fixed at birth and determined by a single gene. Now, within the context of a single group, saints will always lose out to sociopaths. An entire group of saints, however, will tend to outcompete a group of sociopaths — because the saints will cooperate while the sociopaths are cutting each other down. And thus nicer groups will grow, flourish, and make more babies, while nasty groups wither away. This is how we might, plausibly, evolve altruism out of “nature red in tooth and claw.”

Sounds great, right? This seems to give us exactly what we want: instincts to help others (well, fellow group members at least), self-interest be damned. In practice, however, there’s almost never such a pure matchup: 100% Saints vs. 100% Sociopaths. Everything is always more mixed, meaning that group selection and individual selection operate simultaneously. And, unfortunately, individual selection tends to work much faster and exert stronger evolutionary pressures than group selection, quickly eroding whatever altruism might otherwise be able to evolve.

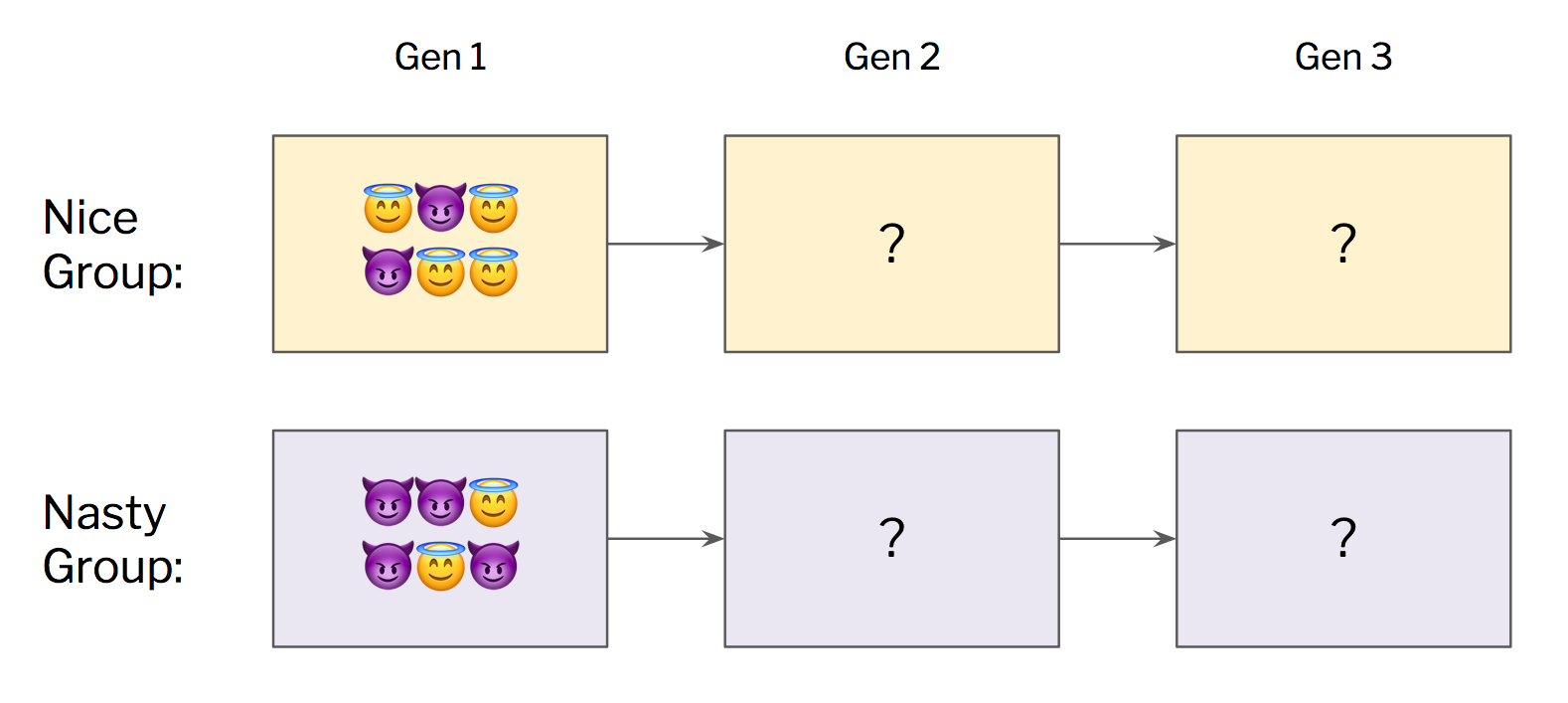

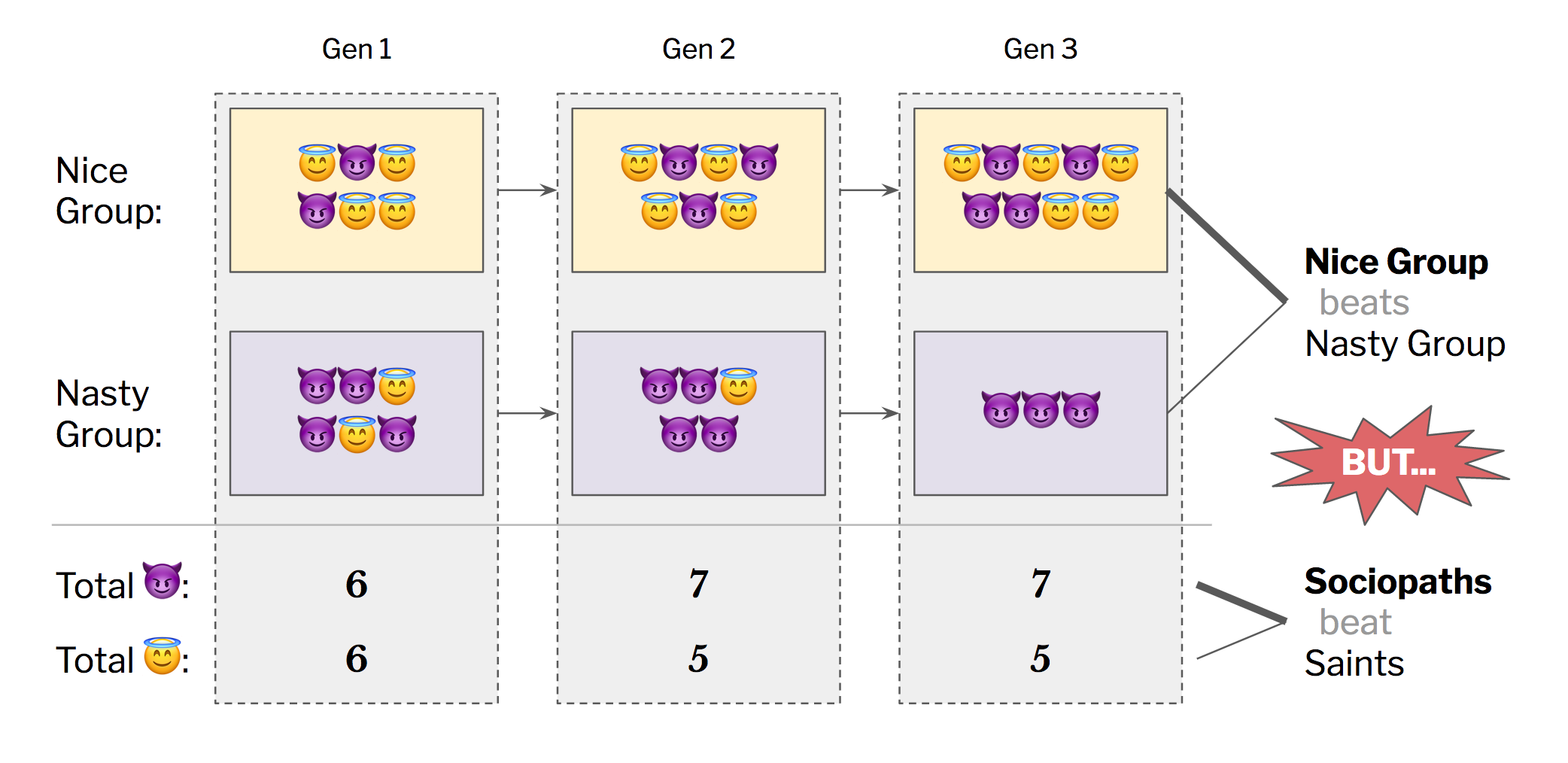

Let’s play it out. A common group-vs.-group matchup might look like this:

On a group level, we can reasonably expect the Nice Group to fare better, since it has a higher proportion of saints. But within each group, saints will lose to sociopaths. So the proportion of saints in the overall population will typically fall over time:

The only way to get group selection to work out, mathematically, is under very specific conditions. (1) Groups have to be fairly isolated from each other, enough that sociopaths can’t jump freely from group to group. And (2) they need enough time in isolation to allow group-level advantages to produce demographic gains. However, (3) the groups also need to come together periodically to remix their members. This all hinges on Simpson’s paradox, and you can read more about it here and here. But suffice it to say that the fussiness of these models is a real drawback when trying to use group selection to explain altruism.
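To make the Simpson's paradox point concrete, here is a minimal sketch of the saints-and-sociopaths model in Python. The fitness rule and every number in it (`base`, `benefit`, `cost`, the 80/20 and 20/80 starting groups) are illustrative assumptions of mine, not values from the models linked above. Treat it as a toy, not as a claim about real populations.

```python
def next_generation(saints, sociopaths, base=1.0, benefit=2.0, cost=0.2):
    """One round of reproduction inside a single isolated group.

    Assumed toy rule: everyone gets `base` offspring plus `benefit` times the
    group's saint fraction; saints alone pay `cost` for providing that benefit.
    """
    saint_fraction = saints / (saints + sociopaths)
    shared = base + benefit * saint_fraction
    return saints * (shared - cost), sociopaths * shared


def saint_share(groups):
    """Fraction of saints across all the given groups pooled together."""
    total_saints = sum(s for s, _ in groups)
    total_people = sum(s + p for s, p in groups)
    return total_saints / total_people


def run_isolated(groups, generations):
    """Let each group reproduce on its own, with no remixing, for many rounds."""
    for _ in range(generations):
        groups = [next_generation(s, p) for s, p in groups]
    return groups


# Hypothetical starting point: a Nice Group and a Nasty Group of 100 people each.
start = [(80.0, 20.0), (20.0, 80.0)]
after_one = run_isolated(start, 1)
after_fifty = run_isolated(start, 50)

for label, groups in [("start", start),
                      ("1 generation", after_one),
                      ("50 generations", after_fifty)]:
    per_group = [round(saint_share([g]), 3) for g in groups]
    print(label, "per-group:", per_group, "overall:", round(saint_share(groups), 3))
```

Under these assumed numbers, one generation is enough to show the paradox: the saint share falls inside both groups, yet rises overall (from 0.5 to roughly 0.57), because the Nice Group grows so much faster. Leave the groups isolated for 50 generations, though, and within-group erosion wins: the overall saint share collapses. That is why condition (3), periodic remixing, carries so much weight.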

A counterintuitive dilemma

OK, let’s regroup.

So far we’ve discussed two materialist hypotheses for the origin of human morality. Individual selection is robust, but requires us to abandon the idea of altruism and reconceptualize morality as a form of self-interest. In contrast, group selection gives us perfect (group-oriented) altruism, but requires a very special kind of environment in which to operate.

Now, Q: Which hypothesis should we root for?

I realize this is a strange question to ask. Emotional precommitments are a big scientific no-no, because Nature doesn’t care what we want to be true. (Channeling Feynman, the only legitimate scientific emotion is curiosity.) But I’d like to apply for an exception in this case, because interrogating our (irrational) desires will, I think, shed light on the nature of these two explanations.

So, please, indulge me: Which hypothesis should we root for?

I suspect many of us intuitively root for group selection. When we witness an act of true altruism, true self-sacrifice, we celebrate it with every fiber of our beings — and rightly so. We want true altruism to exist. And we want it not to be a fluke. Group selection gives us that. Moreover, it’s depressing to think that morality might be an outgrowth of self-interest. It would mean that, behind every virtuous act and noble impulse, our brains are doing some kind of calculation which implies that the deed in question is likely to benefit us in some way. And that’s not the kind of morality we want. So isn’t it right and good and proper to hope our species is better than that?

In this case, no. Such hope is intuitively appealing, but dangerously misplaced.

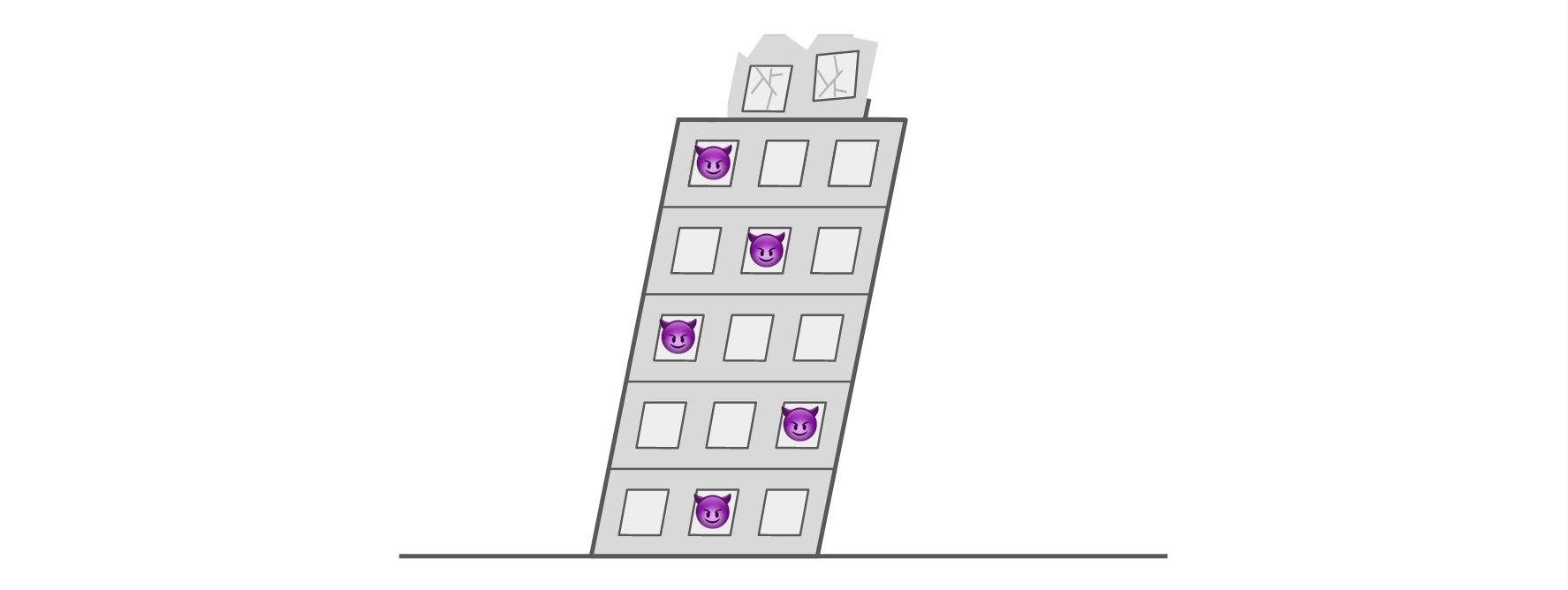

The problem with group selection — and why it’s definitely not worth rooting for — comes back to how particular it is. As we discussed, group selection only works in a very specific type of environment, one in which groups are isolated from each other for generations. And yes, it’s possible that our ancestors actually lived in those conditions. But — here’s the important part — our modern environment isn’t conducive to group selection. Most people today live in big heterogeneous populations of more or less freely mixing (and freely mating) individuals. So whatever group-level processes were selecting for good behavior in the past are no longer operating today.

When a trait was once adaptive, but no longer is, we call it vestigial. And if morality derives from group selection, it must be a vestigial instinct. A holdover from our evolutionary past — like a dinosaur after the asteroid impact, somehow still alive but soon to be extinct.

In our tower metaphor, it’s as if a load-bearing support structure had been used to construct the tower, but was recently pulled out from under us. And this, in turn, implies a grim future:

Let me put this in even stronger (and more discouraging) terms. If group selection is how we arrived at our moral instincts, it means we’re now living in a world where bad people will outcompete good people. Where virtue will be punished and evil rewarded. Where sociopaths are destined to win — or at least have the upper hand for the foreseeable future.

I don’t know about you, but I have a pretty clear (if unscientific) preference for not living in such a world.

Quick scenic detour

There’s at least one other place in science where well-meaning intuition misleads us into rooting for disaster.

Suppose tomorrow we found evidence of single-celled organisms on Mars. At first blush, this seems like something to celebrate. We’re not alone! The universe isn’t as cold and dead as we feared! Unfortunately, such a discovery would be among the worst pieces of news we could ever learn.

To understand why, consider Enrico Fermi’s puzzle: the strange fact that we seem to be the only intelligent (technological) species in our galaxy, despite billions of years and billions of planets on which another one could have evolved. In very broad terms, there are two ways to resolve the puzzle: Either (1) the universe rarely makes an intelligent species, or (2) the universe makes a lot of intelligent species, but bad things inevitably happen to them (e.g., they destroy themselves with their own technology). When we put our choices this way, it’s pretty clear we should root for resolution #1. (Especially given that we already exist!) Unfortunately, every scrap of evidence we find of life on other planets makes #2 more likely to be the case. Even the existence of primitive life raises the odds that the universe can produce intelligence. And if we happen to find life on the very planet next door, it would imply that the universe is teeming with the potential to make other species like ours, shifting a lot of probability mass to #2 — which doesn’t bode well for our future. (I’ve simplified this a bit. Nick Bostrom has more.)
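The "shifting probability mass" step is just Bayes' rule, and a small Python sketch may make it easier to see. Everything numeric here is an assumption chosen for illustration: the 50/50 prior and the two likelihoods (how probable independent Martian life would be under each resolution) are made up, and `posterior_h2` is a hypothetical helper name.

```python
def posterior_h2(prior_h2, likelihood_h1, likelihood_h2):
    """Posterior probability of resolution #2 after seeing the evidence.

    H1: the universe rarely makes an intelligent species.
    H2: it makes many, but bad things inevitably happen to them.
    The likelihoods are P(evidence | H1) and P(evidence | H2).
    """
    prior_h1 = 1.0 - prior_h2
    evidence = prior_h1 * likelihood_h1 + prior_h2 * likelihood_h2
    return prior_h2 * likelihood_h2 / evidence


# Assumed inputs: start undecided, and suppose independent life next door is
# ten times more likely if the universe produces life (and minds) readily.
prior = 0.5
updated = posterior_h2(prior, likelihood_h1=0.05, likelihood_h2=0.5)
print("P(H2) before:", prior)
print("P(H2) after finding Martian life:", round(updated, 3))
```

The exact output depends entirely on the assumed inputs. The only robust point is directional: as long as the discovery is more likely under #2 than under #1, finding it moves belief toward #2.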

Happily, as with individual selection and group selection, Nature doesn’t care if we root for the “wrong” hypothesis. The question is already settled, out there in objective reality, just waiting for us to find it.

Back to morality

OK, time to come clean.

I don’t believe in group selection. Specifically, I don’t think our ancestors actually lived under the conditions that would allow group selection to operate — or, if they did, I don’t think the selection pressures would have been nearly strong enough to evolve significant traits. This isn’t just my opinion; near as I can tell, it seems to be the scientific consensus.³ This textbook chapter provides a good (and readable) overview. Steven Pinker also weighs in with characteristic clarity.

Earlier I described two “bitter pills” a person should be prepared to swallow in order to accept individual selection as the source of our moral instincts. Let me choke them down now:

Bitter pill #1: There’s no instinct for true altruism.

Sure, I’ll accept this.

Of course there are instances of perfect self-sacrificial altruism: people do occasionally fall on their grenades, undertake suicide missions, risk their own lives to save a stranger’s, etc. But there’s an important sense in which we should analyze these as mistakes or accidents, rather than deliberate (strategic) behavior — at least from the perspective of the genes that fashioned our brains.

More precisely, perhaps, we might say that self-sacrifice is an “unintended, low-probability side-effect” of instincts that otherwise serve us quite well. Previously I’ve described this as hill-climbing a volcano. Our instincts tell us to climb “up” the fitness landscape, and most of the time, that’s where we go. Great! But occasionally, we try to go “up” and plummet to the bottom of a crater. ¯\_(ツ)_/¯. But what are you gonna do? You can’t just sit and play it safe at the bottom of the hill while your rivals have all the fun.

Note that this doesn’t mean we shouldn’t celebrate such acts of selfless heroism. We definitely should! In fact, we must, because the very act of celebrating good behavior is what makes it more common. We just have to recognize that the behaviors we’re celebrating may not be game-theoretically sustainable. If good people sacrifice too much, the wicked will inherit the earth.

Bitter pill #2: Most forms of moral behavior are rooted, ultimately, in self-interest.

Sure, I’ll swallow this too, although it’s a bit harder to wash down.

What this means is that empathy, compassion, charity, etc., were all winning strategies for our ancestors. And even though our modern environment is different, similar incentives probably exist today. Thus our brains must calculate when potential actions — including moral actions — are likely to pay off. These calculations are often unconscious and heuristic, of course. But the point is, even when we’re “doing the right thing,” we’re typically doing the right thing for ourselves.

It might help to sketch, very briefly, what this kind of self-interested moral calculus looks like. I don’t have all the mechanics worked out, but I know one important factor is reputation. It’s arguably the lynchpin of cooperation outside the family. When other people know you as someone who consistently behaves well, they’re more likely to trust you, team up with you, help you out, etc., etc. In other words, they reward you. And knowing this, we’re all eager to enhance our reputations by a variety of means — e.g., by helping others. In this way, reputation is the alchemical agent that transmutes near-term self-sacrifice into longer-term self-interest.

But — I’ve heard this objection many times — if reputation is so important, why do people often behave well even when nobody’s watching? Doesn’t that smell suspiciously like altruism?

One possible answer is that there was less privacy in the ancestral environment than we have today, so we’re wired to behave as if we’re being watched all the time, even when we aren’t. I think there’s some truth to this — specifically, the EEA was a less private place — but mostly it seems like an excuse to reject the bitter pill. (Also, note that this explanation, like group selection, implies morality is vestigial.⁴)

So why do people behave well even when nobody’s watching? I prefer another answer: that it’s simply a good (self-interested) strategy, because cutting moral corners is risky. If you make a habit of always trying to calculate when an action of yours will or won’t be seen by others, you’ll inevitably slip up. You’ll get caught doing something bad when you thought no one would see it, and the ensuing loss of reputation will offset whatever gains you earned by cutting corners.

It’s not that we never cut corners — of course we do. In fact, we very pointedly use the odds of getting caught in determining whether or when to cheat; why else would transparency be so important? My point is, deciding not to cheat, even when you could probably get away with it, is often a good, safe, EV-maximizing move.
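Here is a back-of-the-envelope version of that EV argument in Python. It assumes the simplest possible model: cheating yields a fixed `gain`, getting caught costs a fixed `reputation_loss`, and behaving well is the zero baseline. The specific numbers are invented for illustration.

```python
def cheat_ev(gain, p_caught, reputation_loss):
    """Expected value of cutting a corner, relative to behaving well (EV 0)."""
    return gain - p_caught * reputation_loss


# Assumed numbers: a small gain, a large reputational penalty, and a cheater
# who believes detection is rarer than it really is.
gain = 1.0
reputation_loss = 100.0
believed_p = 0.005  # what the would-be cheater thinks the odds are
actual_p = 0.02     # what the odds turn out to be

believed = cheat_ev(gain, believed_p, reputation_loss)
actual = cheat_ev(gain, actual_p, reputation_loss)
print("EV as the cheater sees it:", believed)
print("EV at the true odds:", actual)
print("break-even detection probability:", gain / reputation_loss)
```

When the penalty dwarfs the gain, the break-even detection probability is tiny, so even a small error in estimating the odds flips the sign of the bet. On these assumptions, a blanket policy of not cheating gives up little and avoids the estimation problem entirely.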

A strange gospel

Most of what we’ve discussed today pertains to our innate sense of goodness, our moral instincts. To the extent that morality is a learned behavior, all bets are off.⁵ But there’s a good case to be made that at least some of our moral intuitions come prewired (in the form of tendencies, at any rate), so the question of how they arose remains extremely relevant. And as far as I can tell, the answer seems to be individual selection rather than group selection.

Here, then, is the chain of reasoning I want to endorse:

- We have prewired moral instincts (probably).

- They arose via individual selection (probably).

- Therefore: there are conditions familiar to our species in which moral behavior is a winning strategy.

BEING GOOD IS (typically) GOOD FOR YOU! MORAL BEHAVIOR (usually) WINS!

Of course I’m hardly the first person to make this kind of claim. It’s the well-known philosophy of enlightened self-interest, the idea that we can “do well for ourselves by doing good for others.” It’s the philosophy described by Alexis de Tocqueville, preached by Adam Smith, and practiced by Benjamin Franklin. It undergirds Dale Carnegie’s famous self-help book. In the biological literature, it’s known as “indirect reciprocity” or “competitive altruism.” So the basic idea is certainly out there. I just find it strange how little emphasis it gets, relative to what it deserves.

But why, exactly? Why is this not a more common gospel?

I think the main problem is that we’re squeamish about self-interest. To explain something pure, like moral behavior, as derived from something dirty, like self-interest, is to create a sacred/profane circuit violation, which is taboo. It also casts suspicion on the people who advance such explanations. If you say, “I do the right thing because it’s the right thing, period, end of story,” you leave no crack in your facade through which a pesky interlocutor might question your motives. However, if you say, “I do the right thing because it’s usually the right thing for me,” you’re practically inviting unwanted speculation: How can you be sure you’ll do the right thing next time? What if you’re tempted to cheat? Can you really be trusted at all??

Boy these pills are bitter.

But however uncomfortable all of this makes us, we owe it to each other — and to ourselves, collectively — to acknowledge the good news without flinching away. To do otherwise is to defect when we ought to be cooperating. Because this tower of morality we all inhabit isn’t a steel-girded modern skyscraper. It’s a rickety thing, built from old wood and (for all we know) ridden with termites. And while it has managed to stand for millennia, there’s no guarantee it won’t collapse during the next natural disaster.

If morality is generally a dominant strategy, it’s not an obviously dominant strategy, and certainly not in all circumstances. We — and especially those of us who help build systems — need desperately to study the conditions under which good behavior flourishes, so that we might preserve those conditions, and even enhance them.

This is what I’ve been attempting to do in a lot of my writing over the past few years: to reverse-engineer the tower of morality. (Tower of cooperation? Tower of positive-sum games?) I want to understand how, exactly, it manages to remain standing — sustainably, on its own, without external support or wishful subsidies. I’ve looked at the role of prestige, money, sermons, personhood, network effects, and of course reputation. In my upcoming book, I discuss the all-important role of norms and norm enforcement, and touch on the role of religion. Nowhere do I posit untethered instincts that work “for the good of the group.” I attempt to ground everything, as firmly as I can, in the logic of individual advantage. My tentative conclusion is that it really is possible to secure good behavior (if not perfect sainthood) using a structure built entirely on self-interest.

Which brings us back to that quote I started with:

“Game theory is asleep, cooperate for no reason.”

I love this quote because it hints, playfully, at many of the ideas we've just discussed. Taken literally, however, it would be a dangerous fantasy. Game theory never sleeps, and we — the good people of society — can’t just “cooperate for no reason” any more than we can just decide to hover 50 feet off the ground. But we can cooperate, and quite splendidly, so long as we have the right structure for it.

_____

This post was adapted — very loosely — from a conversation with Kevin Kwok.

Further reading:

- Addendum for evolution geeks.

- This post is in some ways a counterpoint to Scott Alexander’s fantastic post Meditations on Moloch — the yang to its yin, perhaps? I’m trying to hold both of these ideas in my head simultaneously, and the tension has been very fruitful. Do yourself a favor and go read Scott’s post (or give it a listen).

- Steven Pinker, The False Allure of Group Selection.

- Books: Supercooperators by Martin Nowak and Roger Highfield. The Biology of Moral Systems by Richard Alexander. And of course The Selfish Gene by Richard Dawkins.

Endnotes:

¹ I hope this isn’t a straw man. I realize there are more sophisticated theologies out there. (One that I’m particularly fond of, especially in this context, is Micah Redding’s Minimum Viable Theology.) But I also think there are plenty of real people who subscribe to something like what I’ve articulated.

² Dan Dennett’s distinction between skyhooks and cranes seems relevant here.

³ But cf. Michael Crichton’s remarks on the perversity of “consensus science.” Separately: Two notable exceptions who continue to advocate for group selection are David Sloan Wilson and E. O. Wilson (no relation). In fact, E. O. Wilson’s remarks on group selection in his 2012 book The Social Conquest of Earth were a major goad for me to write this post.

⁴ Evolutionary explanations that imply morality is vestigial are actually quite common, once you know to look for them. Just as I was finishing this post, for example, I heard one from the great evolutionist Richard Dawkins (relevant transcript here). The most general objection — to this whole class of explanations — is that humans are plastic enough to adapt to changing environments via learning, rather than having to wait for genetic evolution. (Yes, this is an efficient market–type explanation.) So when a new niche for bad behavior opens as a result of environmental change, it’s quickly filled by moral entrepreneurs. And thus, whatever good behavior remains is likely to be an equilibrium strategy rather than a vestigial instinct.

⁵ Or, rather, a different kind of argument kicks in — one in which reinforcement learning takes on a role similar to individual selection. More here.

22 Comments

Kevin, I agree with your most general thoughts, but want to raise some questions about the details.

Let me start with the agreement:

1. I very much agree that we should hope that a great deal of moral practice can be explained by inclusive fitness.

2. Reputation has got to be a very big part of the story, right?

3. We don't fess up to instrumental reasons for being moral, because it opens us up to just the kinds of questions you mentioned.

OK, that said, I want to raise some questions about the details . . .

1. Group selection: I don't think there's anything like a "consensus" against it. Pinker doesn't really address David Sloan (not E.O.) Wilson's multi-level selection theory very well. I would recommend people who read Pinker's piece also look at the replies by D.S. Wilson and David Queller (who, btw, spends most of his time trying to give inclusive fitness explanations). And, if you've got the time, actually dig into some of D.S. Wilson's writings on the topic.

2. I don't share the intuition that pure altruism is a particularly pleasant thing to have. I don't want a world where people feel compelled to take on the role of drone to save the hive. (And I might even go so far as to wonder whether the fact that so many find pure altruism to be a good thing might be some evidence in favor of the idea that group selection did play some role in our past).

3. Along similar lines, my greatest fear regarding group selection is not that it might be a prop that we no longer have, but that it might be a force that drives us to a borg-like collectivist future. Group selection concerns me because I am more of an individualist than a collectivist.

4. Our genes' instrumental reasons aren't necessarily our personal psychological reasons. So we can be genuinely (psychologically) altruistic, even when our genes are being instrumentally rational.

5. In addition to reputation, I would add Robert Frank's suggestion that the moral emotions are costly-to-fake signals, and the coordinated interaction models of Brian Skyrms (in "Passions within Reason" and "Evolution of the Social Contract" respectively). For a full-blooded attempt to give a model of moral discourse that makes use of emotional coordination, it's hard to beat Allan Gibbard's "Wise Choices, Apt Feelings."

I look forward to seeing your book. This has been a major area of study for me for years as well. I really liked your piece on "Personhood", which has a lot to add to the discussion about how we should expect moral discourse to go.

Thus our brains must calculate when potential actions — including moral actions — are likely to pay off.

Ummm... no. There's a difference between knowing the path and walking the path. Evolution gave us emotions/instincts that provoke us to altruism when altruism is likely to pay off (love, affection, friendship) and emotions that inspire us to violence when violence has been the optimal answer (hate, anger, etc.). These are feelings, not calculations. If we were still in our adaptive environment, we'd hardly ever need to reason; following our emotions would almost always give the optimum answer.

In a similar manner, berries that taste good are usually healthy, and berries that taste bad usually aren't. Your taste buds are good at their job, even if your brain doesn't know the difference.

I read once that the stable level of sociopathy in a population is about 3 percent. If the number is lower, people are trusting enough that sociopaths flourish. A higher number makes people suspicious (for excellent reasons), and sociopaths don't thrive.

Remember that altruism isn't always warm and fuzzy. The desire to inflict punishment, even at a personal cost, is part of our makeup, and is necessary to keep sociopaths in check.

Yup, this is what values *are*: a kind of heuristic shortcut towards behavior that works out in community in the long run, which lets everyone align their interests.

In practice, calculating the gambles behind acting with honesty or generosity under uncertainty in everyday circumstances would be exhausting for the organism.

I wouldn't call these "values", rather "emotions" or "instincts". "Values" implies that they are consciously approved and socially lauded. Very few people or societies would claim to value hate or fear, but evolution provided us with both emotions because, more often than not, they improve inclusive fitness.

In other words, I would say that instincts *are* and values *are largely BS*.

Here's my view on feelings / values: https://medium.com/what-to-build/what-are-feelings-d54a741ea134

Great piece as always Kevin!

To add on to this,

I think an integral part that I didn't see mentioned in your piece is that humans are much more likely to like, be kind to, and be altruistic toward those with whom they have more in common, including looks, interests, background, ethnicity, and country of origin.

How would you explain this in the framework you have laid out here?

Wouldn't group selection be a more apt explanation of this phenomenon?

Furthermore, it seems as if Homo sapiens were under heavy selection pressure when competing with other Homo species, and one of the leading theories is that we used myths/religion in order to create cooperation amongst our groups and we were able to coordinate larger groups of people than our ancestral cousins.

Wouldn't this also be suggestive of group selection?

I think there is truth to all 3 of your potential theories, and a mixture of them can explain altruistic behaviour. Not God in the sense of an omnipotent and omnipresent deity, but stories of a religious kind that promote self-sacrifice and collectivism. The more ancient cultures are all more collectivist in nature than the more recent Western culture that is pervasive today. More religious groups had a better chance of survival than the ones who didn't believe and couldn't coordinate.

I believe that in any case, we humans are so adaptable and plastic in our behaviours that our environment plays a crucial role in nurturing certain aspects. In my opinion, the three biggest factors of modernity that have caused altruism to decrease in effectiveness/pervasiveness are the championing of the individual over the group, the short-term transactional nature of relationships in a world of ever-increasing mobility, and the lack of skin in the game afforded by the two previous factors (one could call this last one a meta-factor). When you don't have reciprocal skin in each other's games, there is less incentive to be cooperative. Furthermore, with the internet, one can easily switch peer groups, proceed anonymously, and burn bridges while maintaining one's "success". The problem for the sociopaths is that in the long term, as you have eloquently stated, being good wins out, and the most successful people are those who are kindest and most altruistic, even if it is with their own interests in mind, consciously or subconsciously.

Beyond that, I think that to gain extreme financial success, which is the hallmark of life success in many people's minds as nurtured by the capitalist system, sociopathic tendencies will be rewarded disproportionately. But I think Venkatesh has already spoken enough about this!

Once again,

Great essay!

Great Article! I always love your stuff Kevin.

Seems like you could get around bitter pill #1 (there is no true altruist) by describing it as a common and frequently inevitable mistake. (Not all mistakes get evolved away readily.)

Off the top of my head you could describe it as similar to the Sickle Cell / Malaria mechanism.

One copy of an altruism gene puts you in a high performing, cooperative but still looking out for yourself place. This would need to be game-theory advantageous as described. Two copies and you are a virtue-saint. It doesn't need to be adaptive to exist.
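To make the sickle-cell analogy concrete, here is a minimal sketch of the textbook one-locus, two-allele model with heterozygote advantage. The "altruism allele", the fitness costs `s` and `t`, the starting frequency, and the function names are all illustrative assumptions, not anything measured or claimed in the essay. The point is only that a trait that is costly in double dose can persist at a stable frequency if a single dose is advantageous.

```python
def next_allele_freq(q, s, t):
    """One generation of selection under random mating.

    q: frequency of the hypothetical "altruism" allele a
    s: fitness cost of carrying zero copies (genotype AA)
    t: fitness cost of carrying two copies (genotype aa, the "saint")
    Heterozygotes (Aa) are the reference class with fitness 1.
    """
    p = 1.0 - q
    w_AA, w_Aa, w_aa = 1.0 - s, 1.0, 1.0 - t
    # Mean fitness of the population under Hardy-Weinberg proportions.
    mean_w = p * p * w_AA + 2 * p * q * w_Aa + q * q * w_aa
    # Frequency of allele a among the survivors.
    return (p * q * w_Aa + q * q * w_aa) / mean_w


def equilibrium_freq(s, t, q0=0.01, generations=2000):
    """Iterate the recursion from a rare starting frequency q0."""
    q = q0
    for _ in range(generations):
        q = next_allele_freq(q, s, t)
    return q


# Assumed costs: mild for non-carriers, heavy for double carriers.
s, t = 0.1, 0.4
q_star = equilibrium_freq(s, t)
print(f"simulated equilibrium:  {q_star:.4f}")
print(f"analytic s / (s + t):   {s / (s + t):.4f}")
print(f"share of 'saints' q^2:  {q_star ** 2:.4f}")
```

In this toy model the double-copy "saints" are never favored by selection, yet they keep appearing each generation as a by-product of the heterozygotes doing well.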

You can also go with an "actively encouraged mistakes" pattern. For instance, a mother bird will raise most things placed in her nest as her own. She isn't being altruistic, she is just making a mistake. In a similar way, people are optimized for pretty transparent reasons to be altruistic to kin. Trick them into thinking that everyone is kin, and they will start being altruistic to everyone. (See: father / brother titles of most religions.)

The bulk of the society can still be self-interested, but all trying to "trick" each other into going "true altruist". It's even a pretty good plan for a sociopath to encourage this "tricking", much like a cuckoo. (This would also fit nicely with the Gervais Principle's sociopath and clueless roles.)

Karma and Gnosis, bro.

https://www.youtube.com/watch?v=t6RLWbOSvUw

Re: not cutting corners, it matters that our morality is being judged by agents who will properly upweight the signal. The lower the chance of being observed, the more credit you get for moral behavior. And a single observation of immoral behavior by someone who doesn't expect to be detected outweighs many many observations when they knew you were watching.

Ideally we as observers and enforcers would set the upweighting and social punishment such that immorality doesn't pay (efficient punishment in law & econ).
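The "immorality doesn't pay" condition can be sketched in a few lines. This is a deliberately simplified version of the law-and-economics deterrence idea, assuming a risk-neutral actor who compares the gain from a violation with the expected penalty; the function name and the numbers are made up for illustration.

```python
def min_deterrent_penalty(gain, p_detect):
    """Smallest penalty F such that p_detect * F >= gain.

    gain:     what the violator gets if not caught (assumed known)
    p_detect: probability the violation is observed, in (0, 1]
    """
    if not 0 < p_detect <= 1:
        raise ValueError("p_detect must be in (0, 1]")
    return gain / p_detect


# The rarer the observation, the heavier each observation must weigh.
for p in (1.0, 0.5, 0.25):
    print(f"p_detect={p:.2f} -> penalty >= {min_deterrent_penalty(10, p):.1f}")
```

Read the other way around, the same ratio is the "upweighting" an observer would apply to a single caught violation by someone who did not expect to be watched.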

Both of you would find Boyd's "How to be a Moral Realist" illuminating. He paints a picture of both individual wellbeing and community wellbeing as "homeostatic". And I think Boyd's view gets much closer to what people actually mean by altruistic vs selfish. In common parlance altruistic doesn't mean "not in accord with the fitness function"... that's a meaning imported from genetics. Boyd's working paper on evopsych errors "On Being Oh So Scientific" is also good here.

This is a place where I disagree with Pinker and the other first-gen evo psych people. Multi-level selection lets us see group selection as an aspect of inclusive fitness, rather than depending on unspecified woo, as the earlier group selection theories did. Yes, it does depend on some genetic structure related to social groups, and more importantly, on co-evolved social structures of punishing norm violators, etc.

I'd be interested in seeing a recent quantitative genetic argument on the infeasibility of significant group selection. So far as I know this goes back to hand-waving arguments from the '70s and far too simplistic computer simulations. For example, adding a spatial dimension makes "altruism" possible. Not PC, but today we also have firm evidence that groups can live next to each other for centuries with minimal inter-breeding.

As to the bitterness of evolutionary moral psychology. Yes, this is a thing, but there is a bit of self-own here. In first-gen EP anything that increases inclusive fitness is called "selfish". I see the newspaper headlines now: "Selfish mom jumps in river to save her child when she could have been volunteering in Africa". This is not normal English usage.

I prefer "self interested" to selfish for this reason. Dawkins himself has

Can I suggest a much more fundamental exploration? This is something that I have spent considerable time and effort on, because the game-theory/evolutionary/cultural explanations don't seem even kinda-sorta airtight to me. In the particular case of this essay, what exactly suggests that there is such a thing as a structure to begin with? Not to mention a physically well-designed structure like a building that presumably allows for temporal/generational mobility between its various levels. Why is such a complicated assumption an acceptable starting point, especially given how fundamental and unitary the nature of the inquiry that follows is?

Shouldn’t the mental model of the building (or whatever else it may be) follow as a result of the biological or sociological forces you observe, rather than the other way round? It’s a little bit of a self-fulfilling prophecy in that sense. While the essay does make for thought provoking reading, it makes me wonder why you would presuppose a building with stratified levels as a representation of where we find ourselves as a species. That is a very key, and in fact, a very loaded assumption. Change the building to something else, and the entire synthesis that follows will look very different. Because the metaphor of a building comes pre-loaded with ideas like foundation, structure, stability, crashing, scaffolding, external support etc. I don't think these are irrefutable ideas, or even believable ones, as far as our civilization goes. They might be convenient and comforting, but that's a different matter.

I have a work-in-progress, ugly-cousin version of this essay, and these were questions I have waded through, so I just had to put these out there…I find myself increasingly convinced that the fallacy of such explanations is actually in the very assumption that can be a cogent explanation at all.

*that there can be a cogent explanation at all.

I agree with a lot of what you say here. Intuitively, I think there is something correct about putting "altruistic sainthood" towards the top and "sociopathic selfishness" towards the bottom. There is some sense in which "altruistic" behavior is superior to "sociopathic" behavior. I think the y-axis would be labeled something like, "Likelihood of Contributing to the Sustainability of Civilization and the Non-Destruction of the Biosphere," or something like that. Kevin explores this in the section where he talks about 100% Saint vs 100% Sociopath societies.

I agree though, that the mental model of a building feels off. The reason, I think, aside from the points you mentioned, is that the building mental model implies some kind of permanent ascendancy. The subtle implication is that once someone has reached the top floor, they can stay there, unless they choose to walk down the steps.

I know talk of spirituality is somewhat risky in today's age of pop spirituality, but I think certain Buddhist mandalas, which depict this same progression from "Sociopath" to "Saint" in a circle rather than a vertical structure, are closer to my intuition about the problems of morality. The Buddhists still place the saints at the top and the asuras at the bottom, but represent the progression as a cycle, i.e., there is no possibility of permanent ascendancy: once you reach the top, gravity and the curvature of the structure will lead you to slide back down eventually. Though one type of existence may be relatively better than another, both extremes are part of the same cycle.

(Maybe Kevin senses this too, which is partly why the tower is leaning; aside from representing the basic ricketiness of the whole thing, the line is trying to become a circle.)

The goal is not ascendence, but transcendence. "Buddha-hood" exists beyond the circle, but how can it be explained?

Excellent and thought-provoking essay. Nice use of emojis, by the way.

However, there may be an intractable problem at higher levels of analysis. Given that all individual human behavior is technically "moral" or "immoral" (as derived from and adjudicated by the behavioral heuristic that is actually in charge for each individual... regardless of Group membership(s)), which base-level moral code is to define good behaviors and bad behaviors for a Group of Groups (such as a Culture or a Nation or... a Species)?

For example, the moral code of Group A allows women to have a freedom of speech and rewards those who promote the active voice of women. The moral code of Group B denies women the freedom of speech and rewards those who suppress the voice of women. Which is "good" behavior? How do Groups decide which Group to follow? By what standard can the good be determined?

Popularity is probably not the answer because mobs are not known for their effective governance. Utility is probably not the answer because utility curves are not defined by one solitary point. Age (high or low) is probably not the answer because we have domain-constrained evidence that some old ideas outperform new ideas and some new ideas outperform old ideas. On top of these difficulties, there is our bounded rationality looming in the background with its ever-present lead pipe to smack the kneecaps of our collective cognition.

Must we look beyond the nature of humans for answers? Is there anything viable in the so-called "super-natural" space? It seems that the religious answers have been somewhat pushed to the side for the purpose of the essay. (I am not quibbling with this approach because picking winners among the mutually-exclusive truth claims of major religions is difficult at best.) Is this all we are left with, though? Full circle back to "you do you" and "don't tread on me"?

I really liked your line: "If good people sacrifice too much, the wicked will inherit the earth." I am tempted to judge all actions by curvilinear survival optimization... Altruism is good for you *and me* up to a certain point, and then just you and not me until I am spent. After I am spent, all things being equal, your survivability permanently declines to the same degree that my talent or treasure or time is still necessary in your life. Because the optimization point was ignored, we are both now dead. So, in addition to Minimum Viable Sociopathy, perhaps we should hold and establish a Maximum Viable Altruism that preserves us and our ability to help others in the future.

Interesting essay, but I think something important is missed: the level at which evolution/selection pressures work is not static. Your analysis seems to suggest there must be one Source, from the beginning to the end. Environmental changes lead to selection pressure changes lead to population changes lead to environmental changes. In general, one should ask oneself: at what level is the most important competition happening in this environment? That is where the selective pressure is greatest, for this snapshot.

As an example, today I would argue competition exists mostly at a technological level. If one group has computers and the other doesn't, it really doesn't matter what their genes (as individuals, or a group) or culture look like. Which brings me to your consideration of group selection, in which you specifically focused on genes and static groups: sure, the analysis makes it look like group selection doesn't work. But the world, past a certain date, isn't that static. What if you focused on later stages when groups were dynamic/fluid and discussed cultural selection pressures as well? We, as a society/higher-level organism, can evolve outside of our genes. Our behavior changes, from generation to generation, based partly on new experiences but also on the stories we pass down to our kids. These learnings are likely based partly on individual survival, and partly on group survival (which of course benefits individual survival as well). I am not sure it "has" to be one or the other. And while genes were once the primary means of adaptation, I would hypothesize they are of much less importance today.

Tangentially, when considering groups the analysis also changes if you add in more degrees of freedom between 'altruistic' and 'selfish', and the ability to weed individuals out, I think (something like this: http://ncase.me/trust/)
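For readers who haven't played with the linked simulation, here is a minimal sketch of the kind of repeated game it is built around: an iterated prisoner's dilemma with a few strategies between pure "altruist" and pure "selfish". The payoff values are the conventional illustrative ones (3 for mutual cooperation, 1 for mutual defection, 5 and 0 for exploiting and being exploited) and are an assumption here, as are the strategy and function names; this is not the code behind that site.

```python
# (my move, their move) -> my payoff; "C" = cooperate, "D" = defect.
PAYOFFS = {
    ("C", "C"): 3,
    ("C", "D"): 0,
    ("D", "C"): 5,
    ("D", "D"): 1,
}


def tit_for_tat(opponent_history):
    """Cooperate first, then copy the opponent's previous move."""
    return opponent_history[-1] if opponent_history else "C"


def always_defect(opponent_history):
    return "D"


def always_cooperate(opponent_history):
    return "C"


def play(strategy_a, strategy_b, rounds=10):
    """Return total scores (a, b) after the given number of rounds."""
    moves_a, moves_b = [], []
    score_a = score_b = 0
    for _ in range(rounds):
        # Each strategy sees only the other player's past moves.
        a = strategy_a(moves_b)
        b = strategy_b(moves_a)
        moves_a.append(a)
        moves_b.append(b)
        score_a += PAYOFFS[(a, b)]
        score_b += PAYOFFS[(b, a)]
    return score_a, score_b


for name, opponent in [("tit_for_tat", tit_for_tat),
                       ("always_cooperate", always_cooperate)]:
    print(name, "vs always_defect:", play(opponent, always_defect))
print("tit_for_tat vs tit_for_tat:", play(tit_for_tat, tit_for_tat))
```

Under these assumed payoffs, tit for tat gives up only the first round to a defector, whereas an unconditional cooperator is exploited in every round. That gap is one simple way to see why adding intermediate strategies, and some way of responding to defectors, changes the picture.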

Great article and very important discussion!

Just want to add this as an aside regarding your game-theoretic analysis of hybrid saint-sociopath societies: this Slate Star Codex article elaborates on the same insight beautifully (and, obviously, the Gervais Principle touches on it too).

http://slatestarcodex.com/2014/07/30/meditations-on-moloch/

Looking forward to reading further pieces on this subject.

Nice metaphor! Also I like the terms sociopath and Saint, much cooler than the boring old hawk and dove from game theory.

If you haven't already, I would strongly recommend reading Bruce Schneier's "Liars and Outliers: Enabling the Trust that Society Needs to Thrive", as it's about these very same topics.

What about kin selection?

The desire for morality creates immorality because a concept that "*is*" cannot exist without a corresponding concept of what it "*isn't*". A circle cannot be seen without a background and a background cannot be seen without the circle.

The very act of desiring and grasping for something that is only valuable without asking creates its opposite by asking for that that is only valuable if it does not need to be asked for. Prostitution cannot be love and a surveillance-state cannot be honest.

The actions of the self are in alignment with the actions of others the stronger one identifies with others. What is a fight can become a dance of give and take rather than a struggle to "win". Groups create this dynamic and allow decisions to go beyond individual life-spans for more long-term thinking (maximizing total value) and also by creating a reputation system that allows some degree of voting on possible outcomes. (Without reputation voting, only survival based voting is possible which is much slower)

Yet ironically the desire to create such benefits necessarily requires creation of an out-group. The higher the contrast with an out-group, the more powerful the boundary of the in-group. With more groups comes more competition and the threat to the dissolution of the group and the obliteration of their reputation allocations through their history.

The creation of groups and ideas has one fundamental goal, to create something that is beyond human. Death is a human frailty and so the group must become "stronger" in the face of these threats. The way they get stronger is increasing in strength. But a human group can only increase in human strength and so the godhood of the idea/group is further destroyed.

Groups and ideas become frail because of the desire for control, almost all well-meaning at the beginning. To steer an idea is to become a locus of control but also a point of humanity that necessarily limits immortality (humans being mortal). Blockchains may be the beginning of Homo sapiens-based immortal ideas. They are a stepping stone to the understanding of the true immortal idea of "God" in its various forms. (I as one -> The group as one -> Nation as one -> World as one -> Universe as one)

The most optimistic idea I've had is that truly inspiring people seem to have one thing in common. That they are truly themselves. They are truly themselves despite being necessarily influenced by their environment. Though the environment seems a deterministic trap, by not fighting it and instead flowing with it, they radiate a certain light and are truly themselves despite this trap. And as the individual is defined by the environment, it seems this light exists within the environment as well.

The dead tree in winter is revealed to be the fruit tree in spring. The dead rock in space is revealed to be a life-rock in the future. The tree was alive all along just as the rock was alive all along. Harmony with others is necessarily harmony with the self and all things.

Morality as a concept in itself seems impoverished (as all words are) through this lens as it is a description of a goal, something to be desired by reaching out for something that necessarily creates its shadow. This is in opposition to something inspired which is an energy that causes one to reach inwards for something. The shadow of the self is other and you realize all is one.

Just a few quick thoughts.

First, it is very much worth reckoning with a more modern expression of group selectionist ideas, and with philosophical efforts to think clearly about different meanings of “altruism” in the context of facts presented by evolution. For instance, is a parent’s sacrifice not “really” altruistic because it maximizes inclusive fitness? For all of this I’d recommend a significant addition to the further reading list, Unto Others, by Elliott Sober and David Sloan Wilson ( https://www.amazon.com/Unto-Others-Evolution-Psychology-Unselfish/dp/0674930479 ).

Second, just as an opinion, my impression is that multi-level selection has a bad reputation partly because folks are (still?) over-reacting to the original error of “good of the species” arguments in animal behavior that were in fact unsound because they contradicted the imperatives of individual fitness maximization. Everyone is taught not to fall into that particular error again, and to keep individual selfishness in mind, and that corrective training is so strong that maybe there’s a tendency to misidentify any other kind of possible dynamic, even when it’s rooted in individual fitness, as that original error.

Unfortunately virtue is not inherited. Socrates: What then? Can you name anyone Pericles has made wise, starting with his own sons?