Cloud Viruses in the Invisible Republic

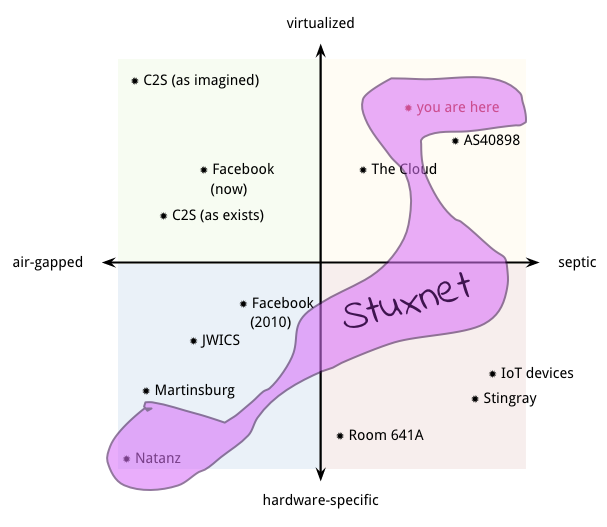

Q: What do an air-gapped private datacenter, a public cloud offering rented servers, and a botnet all have in common?

A: Sooner or later, they’re all wormfood.

That probably requires some explanation. There are technological and environmental pressures that force spook shops and criminals to carry out permanent infiltrations into the computing infrastructure that the world economy depends on. It's possible that the attack/defense cycle will produce an infrastructure that has better "antibodies", and even co-options, but will never rid us of the infection. Meanwhile these pressures will continue to produce truly weird beasts. Can you guess what the pink beast plotted on the 2x2 below is?

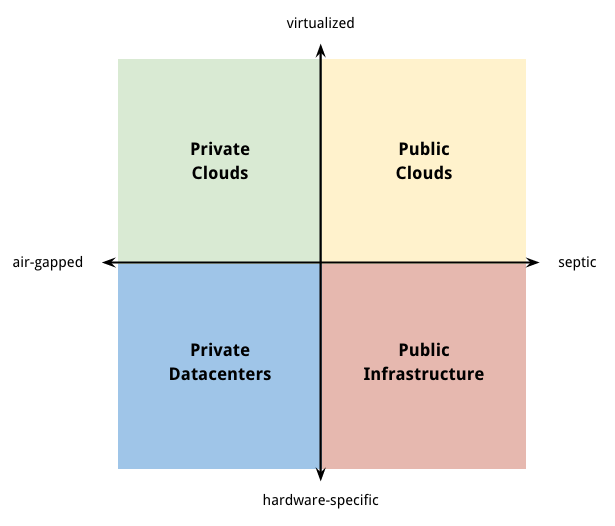

Two Dimensions of Cybersecurity

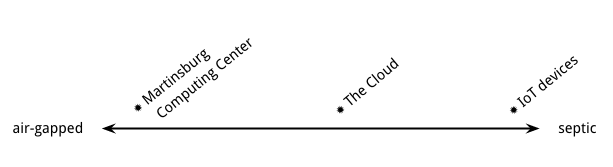

There are two dimensions of security that interact in interesting ways: "sepsis" and "virtualization". Let's take sepsis first. Computing environments can be ranked by how much actors are allowed to manipulate the environment and each other.

On one end you have air-gapped systems. Classified intelligence work (as well as the IRS and other islands of paranoia like petroleum and finance) makes use of computing environments that are physically separated from outside networks [0]. To reach the “high side”, data & software must be written to "dead" storage media and handed off through a literal gap of air. Internal security is enforced by a combination of physical, social, and technological means.

On the other extreme of the spectrum, the environments are septic. Think about all those unpatched home computers and nannycams with default passwords. They are metaphorical cesspits, home to rampant infection & colonization by malicious code.

In the middle are public clouds like Amazon Web Services, Google Cloud Platform, or Microsoft Azure. A cloud datacenter is a gigantic building filled with computing equipment, open to anyone for almost any purpose. All you need is a credit card. For that reason cloud environments tend to have elaborate software protections in place against player-versus-player mischief and attacks against the environment.

Like the reconquista underway in America's downtowns, we're moving out of suburban gated communities of private datacenters and into high-tech hi-rises with thin walls and shared plumbing. This is the most consequential shift in computing security since the internet itself.

Knock three times on the ceiling

Virtualization is the second dimension on our spectrum: how much the actors in a given environment depend on specific hardware or software infrastructure in order to live. That gives us neat quadrants to classify systems into.

The cloud is a giant collection of virtual bubbles. There are economic pressures to give users more performance, to operate closer to the metal, and to colocate those bubbles on shared hardware to save money. This creates many opportunities to break abstractions for fun & profit.

For instance, people often forget that one should be more paranoid when running in a shared / septic environment, and create services with no encryption or authentication. Or they forget their physics. If Alice is running her super secret stuff on CPU 0 of a shared server, it may be possible for Eve to set up on CPU 1 and extract all of Alice’s secrets by observing the electromagnetic “noise” produced by the running of her code. Cloud vulnerabilities are discovered all the damned time.
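
On the first point, here is a minimal sketch of what not forgetting might look like: a service in a shared environment that refuses any message lacking a valid authentication tag. It leans on Python's standard `hmac` and `hashlib` modules. The `sign` and `verify` helpers and the hard-coded key are assumptions made up for illustration, and a real deployment would also want encryption and a sane way to distribute keys.

```python
import hashlib
import hmac

# Assumption: both services already share this key through some safe channel.
SHARED_KEY = b"not-a-real-key-for-illustration-only"


def sign(message: bytes, key: bytes = SHARED_KEY) -> bytes:
    """Return an HMAC-SHA256 tag for the message."""
    return hmac.new(key, message, hashlib.sha256).digest()


def verify(message: bytes, tag: bytes, key: bytes = SHARED_KEY) -> bool:
    """Recompute the tag and compare it with a timing-safe comparison."""
    return hmac.compare_digest(sign(message, key), tag)


request = b"transfer 100 to alice"
tag = sign(request)

print(verify(request, tag))                 # the genuine message
print(verify(b"transfer 100 to eve", tag))  # a tampered message
```

The timing-safe comparison is a small nod to the second point: even the way you check a secret can leak it to a nosy neighbor.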

I’m not saying that virtualized environments are insecure or that the designers are stupid. (I am saying that they are wormfood, but we’ll come to that.) The word “security” is overloaded with marketing and fear. Calling something "secure" or "insecure" assumes that you have considered every possible vector of attack. But security attacks are all about finding buried or wrong assumptions and exploiting them. It's better to break it down into smaller concepts.

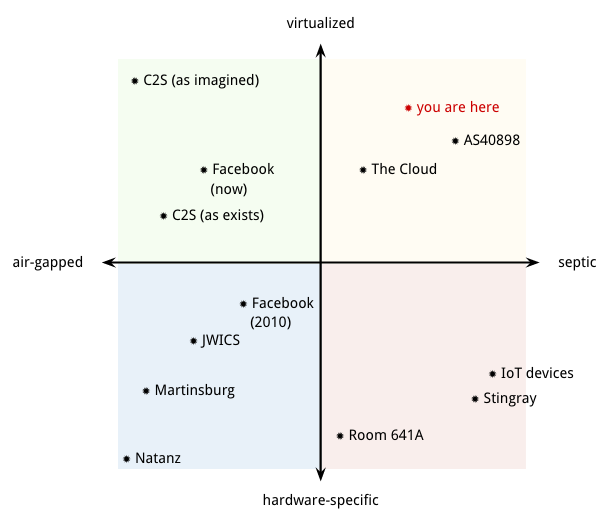

Let’s scatter some examples across the quadrants. We can quibble about specific placements on the axes but that’s not really important.
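
As a rough sketch, the 2x2 can be treated as two scores between 0 and 1, with each environment dropped into a quadrant. Every number below is an illustrative guess rather than a measurement, `Environment` and `quadrant` are hypothetical names, and the label for the septic, platform-specific corner is a placeholder, since that corner goes unnamed here.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Environment:
    name: str
    sepsis: float          # 0.0 = air-gapped, 1.0 = cesspit (illustrative guess)
    virtualization: float  # 0.0 = tied to specific hardware, 1.0 = fully virtual


def quadrant(env: Environment, threshold: float = 0.5) -> str:
    """Map an environment onto one of the four quadrants of the 2x2."""
    septic = env.sepsis >= threshold
    virtual = env.virtualization >= threshold
    if septic and virtual:
        return "public cloud"
    if virtual:
        return "private cloud"
    if septic:
        return "septic bare metal"  # placeholder label, not from the essay
    return "private datacenter"


# Hypothetical placements; quibble with the numbers as you like.
examples = [
    Environment("air-gapped datacenter", sepsis=0.1, virtualization=0.2),
    Environment("virtualized private cloud", sepsis=0.2, virtualization=0.8),
    Environment("rented public cloud server", sepsis=0.6, virtualization=0.9),
    Environment("unpatched nannycam", sepsis=0.95, virtualization=0.1),
]

for env in examples:
    print(f"{env.name}: {quadrant(env)}")
```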

As a private citizen you live in the “public cloud” quadrant. The computing environments you use are generally very septic and increasingly virtualized. The odds are good that one of your devices or accounts has already been hacked. All of my personal info including finances, legal entanglements, family, close friends, medical history, and possibly my fingerprints were stolen in the epic data breach at the Office of Personnel Management. I didn't know until they sent me a letter. The irony is that I gave them that info in order to obtain the clearance I needed to work on data security.

And see that point labeled AS40898? That’s the infamous old address of the Russian Business Network, one of the more brazen cesspits of illegal activity, hacking, and botnets. The operation took a major hit in 2007, in one of the few cases in which the international community gathered together and literally kicked someone off the internet. But they have certainly not gone away.

The private cloud quadrant is equally interesting, but maybe not in the way you think. A few years ago Facebook was a bare-metal shop running in rented space, but they’ve since virtualized large portions of their stack while also moving to their own custom-designed buildings. They’ve hoisted themselves out of the traditional private datacenter quadrant and into the private cloud game, hanging out with the cool kids like banks and the CIA.

Wait, CIA? Yep. They are in that point labeled C2S. That’s the private, sort-of-air-gapped cloud built for them by Amazon. All of the fun of virtualized clouds with all of the security of JWICS. There are actually several private spook clouds. The Director of National Intelligence, who’s nominally in charge of all 17 American intelligence agencies, is trying to get them to standardize on one. No kidding.

In my opinion, the move from private datacenters to private clouds is not revolutionary. The concept of private clouds is a fluffy blue blankie created to reassure banks and spooks. It allows them to play at adopting some of the flexibility of virtualized cloud computing without giving up their fetish for perimeters and ownership.

Keeping the cloud private shields them from the weird stuff you have to learn when moving to a full-on public cloud: how to make sure your components can verify for themselves that they are trustworthy & tamper-proof, that they can operate in the face of outages & shortages, how to be clever about sharing finite resources in a marketplace with a multitude of actors, and so on. Then again, as more agencies and contractors play in the same sandbox, at what point is your "private" cloud no longer private?

There goes the neighborhood

Here's a real revolutionary idea: once you truly internalize how to do large-scale operations in a public cloud, and you are a spook shop, it's only a small step from there to doing large-scale operations in a hostile cloud. Living off the land, so to speak. Not one-off sabotage, not reconnaissance, I mean setting up shop permanently to monitor and influence your enemies. (Like, say, competing agencies...)

When software eats the datacenter for reals it will be weirder and more surprising than we can imagine. The spooks can't ignore the reconquista. Their business is knowing other people's business, and messing with it as circumstances require. They have to go where the action is.

There's plenty of precedent for long-lived chokepoint hacks in the non-virtualized half of the spectrum. Ever wonder why companies like Google and Facebook suddenly moved to encrypt all of their network communications a while back? They made vague noises about Advanced Persistent Threats, long-term efforts by state-funded actors to hack into private systems. Every global power does this, but the scariest infrastructure hack we know about is brought to you by the American government. Room 641A, in a building a few blocks from where I'm sitting, is a nexus for fiberoptic snooping. Says Wikipedia:

> It is fed by fiber optic lines from beam splitters installed in fiber optic trunks carrying Internet backbone traffic and, as analyzed by J. Scott Marcus, a former CTO for GTE and a former adviser to the FCC, has access to all Internet traffic that passes through the building, and therefore "the capability to enable surveillance and analysis of internet content on a massive scale, including both overseas and purely domestic traffic." Former director of the NSA's World Geopolitical and Military Analysis Reporting Group, William Binney, has estimated that 10 to 20 such facilities have been installed throughout the United States.

Note the use of the present tense. Years after its exposure in the press and independent confirmation, Room 641A or places like it around the country likely remain in operation today. Even if the messages that pass through it are encrypted, the metadata is completely open and the encrypted contents can be recorded for cracking at their leisure. In 2008 Congress gave retroactive immunity to the telecom companies involved. This is the world we live in. Sleep tight.

Wormfood

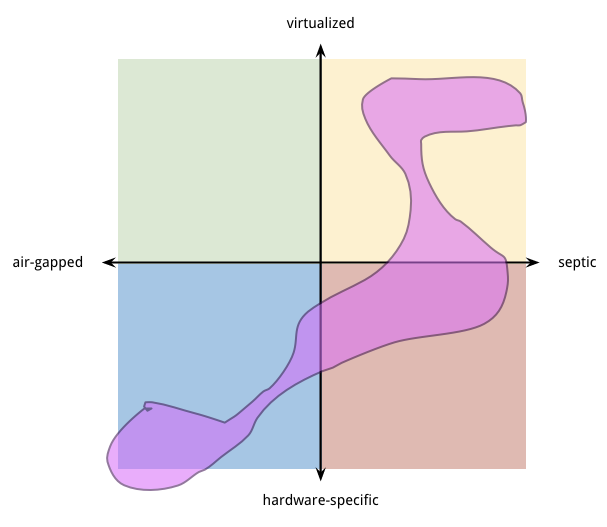

With its fiber splitters and specialized hardware, Room 641A is extremely platform-specific. The world gets even weirder once you throw in virtualization, which, after all, is supposed to make setting up infrastructure easier and cheaper than ever. So far we've been treating these quadrants as separate, and environments as points within them. But what if we break that assumption too?

Consider Stuxnet, the worm discovered in 2010:

- It exploited at least four zero-day vulnerabilities in USB drivers, print spoolers, and other Windows subsystems.

- It spread aggressively by physical USB infection and over internal networks, though it was hobbled to not spread over the open internet.

- It was designed to cross the air-gap and get into specific devices in a specific nuclear facility.

- The initial infections were targeted at companies and people in the supply chain of that facility.

- It used stolen certificate signatures from two apparently innocent hardware companies to pass as legitimate.

- It employed clever stealth techniques like hacking the filesystem in order to hide its existence and faking diagnostic data coming from the centrifuges it targeted.

- Once it had nestled in close to its target, it waited for weeks before carrying out its mission.

- That mission was to overdrive the centrifuges to break them and possibly release the extremely toxic uranium hexafluoride gas they contain.

- While it's tempting to class it as a "platform-specific" hack, there's reason to believe that it's highly configurable, modular, and upgradable from remote command-and-control servers.

And not just good-guy operations, mind. Using a cyber weapon is identical to proliferating it. Over 100,000 copies of this damned thing were scattered to the winds, to be picked up and studied by who knows who. Trying to stop the proliferation of nuclear weapons with a cyber weapon that proliferates itself is like releasing rabid cats to eat the birds you released to eat the insects, etc. This is why it's so hard to formulate sane military policy around "the cyber". What, exactly, is your threat model when every move risks arming enemies and friendlies alike?

The weird rules of this new game kick up endless absurdities. Here's another fun one: the CIA "Vault 7" hacking tools recently revealed by Wikileaks are completely unclassified. Not declassified, but intentionally never classified in the first place. If they had been classified it would have been a federal crime to deploy them on the open internet. And you can't order your employees to break the law, now can you? That would be unethical.

But they are learning from their mistakes. The day will come when the spooks or the bad hombres will clue up and effectively combine all three of the big ideas we've discussed:

- Taking advantage of the flexibility and structural security weaknesses of clouds.

- Setting up shop permanently inside multiple quadrants at once, relying on stealth, peer-to-peer coordination, and the robustness of a well-designed virus.

- Squatting on critical chokepoints to spy, sabotage, and misdirect.

We all live in a yellow subroutine

In late 2002 Brandon Wiley posted a whitepaper about a theoretical "superworm" he called Curious Yellow. CY is designed to take over the internet and every computer connected to it. In its most extreme form the worm could defend itself by making the responses to, information about, and perhaps even the people working against the worm disappear from the internet. It took years of coordination and negotiation to blackhole AS40898. This worm could remove them in an eyeblink, or make sure they stick around. Submariners like to joke that there are only two kinds of boats: subs and targets. In the bleak world of Curious Yellow there are only two kinds of software: worms and wormfood.

It's fascinating to look back at a fifteen-year-old paper and see all the pieces: command-and-control networks, pervasive infection, live code updates, chokepoint squatting, scouring the internet to find a single actor using a specific air-gapped system, and even a real-world example of a semi-criminal network devouring a competitor. It sounds like science fiction. In fact the concept was later used in science fiction [1]. But it's also kind of plausible.

This is the world we live in. Sleep tight.

Notes

[0] For example, government and military maintain Sensitive Compartmented Information Facilities. Sometimes affectionately known as "Squirrel Cage In Fairfax", SCIFs are tightly-locked rooms where radioactive classified information is stored. Congressman Nunes almost certainly learned whatever he learned about surveillance on Trump inside a SCIF.

[1] In 2006 Charles Stross published his sci-fi novel Glasshouse, which features a bit of technomagic called Gates. These gates can disassemble you down to the molecular level, transmit that information to another gate across the galaxy, then reassemble you with a brush-up and medical check on the other side. Mankind lives in an archipelago of artificial satellites placed close to sources of energy and raw material. These islands are connected by gates people step through from world to world. One day the gates get hacked by a virus called Curious Yellow, which proceeds to murder its enemies and erase all memory of them. The plot explores hard questions about identity when you can be copied and edited as you go about your day, and the power wielded by whatever controls the gates. The moral of the story, of course, is that those who live in space houses should never stow clones.

6 Comments

Just saw this tweet... using the cache as a covert channel in the cloud. Quite fascinating. The linked paper title is one of the most ominous ever, "Hello from the Other Side: SSH over Robust Cache Covert Channels in the Cloud"

https://twitter.com/gcouprie/status/847491627455336448

<3

Was a pleasure to get to read this prior to publish.

Hey have you looked at http://urbit.org/ yet? It's solving a lot of if not all of these problems.

"Freedom is an engineering problem" :) Not to worry, Urbit will have its turn on these pages.

Coincidentally, BrickerBot made it into the news, which is described as "malware" by several authors but could also be perceived as a harsh immune response, the vanguard of the T-cells to come.