Fat Thinking and Economies of Variety

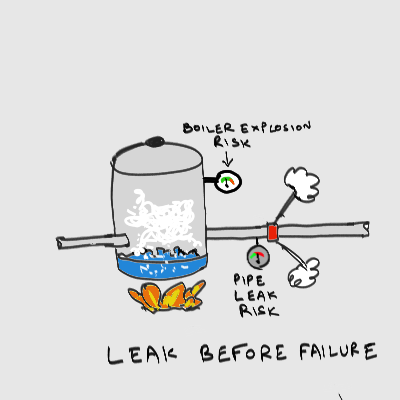

Leak before failure is a fascinating engineering principle, used in the design of things like nuclear power plants. The idea, loosely stated, is that things should fail in easily recoverable non-critical ways (such as leaks) before they fail in catastrophic ways (such as explosions or meltdowns). This means that various components and subsystems are designed with varying margins of safety, so that they fail at different times, under different conditions, in ways that help you prevent bigger disasters using smaller ones.

So for example, if pressure in a pipe gets too high, a valve should fail, and alert you to the fact that something is making pressure rise above the normal range, allowing you to figure it out and fix it before it gets so high that a boiler explosion scenario is triggered. Unlike canary-in-the-coalmine systems or fault monitoring/recovery systems, leak-before-failure systems have failure robustnesses designed organically into operating components, rather than being bolted on in the form of failure management systems.

Leak-before-failure is more than just a clever idea restricted to safety issues. Understood in suitably general terms, it provides an illuminating perspective on how companies scale.

Learning as Inefficiency

If you stop to think about it for a moment, leak-before-failure is a type of intrinsic inefficiency where monitoring and fault-detection systems are extrinsic overheads. A leak-before-failure design implies that some parts of the system are over-designed relative to others, with respect to the nominal operating envelope of the system. In a chain with a clear weakest link, the other links can be thought of as having been over-designed to varying degrees.

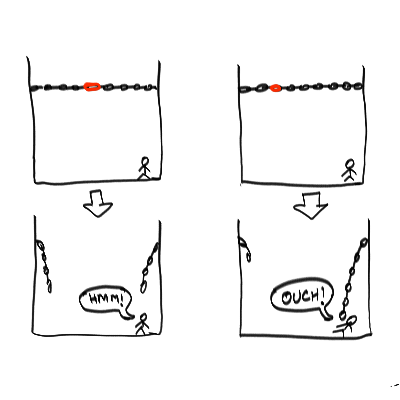

In the simplest case, leak-before-failure is like deliberately designing a chain with a calibrated amount of non-uniformity in the links, to control where the weakest link lies,. You can imagine, for instance, a chain with one link being structurally weaker than the rest, so it is the first to break under tensile stress (possibly in a way that decouples the two ends of the chain in a safe way as illustrated below).

You can imagine, in the same chain, another link that's structurally strong, but made of a steel alloy that rusts the fastest, so if there's a high humidity period, it breaks first. In the two cases, you can investigate the unusual load pattern, or possible failure in the HVAC (heating, ventilation and air conditioning) system.

You can imagine, in the same chain, another link that's structurally strong, but made of a steel alloy that rusts the fastest, so if there's a high humidity period, it breaks first. In the two cases, you can investigate the unusual load pattern, or possible failure in the HVAC (heating, ventilation and air conditioning) system.

Failure landscapes designed on the basis of leak-before-failure principles can sometimes do more than detect certain exceptional conditions. They might even prevent higher-risk scenarios by increasing the probability of lower-risk scenarios. One example is what is known as sacrificial protection: using a metal that oxidizes more easily to protect one that oxidizes less easily (magnesium is often used to protect steel pipes if I am remembering my undergrad metallurgy class right).

The opposite of leak-before-failure is another idea in engineering called design optimization, which is based on the exact opposite principle that all parts of a system should fail simultaneously. This is the equivalent of designing a chain with such extraordinarily high uniformity that at a certain stress level, all the links break at once (or what is roughly an equivalent thing, the probability distribution of link failure becomes a uniform distribution, with equal expectation that any link could be the first to break, based on invisible and unmodeled non-uniformities).

Slack and Learning

The inefficiency in a leak-before-failure design can be understood as controlled slack introduced for the purposes of learning and growing through non-catastrophic failure. A way to turn the sharp boundary of the operating regime of an optimized design into a fuzzy, graceful degradation boundary. So leak-before-failure is essentially a formalization and elaboration of the intuition that holding some slack in reserve is necessary for open-ended adaptation and learning. But this slack isn't in the form of reserves of cash, ready for exogeneous "injection" into the right loci. Instead, it is in the form of variation in the levels of over-design in different parts of the system. It is a working reserve, not a waiting reserve.

For those of you who are fans of critical path/theory of constraints methods, you can think of an optimized design as one where the bottleneck is everywhere at once, and every path is a critical path. It is a degenerate state. In the idealized extreme case, the operating regime of the system is a single optimal operating point, with any deviation at all leading to a catastrophic failure of the whole thing.

Mathematically, you get this kind of degeneracy by getting rid of dimensions of design or configuration space you think you don't need. This leads to a state of synchronization in time, and homogeneity in structure and behavior, where you can describe the system with fewer variables. A chain with a uniform type of link needs only one link design description. A chain with non-uniform types of link needs as many varieties as you decide you need. At the extreme end, you get a bunch of unique-snowflake link designs, each of which can fail in somewhat different ways, with each kind of failure teaching you something different. A prototype design thrown together via a process of bricolage in a junkyard is naturally that kind of design, primed for a whole lot of learning.

Leak-before-failure can be understood, in critical-path terms, as moving the bottleneck and critical path to a locus that allows a system to be primed for a particular type of high-value learning. Instead of putting it where you maximize output, utilization, productivity, or any of the other classic "lean" measures of performance.

Or to put it another way, leak-before-failure is about figuring out where to put the fat. Or to put it yet another way, it's about figuring out how to allocate the antifragility budget. Or to put it a third way, it's about designing systems with unique snowflake building blocks. Or to put it a fourth way, it is to swap the sacred and profane values of industrial mass manufacturing. Or to put it a fifth way, it's about designing for a bigger envelope of known and unknown contingencies around the nominal operating regime.

Or to put it in a sixth way, and my favorite way, it's about designing for economies of variety. Learning in open-ended ways gets cheaper with increasing (and nominally unnecessary) diversity, variability and uniqueness in system design.

Note that you sometimes don't need to explicitly know what kind of failure scenario you're designing for. Introducing even random variations in non-critical components that have identical nominal designs is a way to get there (one example of this is the practice, in data centers, of having multiple generations of hardware, especially hard disks, in the architecture)

The fact that you can think of the core idea in so many different ways should tell you that there is no formula for leak-before-failure thinking: it is a kind of creative-play design game which I call fat thinking. To get to economies of scale and scope, you have to think lean. To get to economies of variety on the other hand, you have to think fat.

The Essence of Fat Thinking

If you're familiar with lean thinking in both manufacturing and software, let me pre-empt a potential confusion: setting up a system for leak-before-failure is not the same as agility, in the sense of recovering quickly from failures or learning the right lessons from failure in a time-bound way, or incorporating market signals quickly into business decisions.

Easy way to keep the two distinct: lean thinking is about smart maneuvering, fat thinking is about smart growth.

Leak-before-failure in the broadest sense, is a way to bias an entire system towards open-ended learning in a particular area, while managing the risk of that failure. It is a type of calibrated, directed chaos-monkeying, that actually sacrifices some leanness for growth learning and insurance purposes. If in addition you are able to distribute your slack to drive potentially high-leverage learning in chosen areas, it is also a way to uncover new strategic advantages.

How so? Lean is really defined by two imperatives, both of which fat thinking violates:- Minimizing the amount of invested capital required to do something (so you need less money locked up in capital assets and inventory)

- Maximizing the rate of return on that invested capital (through, broadly, minimizing downtime, or equivalently, time to recover from failures).

This is why the "learning" by "leaning out" an operation serves as a way to cut costs (which often becomes the main purpose). This should strike you as bizarre, given that our archetypal examples of learning, young children learning through play, are costly money sinks who produce no immediate return on investment at all. Learning in a general sense should increase costs, not decrease them.

The kind of learning that intuitively strikes us as more natural, and closer to children playing, happens in fat regimes. This is where the term trial and error actually justifies itself. In strongly leaned-out systems, there is not actually much room for error.

When you get away from lean regimes, you're running fat: you've deployed more capital than is necessary for what you're doing, and you're deriving a return from it at a lower rate than the maximum possible.

You've deliberately introduced slack into the system, to pay for two things: safety insurance, and open-world learning. The system is likely to fail (and drive learning) where there is the least slack. One way to choose where you learn is to put slack everywhere except where you want to learn.

You've sacrificed some productivity of capital assets to gain some control over the process we commonly know as what doesn't kill you only makes you stronger.

Learn or Die Microeconomics

Here's the thing that separates open-world learning from closed-world: there is no systematic way to control failure-recovery times. To use a brain analogy, closed-world learning is like the closed loops of the brain stem and cerebellum. Open-world learning bubbles up to the open-ended thinking processes of the cerebrum and neocortex. These processes may include completely open-ended research problems, problems you don't know are NP-complete, poetic inspiration, and so forth.

To get killed in an open-world learning attempt is to experience an event or event cascade that causes such catastrophic damage that you don't have the reserve resources to recover at all.

To grow stronger in an open-world learning attempt is to scale along some vector uncovered by the failure, like a hydra growing two heads where one has been cut off. The fruits of open-ended learning are unpredictable. They might range from radical redesign of your whole system, to a pivot that changes the basic idea of what you're supposed to be doing. This is in contrast to the idea of kaizen in lean, where the learning occurs in predictably small steps near the local optimum, rather than in big leaps in directions that are potentially completely random.

(Caution: for a variety of reasons, it is best not to think of fat thinking/economies of variety as a kind of global optimization; that tempts you into one kind of model lock or another)

One way to understand this is to think of open-world learning as being waste-free: An error is just a trial you haven't learned from yet, and there's a good chance that you won't be the one to learn from it at all.

This is a basic mechanism driving markets: one company's unrecoverable error can serve as another company's freebie lesson. In fact, this is the default. The bulk of the value of innovation accrues outside the boundaries of the innovator, through surplus and spillover effects: the locus of failure and the locus of learning are widely separated in effect, spanning multiple entities.

Tim O'Reilly's advice to "create more value than you capture" isn't a prescription for being a good economic citizen. It's a description of operating conditions. If you can even capture enough to just barely survive, you're doing better than most.

Which means fat and lean thinking have macroeconomic consequences beyond a single company.

Learn or Die Macroeconomics

The idea that you can't be sure you'll be the one to learn from your failure is what makes attempting to grow as big as possible, as fast as possible, a very wise idea if you have the stomach for it.

If you grow big, it means your system boundary is bigger, and if other things are set up right, you have a higher chance of being the beneficiary of your own open-ended learning. You can benefit from much wider separations between loci of failure and learning.

Of course, there are other factors that make large size a disadvantage, such as the comforts of monopoly status lowering the incentive to learn at all, so there is a sweet spot where you're big enough to benefit sufficiently from your open-ended learning, but not so big that you have no reason to learn. A classic determinist error is to recognize the advantage of being bigger, while ignoring the cost. This is why I think Thiel's notion of a creative monopoly is somewhere between incomplete and wrong, but I won't go down that bunny-trail in this post.

So what doesn't kill you makes you stronger, and what does kill you makes the broader economy, and society at large, stronger. With the probability of the two outcomes depending on your own size relative to the size of the economy you're embedded in.

And of course, the system boundary for humanity is low earth orbit, so what doesn't kill the planet will make it stronger. Like climate change. Some people think AI risk is also one of these kill-the-planet-or-make-it-stronger learning attempts. I am not so sure.

This means we can also talk about lean and fat thinking across entire economies. An entire economy full of lean-thinking, six-sigma-ing, customer-listening corporations is, as a whole, in a closed-world learning regime with very little spillover and surplus outside boundaries. In such an economy companies live long and prosper, as captives of a rentier, cronyist class. But they produce very little by way of truly novel products and services. So the stability comes at the cost of slow eroding economic dynamism and increasing fragility of the national and global economies they are part of.

An entire economy full of fat-thinking, unique-snowflaking, product-driven corporations is, as a whole, in an open-ended learning regime with very high spillover and surplus outside boundaries. In such an economy, companies live free or die hard, at very high rates, churning rapidly, and produce a great many new products and services, most of which fail. But in so failing, they add economic dynamism and antifragility to the national and global economies they are part of.

Most critically, lean economies have all their growth reflected in the numbers, but are zero-sum overall. Fat economies have trouble accounting for all the open-ended learning accruing in nooks and crannies, which nobody has exploited yet, but are non-zero-sum overall. Most of the world economy today, with the exception of Silicon Valley, is probably in a lean phase.

Visibly perfect bookkeeping implies invisible stagnation. Visibly imperfect bookkeeping implies invisible dynamism. This applies at both microeconomic and macroeconomic levels.

If I knew enough about high finance, I'd turn this idea into some clever commentary on bond yields, stock prices, secular stagnation, and Japan jokes, and make a lot of money by placing genius bets.

Learning through Repetition and Aggregation

Getting back to a single corporation, assuming your leak-before-failure setup has created an open-world learning "story" that hasn't killed you, you will grow stronger in some indeterminate way that counts as a unit of scaling.

So scaling is really a series of weakly controlled (hence with indeterminate outcomes) attempts to create and redirect some slack in the system, sacrificing some productivity to learn a lesson somewhere between close-ended and open-ended, with some slight risk of killing yourself in the process. As you go more open, you'll let the failure determine what you learn rather than some objective like lowering cost.

"Economies" of any sort, as I discussed in Economies of Scale, Economies of Scope, are basically types of learning. How you learn determines how you scale.

Classical economies of scale are the result of learning through repetition in engineering processes, with benefits realized as falling cost curves and increasing yields, once you go from early open-ended learning regimes to close-ended regimes. Assuming you survive the high infant-mortality rate in the early part of the bathtub curve that describes learning over a lifespan.

Economies of scope are the result of learning through aggregation in market coverage processes, with benefits realized as falling systemic transaction costs. Infant mortality on this bathtub curve is failing to learn enough about the market, quickly enough, to get to profitability and enough of a free cash flow to fuel operations.

Both these two kinds of learning through economies are intrinsically close-ended most of the time, outside of birth and death regimes. If you're lucky enough to have had other companies make all the expensive mistakes before you, you can even start in the close-ended part of the curve, and skip the early, high-mortality part of the bathtub curve altogether. This is why "customer driven" companies and imitators survive better: the high-risk learning has already been done by another entity, may it rest in pieces. To the fast-follower the spoils.

These two together are what drive the business cycle. If everybody is making expensive mistakes early in the bathtub curve, you have an incentive to "defect" from the pioneering-innovation economy and start exploiting the learnings of the dead. This leads to a flood of defections, and now everybody is trying to exploit learnings through fast-follower strategies, with diminishing returns. So you can again defect, this time towards innovating by becoming more product-driven.

There is no simple relationship between the proportion of product-driven versus customer-driven activity in an economy and the booms and busts of the market, but I strongly suspect cycling on the one spectrum contributes strongly to the business cycle. Further, I suspect, the cycling will have a phenomenology similar to predator-prey population cycling as described by various models (with customer-driven companies being "predators" and product-driven ones being "prey").

There is a missing piece here though. It is easy to see how you can turn into a fast follower if there are a lot of struggling pioneers around. But how do you turn into a pioneer, when everybody is trying to fast-follow? More importantly, how do you do so in a way that doesn't make you the first lemming?

It is clear that we don't have good answers to this question, which is why companies and entire nations find it easier to slip into a customer-driven regime than break out of it. So individual companies enjoy occasional bouts of inspiration that leads to some pioneering. Nations sometimes get inspired as a whole and get out in front, leading the global economy.

But sometimes, everybody is hanging back, waiting for somebody else to do the new learning.

Learning through Variation

As you might expect, what I call economies of variety involves learning that is closest to Darwinian: it is learning through artificial (or co-opted natural) variation. In the past few decades, a few companies seem to have demonstrated that it is possible to be consistently product-driven, pioneering one new category after another. Apple is of course, the textbook example, but there are several other candidates.

Others, like Twitter, have shown what happens when you fail to realize economies of variety, through what I call a too-big-to-nail pathology: they don't get big enough, quickly enough, to retain enough of the value they create. This can happen through either weak management, or creating too much value.

Economies of variety are the result of learning through variation (in the sense of trying a variety of things looking for product-market fit for example), with benefits realized as creative innovation capacity distributed throughout the system.

If economies of scale and scope are about doing the thing right, economies of variety are about doing the right thing. Where the "right thing" is figuring out new product categories repeatedly, before old ones enter their harvest phases or are taken over by imitators, fast-followers, and traditional voice-of-customer driven competitors. Economies of variety are fundamentally what long-lived product-driven companies are good at creating and banking. They pioneer category after category in a predictable way, because they run fat rather than lean, using leak-before-failure creativity to survive their own learning risks.

These companies don't seem to merely deal with Darwin, they appear to systematically beat Darwin. This beyond-Darwin survivability is variability selection. I didn't make up that term; I borrowed it from a theory that offers an explanation for why humans, in a sense, beat Darwin at the selfish-gene level and moved the game to the memes-and-culture level. Hominids, effectively, were selected for having brains capable of generating and adapting to variety in software rather than hardware. We are not only more exploratory and curious than most species, we have hardware that can handle a lot more variety and survive.

So companies that figure out economies of variety are to industrial age companies as hominids are to other animals: they've grown an organ analogous to a brain through a process like variability selection.

Scaling Innovation

One way to understand what these companies is to think of them as having figured out how to scale innovation itself, something industrial age companies largely failed to do.

Innovation as a function, and the economies of variety it can deliver when scaled, has historically been something of a third wheel in industrial age corporations built around economies of scale and scope.

Awkward constructs like industrial R&D labs, bolted onto fundamentally mercantilist corporations to turn them into Schumpeterian ones, have historically had uniformly poor track records of returning value to the parent company.

Xerox famously "fumbled" the future: the one bit of innovation from PARC it managed to hold on to, the laser printer, gave the company a fresh lease on life that sustained it for a couple of decades longer, but everything else that came out of the lab created wealth elsewhere in the economy. And Xerox was no exception. AT&T was not the primary beneficiary of the invention of the transistor at Bell Labs; Intel was. There are other examples.

These companies did capture some value, but they would have liked to capture more of the value than they did. Or better yet, gain control over the process of how much value they generated and retained.

The modern open innovation economy in the sense of Chesbrough (basically early-stage IP trading among corporate R&D outfits) is something of a market of consolation prizes for fumbling pioneers.

The classic industrial age corporation is a two-element chicken-egg loop of scaling and scoping. To expand on a thought in my earlier post, in an industrial style corporation, scoping decisions lead to scaling commitments, and scaling decisions lead to scoping commitments. There is no locus in this tight loop for innovation to enter in an endogeneous way. The best model we've had to date has been to have an R&D lab invent things and throw them over a wall into the loop (as an exogenous input), hoping for something good to happen.

Economies of variety offer an alternate model. You don't think in terms of a bolted-on laboratory and a scale-and-scope operation separated by a wall, with "innovation" consisting of throwing "inventions" over the wall as exogenous inputs and hoping for the best.

Instead you think in terms of a fat operations that use leak-before-failure designs that introduce calibrated amounts of variety across nominally uniform operations, to catalyze endogenous growth.

Note #1: The term 'fat thinking' is derived from Ben Horowitz's 2010 post The Case for the Fat Startup, which in turn is a response to the then-peak-of-fashion Lean Startup mode. The sense I'm using the term 'fat' here is related but not quite the same. Ben's sense primarily has to do with finance, while mine here primarily has to do with the relationship between innovation and scaling.

Note #2: Thanks to Dan Schmidt and Tiago Forte for useful discussions.

13 Comments

Great post, really got me thinking about some stuff.

1. If we are starting to see the emergence of "hominid companies" (Apple, Google w/ Google X, Amazon, etc.), what is the future of competitive markets? If some large companies have evolved a "brain" and can now effectively pioneer without getting killed, can we create a market where many entities can compete effectively? Or are we on a path towards monopolization in (and across) industries?

2. Before we reach the point of an economy run completely by enlightened, hominid companies, does this framework support audacious Keynsian fiscal policy? The government is usually one of the largest systems within any economy. Also it ostensibly exists for the benefit of society directly (as opposed to indirectly through some mechanism like profit seeking). Does the fat-learning framework support the idea of governments acting as martyr pioneers who are happy to pump large amounts of capital into huge projects and let the learnings accrue to others?

- Reminds me of this video -> https://www.youtube.com/watch?v=Z3tNY4itQyw

3. As someone with some background in finance (although not enough to get rich off of genius bets), bond returns can be used as a metaphor for the returns from fat learning. Usually with bonds, the vast majority of returns comes from the interest on interest, not from the individual bond's cash flows. Same way that most of the returns from fat-learning come from the spillover learnings that happen away from the point of failure, not necessarily the failure itself.

Would love to hear your thoughts.

I will have to return to this post and re-read it for greater profit. The minute I saw "bricolage" I told myself, Venkat is channeling Taleb. Then, antifragility, where to put the fat (Tony), and "Learn or Die" Microeconomics. I couldn't stop smiling. You sir are a master. Kudos.

Mr Rao,

I very much liked your post on "Legibility". Thank you.

Have you read Ludwig von Mises, "Bureaucracy"? I think that would add to the analysis of why states are so bent on "legibility".

It's available gratis on Mises.org

Thank you, and keep writing!

"The Wonderful One Horse Shay" - late 18c poem about a carriage so "perfectly" constructed that no part wore out before any other part (hence it disintegrated at one instant). From Oliver Wendel Holmes father of OWH Jr, famous supreme court justice.

http://holyjoe.org/poetry/holmes1.htm

Ah damn I read that poem decades ago. If I'd recalled it, I'd have mentioned it. Yes, exactly that principle.

this is exactly right. I can confirm as a former reactor operator.

haven't read the whole piece...just the blowoff valve principle is completely spot on.

our reactor was completely safe, and too small to fail.

we used to run drills of simultaneous shootings, earthquakes, spills, etc.

as per 10cfr20 and 10cfr50.

also most of the media hype about spent fuel storage is pretty bullshit.

or at least that's how me & coworkers felt because our reactor was so small.

it is worth noting our director believed that hormesis held for radioactive exposure.

same as high altitude sun exposure from plane flights...or mountain climbing.

wikipedia.org/wiki/Hormesis

abiding principle:

don't put a massive power reactor on an island where it could have an earthquake.

chernobyl's still doing OK.

now it's a nature preserve!

nice place to visit, just don't stay too long.

Did you mean to link http://allthingsd.com/20100317/the-case-for-the-fat-startup/ instead of this blog in the second last paragraph?

On this note, all evolved machines (living ones), contain large amounts of slack, and they are often the points where entirely new features crop up. Excess bones in the skull evolve into inner ear structures. Listening to high pitched sounds evolves into echolocation and so on.

Yes, thanks. Fixed.

Interesting wrinkle. I've been mainly thinking of slack in areas where you want either failover (so redundancy) or a 'failure budget' of multiple failures so it's the leaner parts that fail and evolve. I think what you're pointing out is a different vector of fatness, where failures might suggest new features to evolve towards. So that kind of slack is more like generic slack/stem cells/potential energy/liquid cash reserves.

I've been thinking through the biological analogy more closely, and I made up this speculative idea in another private discussion which could be completely wrong. Maybe you can correct me if so.

--- begin quote from private discussion --

Leaning out a part of a system is actually about turning it into a sensor in part. One of the 40 ways the eye probably evolved is simply some surface cells being more sensitive to light. Then there would be evolutionary advantage to evolving an eyelid to control when that sensor is used. Then there would be an evolutionary advantage to adding an intelligence/decision-making loop on there to control when to open or close the eyelid. So the full lean/fat analysis says the eye is the lean bit, the eyelid is the fat bit, and the brain circuit that controls when you open/close eyes is what drives agility and smart growth.

Interesting thought. I'll have to think more about it but at a certain level the distinction between sensor and control element and intelligence is not that well-defined in natural systems. Any slack can and will be coopted in different ways, "there would be evolutionary advantage to evolving an eyelid to control when that sensor is used" is not how evolution works, it could be that there are nerves around that are not used for something else all the time and when they start being used for this it confers a survival advantage to the critter leading to more copies of it. Fat cells may be primarily used for storing energy, but now that they are there, they will also be used for sequestering fat soluble toxins, or cushioning, or insulation, or hormone production.

Yeah, I get that... I was skipping lightly over a few million steps :D

But you're bringing up a new and perhaps profound point I think: liquidity of a resource is a function of time, not an absolute. We tend to think of a pile of money as a very "liquid" resource and a pile of very specific spare parts as a very "illiquid" resource. But given enough time, all resources are liquid.

In economic terms, money solves the dual-coincidence-of-wants problem via intermediation. But if time is not an issue, then you can wait billions of years for a particular kind of spare resource, like extra bone, to meet a particular kind of adaptive environment, such as one that makes sensitivity to sound a useful thing.

I agree, that's an excellent point "given enough time, all resources are liquid".