Quasiparticles and the Miracle of Emergence

Let’s start with a big question: why does science work?

Writ large, science is the process of identifying and codifying the rules obeyed by nature. Beyond this general goal, however, science has essentially no specificity of topic. It attempts to describe natural phenomena on all scales of space, time, and complexity: from atomic nuclei to galaxy clusters to humans themselves. And the scientific enterprise has been so successful at each and every one of these scales that at this point its efficacy is essentially taken for granted.

But, by just about any a priori standard, the extent of science’s success is extremely surprising. After all, the human brain has a very limited capacity for complex thought. We humans tend to think (consciously) only about simple things in simple terms, and we are quickly overwhelmed when asked to simultaneously keep track of multiple independent ideas or dependencies.

As an extreme example, consider that human thinking struggles to describe even individual atoms with real precision. How is it, then, that we can possibly have good science about things that are made up of many atoms, like magnets or tornadoes or eukaryotic cells or planets or animals? It seems like a miracle that the natural world can contain patterns and objects that lie within our understanding, because the individual constituents of those objects are usually far too complex for us to parse.

You can call this occurrence the “miracle of emergence”. I don't know how to explain its origin. To me, it is truly one of the deepest and most wondrous realities of the universe: that simplicity continuously emerges from the teeming of the complex.

But in this post I want to try and present the nature of this miracle in one of its cleanest and most essential forms. I’m going to talk about quasiparticles.

Let’s talk for a moment about the most world-altering scientific development of the last 400 years: electronics.

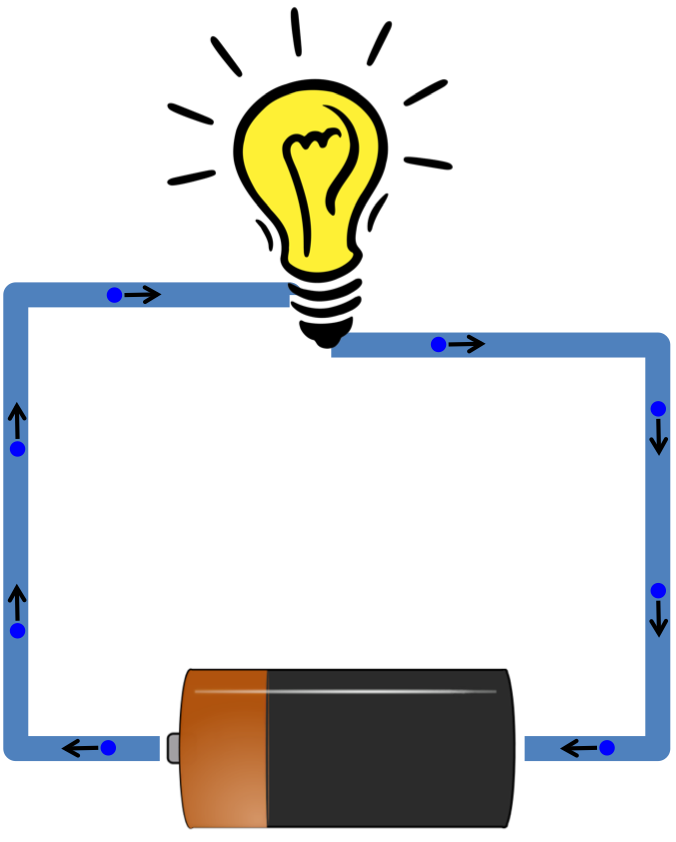

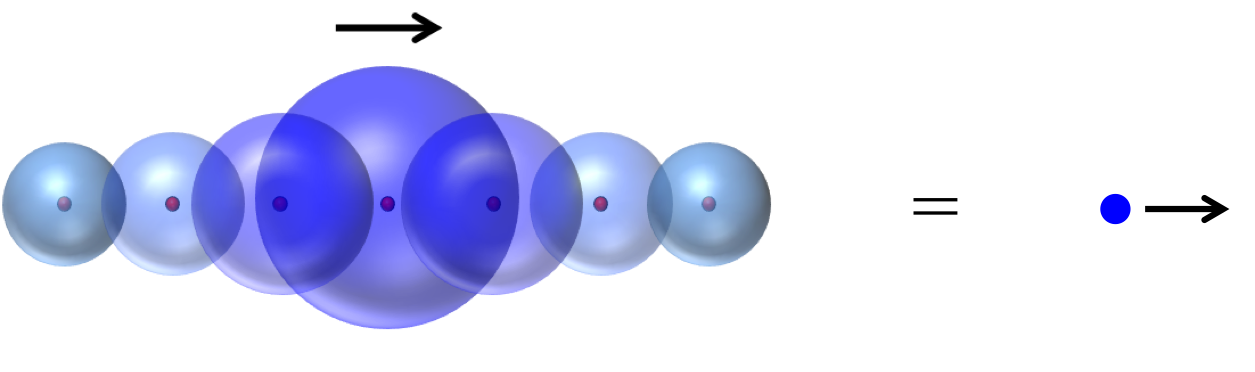

When humans learned how to harness the flow of electric current, it completely changed the way we live and our relationship to the natural world. In the modern era, the idea of electricity is so fundamental to our way of living that we are taught about it within the first few years of elementary school. That teaching usually begins with pictures that look something like this:

That is, we are given the image of electrons as little points that flow like a river through some conducting material. This image more or less sticks around, with relatively little modification, all the way through a PhD in physics or electrical engineering.

But there is a dirty secret behind that image: it doesn’t make any sense.

And an even deeper secret: it isn’t electrons that carry electric current. Instead, the current is carried by much larger and more nuanced objects called “electrons”.

Let me explain.

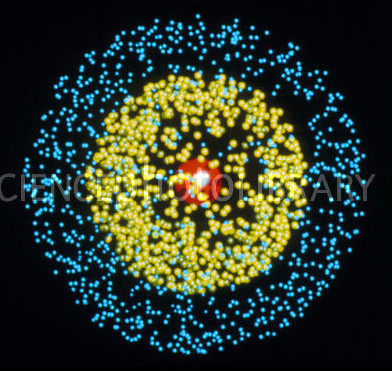

To see the problem with the standard picture, think for a moment about what metals are actually made of. Let’s even take the simplest possible example of a metal: metallic lithium, with three electrons per atom. A single lithium atom looks something like this:

Those points are meant to show the probability density for the electrons inside the atom. Two of the electrons (the yellow points) are closely bound to the nucleus, while the third (blue points) is more loosely bound. The exact arrangement of electrons around the nucleus is actually a difficult question, because the three electrons are continually pushing on each other (via very strong electric forces) as they orbit around the nucleus. So the precise structure of even this simple atom does not have an easy solution.

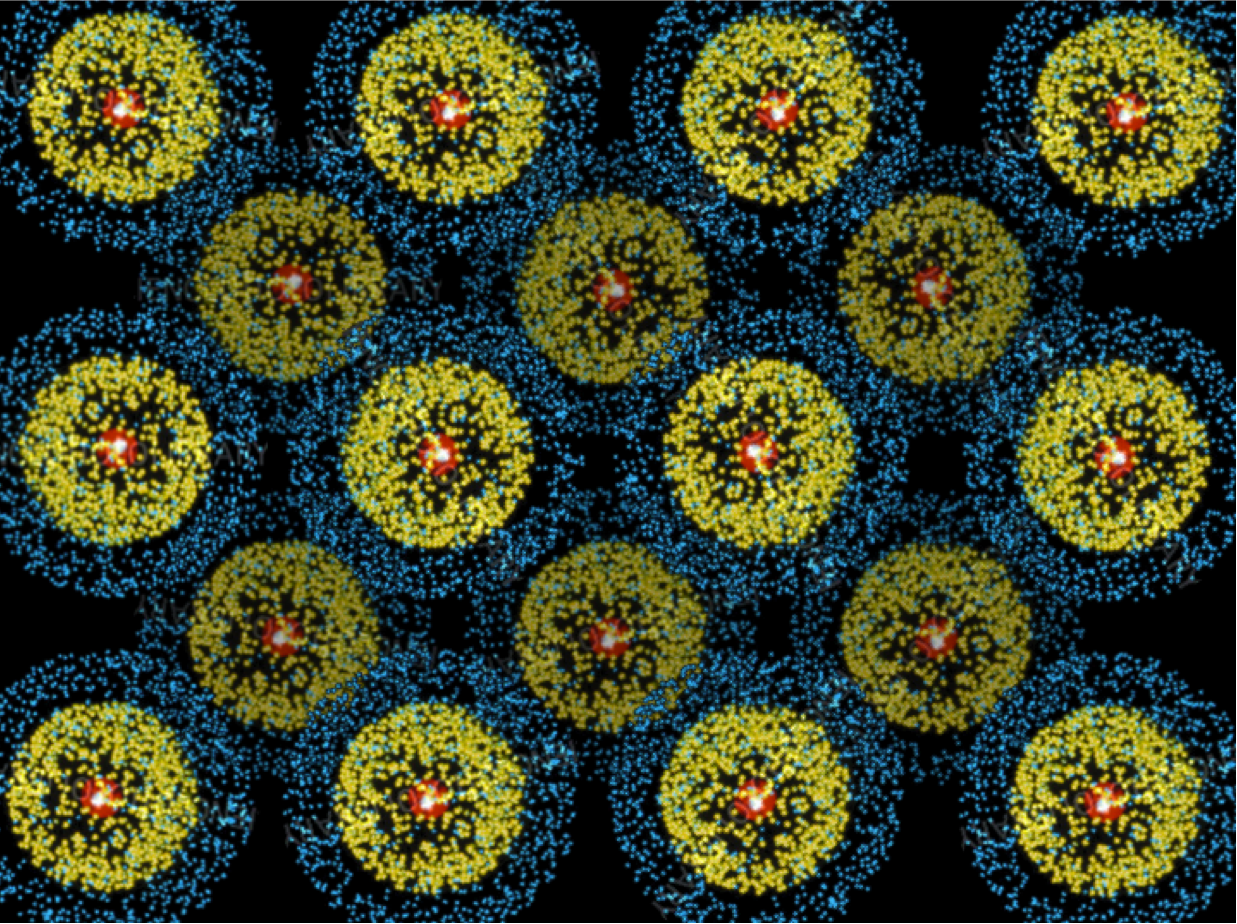

The situation gets exponentially messier, though, when you bring a whole bunch of atoms together to make a block of lithium. Inside that block, the atoms are packed together very tightly, something like this:

This picture may look tidy, but consider it from the point of view of an electron traveling through the metal. Such an electron has no hope for a smooth and simple trajectory. Instead, it gets continuously buffeted around by the enormous forces coming from the other nearby electrons and from the nuclei. So, for example, if you injected an electron into one side of a piece of lithium, it would absolutely not just sail smoothly across to the other side. It would quickly adopt a completely chaotic trajectory, and any information about its initial direction or speed would be lost.

(Of course, this is all to say nothing about how messy things are in a more typical metal like copper. In copper each atom has 29 electrons swirling around it in complicated orbits, rather than just 3. I can’t even draw you a good picture of a copper atom.)

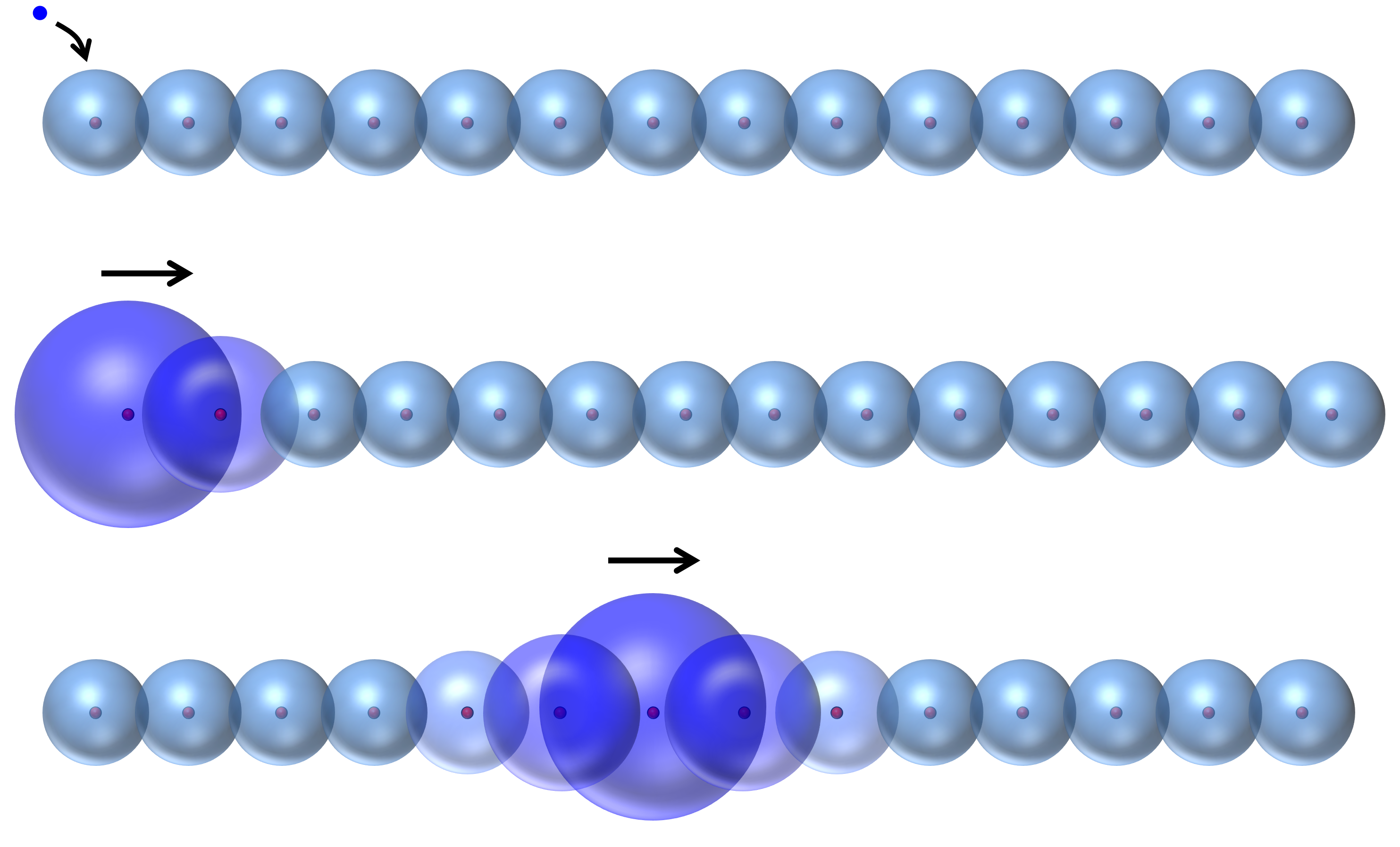

So thinking about individual electrons is hard – much too hard to be useful for any simple human reasoning. As it turns out, if you want to make any headway thinking about electric current, it actually makes sense to forget about the electrons’ individuality and just imagine clouds of probability density around each nucleus. Now, when you inject an electron into one side of the metal, it just adds some probability to the electron clouds on that side. And you can imagine that over time this probability moves on down the line, like so:

So this is how electric current is really carried. Not by free-sailing electrons, but by waves of probability density that are themselves made from the swirling, chaotic trajectories of many different electrons.

Messy, right? Well, now comes the miracle.

The key insight is that you can think about all those swirling, chaotic electron trajectories as a quantum field of electric charge, conceptually similar to the quantum fields out of which the fundamental particles arise. And now you can ask the question: what do the ripples on that field look like?

The answer: they look almost identical to real electrons.

In fact, those waves of electric charge density look so similar to “bare” electrons flying through free space that we even call them “electrons”. But they are not electrons as God made them. These “electrons” are instead an emergent concept: a collective movement of many jumbled and densely-packed God-given electrons, all pushing on each other and flying around at millions of miles per hour in chaotic trajectories.

But the emergent wave, that so-called “electron”, is startling in its simplicity. It moves through the crystal in straight lines and with a constant speed, like a ghost that can travel through walls. It carries with it the exact same charge as a single electron (and the exact same quantum-mechanical spin). It has the same type of kinetic energy, [latex]KE = \frac{1}{2} mv^2[/latex], and for all the world behaves like a naked electron moving through empty space. In fact, the only way you could tell, from a distance, that the “electron” is not really an electron, is that its mass is different. The “electron” that emerges from the sea of chaotic electrons feels either a bit heavier, or a bit lighter, than a bare electron. (And sometimes it is as much as 100 times heavier or lighter, depending on the details of the atomic orbitals and the atom spacing.)
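To make the idea of a shifted mass concrete, here is a minimal numerical sketch. It assumes a one-dimensional tight-binding band, E(k) = -2t cos(ka), which is a standard textbook toy model and not something derived in this post. The hopping energy `t` and the lattice spacing `a` are illustrative values, not measured ones. The effective mass is read off from the curvature of the band at its bottom, m* = ħ² / (d²E/dk²).

```python
import math

HBAR = 1.054571817e-34   # reduced Planck constant, J*s
M_E = 9.1093837015e-31   # bare electron mass, kg
EV = 1.602176634e-19     # one electron-volt in joules


def band_energy(k, t, a):
    """Toy tight-binding band E(k) = -2 t cos(k a) (an assumed model)."""
    return -2.0 * t * math.cos(k * a)


def effective_mass(t, a, k=0.0):
    """Effective mass hbar^2 / (d^2E/dk^2), using a central finite difference."""
    dk = 1e-2 / a  # small step compared with the Brillouin-zone size pi / a
    curvature = (
        band_energy(k + dk, t, a)
        - 2.0 * band_energy(k, t, a)
        + band_energy(k - dk, t, a)
    ) / dk**2
    return HBAR**2 / curvature


# Hypothetical parameters, chosen only for illustration.
t = 1.0 * EV    # hopping energy between neighboring atoms
a = 3.0e-10     # lattice spacing in meters

m_star = effective_mass(t, a)
ratio = m_star / M_E
print(f"m*/m_e = {ratio:.3f}")
```

With these assumed numbers the quasiparticle comes out lighter than a bare electron. In this toy model m* scales as 1/(t a²), so weaker overlap between neighboring orbitals (a smaller `t`) gives a heavier quasiparticle.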

This discovery – that the fundamental emergent excitation from a soup of electrons looks and acts just like a real, solitary electron – was one of the great triumphs of 20th century physics. The theory of these excitations is called Fermi liquid theory, and was pioneered by the near-mythological Soviet physicist Lev Landau. Landau called these emergent waves “quasiparticles”, because they behave just like free, unimpeded particles, even though the electrons from which they are made are very much neither free nor unimpeded.

To my mind, this discovery emphasizes the essence of what is beautiful about physical science. Complexity, by itself, has no inherent beauty. But there is something beautiful about observing a very simple thing emerging from an environment that initially appears to be a complicated mess. It gives the same fundamental pleasure as, say, watching waves roll onto the seashore (or, to a lesser extent, seeing people in a stadium do the wave).

A good deal of physics (especially condensed matter physics, my own specialty) is built on the pattern that Landau set for us. Its practitioners spend much of their time combing through the physical universe in search of quasiparticles, those little miracles that allow us to understand the whole, even though we have no hope of understanding the sum of its parts.

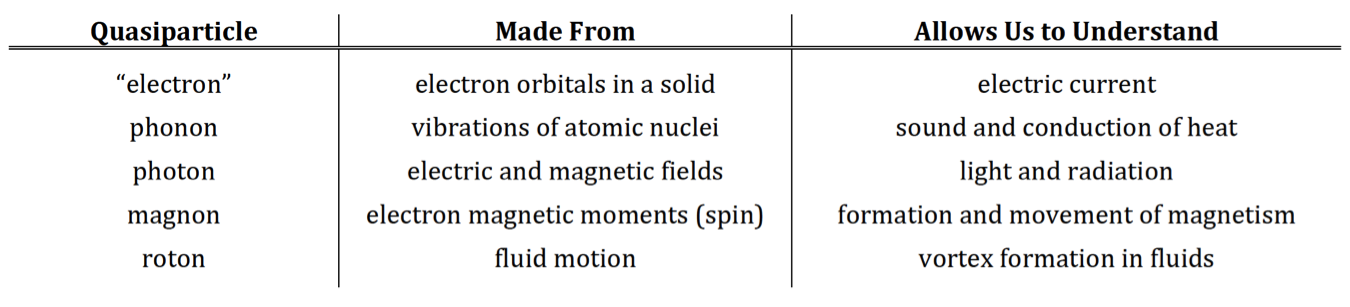

And at this point, we have amassed a fair collection of them. I’ll give you a few examples, in table form:

Each item in this list has its own illustrious history and deserves to have its story told on its own. But what they all have in common are the traits that make them quasiparticles. They all move through their host material in simple straight lines, as if they were freely traveling through empty space. They are all stable, meaning that they live for a long time without decaying back into the field from which they arose. They all come in discrete, indivisible units. And they all have simple laws determining how they interact with each other.

They are, in short, particles. It’s just that we understand what they’re made of.

One could ask, finally, why it is that these quasiparticles exist in the first place. Why do we keep finding simple emergent objects wherever we look?

As I alluded to in the beginning, there is no really satisfying answer to that question. But there is a hint. All of these objects have a mathematically simple structure. It somehow seems to be a rule of nature that if you can write a simple and aesthetically pleasing equation, somewhere in nature there will be a manifestation of the equation you wrote down. And the simpler and more beautiful the equation you can manage to write, the more manifestations you will find.

So physicists have slowly learned that one of the best pathways to discovery is through mathematical parsimony. We write the simplest equation that we think could possibly describe the object we want to understand. And, in no small number of instances, nature finds a way to realize exactly that equation.

Of course, the big question remains: why should nature care about man’s mathematics, or his sense of beauty? How can the same sorts of simple equations keep appearing at every scale of nature that we look for them? How is it that math, seemingly an invention by feeble human brains, is capable of transcending so thoroughly the understanding of its creators?

These questions seem to have no good answers. But they are, to me, continually awe-inspiring. And they swirl around the heart of the mystery of why science is possible.

26 Comments

I'm embarrassed to admit I never even tried to revisit high-school physics in light of undergrad q-mech or ask myself how "electrons" can move through metal in probability wave terms. I think I was imagining some sort of musical-chairs-in-a-straight-line played on a string of Bohr atoms or something.

I vaguely recall reading a book on semiconductors in high school that explained things using something called "band theory". Are energy bands a useful abstraction at an elegant-physics level, or more of an engineering hack?

Energy bands are definitely a useful idea. All materials have bands of allowable and non-allowable energies through which conduction occurs, and these bands arise from hybridization (overlap) of the atomic shells. My point is only that the current through those bands of energies is carried by the quote-unquote "electrons" (emergent probability waves), rather than by actual point-like electrons.

Do quasiparticles explain things like the speed of light in various media and refraction?

As I understand your question, the correct answer is yes. A bare photon travels at the speed of light, but we see light moving through materials (like water) at a slower speed. Just like "electrons" in a solid, light through a material is carried by "photons" which are really a combination of electromagnetic waves and polarization of the electrons in the material. These quasiparticles can have an effectively lower "speed of light".

As a side note, the fact that light moves slower in materials allows for very cool things to happen. For example, a very fast moving particle can move through a material faster than the light velocity. When it does so, it leaves behind an optical shock wave, just like sonic boom shock waves when a jet plane goes faster than the speed of sound. The optical shock wave is called "Cherenkov radiation".
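As a rough illustration of the two points above, the sketch below computes how fast a charged particle must go before it outruns light in a medium. It assumes the medium can be summarized by a single refractive index `n`, so that light travels at c/n, and it uses n ≈ 1.33 for water as an approximate, assumed value. The function names are hypothetical.

```python
import math

ELECTRON_REST_ENERGY_MEV = 0.51099895  # electron rest energy m c^2, in MeV


def cherenkov_threshold_kinetic_energy(n, rest_energy_mev):
    """Kinetic energy (MeV) at which a particle's speed equals c / n."""
    beta = 1.0 / n                          # threshold speed as a fraction of c
    gamma = 1.0 / math.sqrt(1.0 - beta**2)  # relativistic Lorentz factor
    return (gamma - 1.0) * rest_energy_mev


def cherenkov_angle_deg(n, beta):
    """Opening angle of the optical shock wave, or None below threshold."""
    if n * beta <= 1.0:
        return None  # particle is slower than light in the medium
    return math.degrees(math.acos(1.0 / (n * beta)))


N_WATER = 1.33  # assumed refractive index of water for visible light

threshold = cherenkov_threshold_kinetic_energy(N_WATER, ELECTRON_REST_ENERGY_MEV)
print(f"electron threshold in water: {threshold:.3f} MeV")
print("angle at beta = 0.5:", cherenkov_angle_deg(N_WATER, 0.5))
print("angle as beta -> 1:", cherenkov_angle_deg(N_WATER, 1.0))
```

The angle follows from the same geometry as a sonic boom: the shock front makes an angle θ with the particle's path, with cos θ = 1/(nβ), where β is the particle speed divided by c.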

Ah, so that's what Cherenkov radiation is.

That simple math equations show up in nature shouldn't be surprising. Math is not a pure invention of the feeble human mind, but a way to understand, as abstractly as possible, the workings of nature. Goedel proved you needed some assumptions/axioms to get to all the truths in a mathematical system. Those assumptions are the link to the "real world", so math is just a study of reality, just as-abstract-as-possible.

There are two questions here. One is "why is nature a mathematical system?" Or, in other words, why do mathematical assumptions hold when applied to the real world?

The second question is "why is nature a mathematical system at every level?" Even if you assume that the universe is an intrinsically mathematical object at its smallest-scale, most fundamental level, that doesn't immediately explain why it still looks like a mathematical object at much higher levels.

It is this second question, rather than the first, that I am (whimsically) calling the "miracle of emergence".

Well, since math is a HUMAN construct to describe fantastically-complex nature...ta-da, there's your answer. *Of course* we see formulae reflected in nature - it's a formalization of the observed behavior. For this to *not* happen would be expecting to see a moped when you say the word "car".

Don't let your training overthink this.

PS. Thanks for the blogging...brilliant, insightful stuff, and I'm a semi-educated arm-chair QED physicist (meaning I've read a few books on it in the search for the "great underlying reality", but I really don't know squat, and lack the training for the math behind it).

This stuff sounds really interesting. What book(s) would you recommend to someone who wanted to learn it, from a background of undergraduate math/physics?

I have personally always learned best from oral instruction and conversation, so there aren't any books that immediately come to mind. Let me think about this some more and I'll try to make some suggestions.

A few relevant books that I have really enjoyed (in order of increasing difficulty):

Quantum Field Theory for the Gifted Amateur, by Lancaster and Blundell

Theory of Solids, by Ziman

Statistical Physics of Fields, by Kardar

Learning upper-level physics by book takes a lot of patience, though, and usually an iterative approach where you read, think about/play with it for a while, and then go back and read it again.

I find this idea of "elegance" which seems to be ubiquitous in science/math/engineering to be very strange. To me, it seems that if a person considers something long enough, it is inevitable that they will see beauty in it. I wonder if it is a remnant of these fields' emergence from mystical precursors?

I think a key point of science is being lost here. The models we make of the physical world, are just that, models, which can at best only be asymptotically correct using experiments designed to break them. As these models were specifically designed to model reality, why would it be surprising that they do it well, or that simpler models will work at larger length scales? I do not find it surprising that the simplest known particles follow simple rules, I would find the opposite to be more strange.

I can't help but think that the brain's strength as a pattern recognizer is becoming maladaptive here.

I'm not trying to be mean, just trying to push back a little :)

I appreciate the pushback. I was trained in the "Russian school" of theoretical physics, for which a central tenet is that ideas advance only when someone is willing to argue with you. :)

It seems to me that the major question is not "why are our models simple?", but "why is it possible to successfully build simple models at scales much bigger than the particle scale?" It may seem very natural that the most fundamental particles obey simple rules, but it is still very surprising to me that much larger objects, which are built of an enormous number of chaotically-moving particles, follow equally simple rules.

You might be right, though, that physicists have an excessive focus on "elegance". I think part of it is just people wanting to justify thinking about ideas that they like, rather than (potentially more useful) ideas that they find ugly. So when someone succeeds scientifically by explicitly seeking "elegance", we tout those successes.

Then again, there is definitely something to be said for allowing people to work on the ideas that they're excited about.

Wouldn't it be more accurate to say that some large objects and macro phenomena follow simple rules and some do not?

Take climate or weather for example. Or even planetary orbits in systems with multiple bodies and over a long enough time.

Of course. I guess that, in my mind, things that are too complicated to understand (like the climate and the weather, or the orbits of many planets together) seem much more "natural" at the large scale than things that are simple enough to understand. So it remains somewhat of a mystery to me why there exist any things at all at the large scale that can be understood in very simple terms.

What I am trying to say, is that maybe the thought process of perceived "elegance" is a consequence of a model actually describing reality. Not the other way around, in which a model is perceived as elegant, then, surprise, it describes reality.

Maybe even, the process of interacting with a model for a significant time, molding it, refining it, till it almost becomes a part of you, gives you this perception of its elegance.

I'm not a scientist, but yes, I imagine that justifying spending so much time on something, especially when very few others do, would be a major challenge. On the other hand, from my perspective it seems most anything can become interesting, if you look deeply enough into it. This may just be me, but I don't tend to have any deep interest in a thing until I start digging into it.

It is amusing to me that this all may stem from people expecting a person to justify their pursuits. :) Everything seems to link back to our biological/social roots.

I can understand from a pure mathematical view, it seems strange that a simple model may describe reality reasonably at larger scales. But my everyday experience of the world physically, making simple models of everything around me continuously and subconsciously, that are good enough to usually have no problems, makes it not seem strange to me that simple model making is possible at all scales. Maybe this is a logical fallacy, "appeal to human experience" :)

I can definitely get behind people doing what they're excited about :)

What is the speed of this emergent electron in metals?

Just like a normal electron, the emergent "electron" can have essentially any velocity. Unlike light, the "electron" quasiparticle does not have a characteristic speed. It only has a characteristic mass.

Of course, there is sort of an upper limit on the quasiparticle speed, which is set by the speed of the physical electrons as they move around their electronic orbitals inside the atoms.

> It moves through the crystal in straight lines and with a constant speed, like a ghost that can travel through walls... In fact, the only way you could tell, from a distance, that the “electron” is not really an electron, is that its mass is different.

Interesting. I thought that a "real" free electron moves through the solid crystal, accelerating in the electric field - on average. So it's not a motion "with a constant speed". But looking properly I guess it might be similar to a wave of probability; after all, Ohm's law isn't for nothing.

Yeah, when you first learn Ohm's law, it is presented in the picture of "free" electrons that get accelerated through the crystal by an electric field, but that every now and then collide with something and have their speed reset.

This picture is more or less correct, but you need to mentally replace the point-like free electrons in that picture with the probability wave "electrons" that I drew above.
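The accelerate-then-collide picture described in this reply is what textbooks usually call the Drude model (a name not used in the thread). Here is a minimal sketch of it, with the bare electron mass swapped for a quasiparticle effective mass `m_eff`. The carrier density and collision time below are rough, order-of-magnitude values of the kind quoted for copper at room temperature, and should be read as assumptions rather than data.

```python
E_CHARGE = 1.602176634e-19   # elementary charge, C
M_E = 9.1093837015e-31       # bare electron mass, kg


def drude_conductivity(n, tau, m_eff):
    """Conductivity sigma = n e^2 tau / m_eff, in siemens per meter."""
    return n * E_CHARGE**2 * tau / m_eff


def drift_velocity(field, tau, m_eff):
    """Average carrier speed e E tau / m_eff in a field E (V/m)."""
    return E_CHARGE * field * tau / m_eff


# Illustrative, assumed inputs (roughly copper-like).
n_carriers = 8.5e28   # carriers per cubic meter
tau = 2.5e-14         # average time between collisions, seconds
m_eff = M_E           # effective mass taken equal to the bare mass here

sigma = drude_conductivity(n_carriers, tau, m_eff)
v_drift = drift_velocity(1.0, tau, m_eff)
print(f"sigma   = {sigma:.2e} S/m")
print(f"v_drift = {v_drift:.2e} m/s in a 1 V/m field")
```

Ohm's law falls out because the current density, n e v_drift, is proportional to the field. In this sketch, the only place the quasiparticle nature of the "electron" enters is through `m_eff`: a heavier quasiparticle gives a proportionally lower conductivity.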

In college (where I was not any kind of science major) I remember reading a paper by Leibniz where (if I remember correctly), he calculated the angle of refraction of light in water by first assuming that it would take the least amount of time possible to travel through water and therefore would have to alter its angle accordingly. Voila, wouldn't you know the angle he calculated turned out to perfectly match what had been empirically recorded. As a person generally befuddled by math, the only deep impression this left me with was a feeling of amazement that light would somehow "know," what the fastest course would be, and that Leibniz would casually assume that this must be so.

Not sure if that is the kind of emergent property you were talking about, but I think the sensation I felt at that moment was similar to the wonder you describe, if more vague. I don't draw the same kind of metaphysical conclusions Leibniz did (and it doesn't seem like you do either), but I certainly appreciate the strangeness of it all.

The principle of least time (now called Fermat's principle) is a truly beautiful piece of physics. We have now generalized it to something called the "principle of least action", which is one of the greatest and most useful ideas in physics. It still does feel a little miraculous that it works.

So yes, that is very much the kind of wonder that I'm talking about. I'm glad you could appreciate it. And I'm impressed that a non-science major was trying to read Leibniz!

By the way, you may appreciate this post (and perhaps the footnote in particular): https://gravityandlevity.wordpress.com/2015/10/11/the-simple-way-to-solve-that-crocodile-problem/
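The least-time calculation discussed in this thread can be checked numerically. The sketch below is an illustration under assumed geometry, not a reconstruction of Leibniz's own argument: light goes from a point above a flat water surface to a point below it, and a simple ternary search finds the crossing point that minimizes the travel time. The heights, the horizontal distance, and the refractive index 1.33 for water are all assumed values.

```python
import math


def travel_time(x, h1, h2, d, n1, n2):
    """Travel time (in units where c = 1) when crossing the surface at x."""
    return n1 * math.hypot(x, h1) + n2 * math.hypot(d - x, h2)


def fastest_crossing(h1, h2, d, n1, n2, iterations=200):
    """Ternary search for the crossing point of least travel time."""
    lo, hi = 0.0, d
    for _ in range(iterations):
        m1 = lo + (hi - lo) / 3.0
        m2 = hi - (hi - lo) / 3.0
        if travel_time(m1, h1, h2, d, n1, n2) < travel_time(m2, h1, h2, d, n1, n2):
            hi = m2   # the minimum lies to the left of m2
        else:
            lo = m1   # the minimum lies to the right of m1
    return 0.5 * (lo + hi)


# Assumed setup: source 1 m above the water, target 1 m below, 2 m apart.
h1, h2, d = 1.0, 1.0, 2.0
n_air, n_water = 1.0, 1.33

x = fastest_crossing(h1, h2, d, n_air, n_water)
sin_incident = x / math.hypot(x, h1)
sin_refracted = (d - x) / math.hypot(d - x, h2)

# Snell's law predicts that these two products are equal.
print(n_air * sin_incident, n_water * sin_refracted)
```

The path of least time bends at the surface so that n₁ sin θ₁ = n₂ sin θ₂, which is the empirically known law of refraction. Ternary search works here because the travel time is a convex function of the crossing point.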

Brian,

Thank you for this. I had not spent much time puzzling about it, but the idea of pushing a wad of stuff we call an electron through another wad of stuff we call a copper wire always struck me as fantastical.

I am happy to have such a clear explanation of quasiparticles - it has been exactly my experience that learning this level of physics is very difficult when only using books.

I am also intrigued by two things at this moment. You say:

'the “electron” is not really an electron, is that its mass is different. The “electron” that emerges from the sea of chaotic electrons feels either a bit heavier, or a bit lighter, than a bare electron.'

A further explanation would help me, if that isn't too hard. Is this another facet of Ohm's current/voltage relationship? Or a clue to what creates mass? It seems to me that all the definitions I have heard of mass & gravity have been simply circular, and not very revelatory of what I consider a very deep mystery. It is something like what you are saying here. You ask why all this chaotic activity resolves to something that can be described in simple observational terms. Likewise, I am continually amazed that a reality that is so well described by probability fields can feel so solid. How anything can appear to have heft and sharp edges. My thought is that the phenomenon of mass is key. I think we are circling the same mystery.

I am also happy to see photons on the list of emergent quasiparticles. I have always been suspicious of the silliness that gets attributed to the Welcher-Weg experiments. How a photon is treated as if it is both a bowling ball and a pond ripple coexisting, then we look at it, and the photon becomes one or the other. If instead, a 'photon' is a compact description of a disturbance traveling through some kind of background, then we should not be so surprised when its observed properties squish around as we hit it with an experimental hammer.

Hi Eric,

Thanks for your comments. The mass of the probability-wave "electron" actually has nothing to do with gravity. Gravity is more or less completely irrelevant when you're talking about the interaction of things that are smaller than planet-sized.

Instead, the mass here is the inertial mass, by which I mean it is a measure of how hard you have to push on the "electron" in order to accelerate or decelerate it. When you try to accelerate the "electron", the shape of the probability wave changes a little bit (essentially, getting narrower or sharper), and this gives it a larger energy due to electric repulsion of the charges inside it. The net effect is the same as for normal massive objects, where having a higher mass means that you have to push harder to get them going. But here the very concept of mass is also an emergent property, rather than something God-given.

I wrote some more about the idea of effective mass here:

https://gravityandlevity.wordpress.com/2010/08/16/when-f-is-not-equal-to-m-a/

Anyons are interesting https://en.wikipedia.org/wiki/Anyon

They might be used for quantum computation https://golem.ph.utexas.edu/category/2008/03/physics_topology_logic_and_com.html

With respect to the beauty of emergent phenomena, you may be interested in the work coming out of Jim Sethna's group at Cornell. See:

http://www.lassp.cornell.edu/sethna/Sloppy/