The Cactus and the Weasel

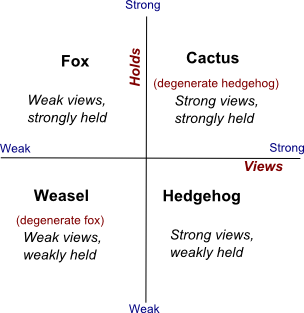

The phrase "strong views, weakly held" has crossed my radar multiple times in the last few months. I didn't think much about it when I first heard it, beyond noting that it seemed to be an almost tautological piece of good advice. Thinking some more though, I realized two things: that the phrase neatly characterizes the first member of my favorite pair of archetypes, the hedgehog and the fox, and that I am actually much better described by the inverse statement, which describes foxes: weak views, strongly held.

If this seems counterintuitive or paradoxical to you, chances are it is because your understanding of the archetypes actually maps to more commonplace degenerate versions, which I call the weasel and cactus respectively.

True foxes and hedgehogs are complex and relatively rare individuals, not everyday dilettantes or curmudgeons. A quick look at the examples in Isaiah Berlin's study of the archetypes is enough to establish that: his hedgehogs include Plato and Nietzsche, and his foxes include Shakespeare and Goethe. So neither foxes, nor hedgehogs, nor conflicted and torn mashups thereof such as Tolstoy, conform to simple archetypes.

The difference is that while foxes and hedgehogs are both capable of changing their minds in meaningful ways, weasels and cacti are not. They represent different forms of degeneracy, where a rich way of thinking collapses into an impoverished way of thinking.

I seem to have been dancing around these ideas for about a year now, over the course of three fox/hedgehog talks I did last year, and even a positioning for my consulting practice based on it, but I was missing the clue of the strong views, weakly held phrase.

It took a while to think through, but what I have here is a rough and informal, but relatively complete account of the fox-hedgehog philosophy, that covers most of the things that have been bugging me over the past year. So here goes.

Views and Holds

Let's first make the connection between the fox/hedgehog pair and the views/holds pair explicit.

The basic distinction between foxes and hedgehogs is Archilochus' line, the fox knows many things, the hedgehog knows one big thing. The connection to views and holds is this: many things refers to weak views; one big thing refers to strong views. We'll get to views and why this connection holds in a minute, but let's take a quick look at strong and weak holds, about which Archilochus has nothing explicit to say. There is an implicit assertion in the definition though.

To get a hedgehog to change his/her mind, you clearly have to offer one big idea that is more powerful than the one big idea they already hold. To the extent that their incumbent big idea has a unity based on ideological consistency rather than logical consistency (i.e., it is a religion rather than an axiomatic theory), you have to effect a religious conversion of sorts. The hedgehog's views are lightly held in the sense of being dependent on only a few core or axiomatic beliefs. Only a few key assumptions anchor the big idea. That is the whole point of seeking consistency of any sort: to reduce the number of unjustified beliefs in your thinking to the minimum necessary.

To get a fox to change his or her mind on the other hand, you have to undermine an individual belief in multiple ways and in multiple places, since chances are, any idea a fox holds is anchored by multiple instances in multiple domains, connected via a web of metaphors, analogies and narratives. To get a fox to change his or her mind in extensive ways, you have to painstakingly undermine every fragmentary belief he or she holds, in multiple domains. There is no core you can attack and undermine. There is not much coherence you can exploit, and few axioms that you can undermine to collapse an entire edifice of beliefs efficiently. Any such collapses you can trigger will tend to be shallow, localized and contained. The fox's beliefs are strongly held because there is no center, little reliance on foundational beliefs and many anchors. Their thinking is hard to pin down to any one set of axioms, and therefore hard to undermine.

This means that it is actually easier to change a hedgehog's mind wholesale: pick the right few foundational beliefs to challenge or undermine, and you can convert a hedgehog overnight. It is the reason the most fervent true believers in a religion are the new converts. It is the reason the most strident atheists are the once-religious. Hedgehogs whose Big Ideas are undermined through betrayal by idols can turn into powerful enemies overnight.
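For readers who think better in code, here is a toy sketch of the asymmetry. It is my own illustration under loud assumptions: beliefs are nodes, each belief lists the beliefs that anchor it, and a belief survives as long as at least one anchor still stands. The function name `collapse` and the example belief names are hypothetical, not part of any real model.

```python
def collapse(anchors, attacked):
    """Return every belief that falls once the `attacked` beliefs are undermined.

    `anchors` maps each belief to the list of beliefs that justify it.
    A belief with no anchors is treated as axiomatic and only falls if
    attacked directly. Assumption: a non-axiomatic belief survives as
    long as at least one of its anchors is still standing.
    """
    fallen = set(attacked)
    changed = True
    while changed:  # keep propagating until nothing new falls
        changed = False
        for belief, supports in anchors.items():
            if belief in fallen or not supports:
                continue
            if all(s in fallen for s in supports):
                fallen.add(belief)
                changed = True
    return fallen


# Hedgehog: everything ultimately hangs off one big idea.
hedgehog = {
    "big_idea": [],
    "economics": ["big_idea"],
    "politics": ["big_idea"],
    "policy": ["economics", "politics"],
}

# Fox: one belief anchored independently in several unrelated domains.
fox = {
    "control_theory_case": [],
    "evolution_case": [],
    "fitness_case": [],
    "random_inputs_drive_learning": [
        "control_theory_case",
        "evolution_case",
        "fitness_case",
    ],
}

print(sorted(collapse(hedgehog, {"big_idea"})))
print(sorted(collapse(fox, {"evolution_case"})))
```

In this sketch a single attack on the hedgehog's core takes down the whole structure, while the same single attack on the fox stays local. You would have to knock out every anchor, one domain at a time, before the fox's belief falls.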

Now let's talk weak and strong views.

The Strength of Views

We don't hold our beliefs as large collections of atomic propositions. Instead, the bulk of our beliefs are organized into clusters we call views that correspond to beliefs about specific domains. One or a few of these views may be deep views, representing one or more home domains.

If all our views happen to be connected and relatively consistent, we call it a world view. Both foxes and hedgehogs have views. Hedgehogs in addition have world views.

Consider the difference between strong and weak religiosity. In a Christian culture, the former typically evokes the image of a Biblical literalist, who believes every little detail in the Bible literally. The latter typically evokes the image of somebody who believes in an eclectic subset of moral principles captured in favored proverbs, parables and allegories.

The latter belief system is robust to challenges to a vast majority of literal details in the Bible. The former will be forced to defend many more fronts against attack, ranging from the 7-days-of-creation belief to the core belief in the literal resurrection of Christ.

A view is generally a belief complex: A set of interdependent beliefs. Some are so fundamental, they are practically axiomatic (in either an ideological or logical sense). Undermine those fundamental beliefs and everything else falls apart. Others are so peripheral, nothing depends on them.

Within views, you find complex structures that behave differently under different interpretations. For example, if you treat a religious view as metaphoric, it becomes a lot harder to undermine than if you treat it as literal. Metaphoric views create strong holds because they are weak interpretations.

So a view is a belief complex with a non-random structure (there are more and less fundamental elements), along with an interpretation: a system of justification that allows you to reach less fundamental beliefs from more fundamental ones.

A strong view is one that encompasses a large number of beliefs in a domain, and is defended with the most literal interpretation available. By contrast, a weak view is one that encompasses only a few critical beliefs, and is defended with the most robust interpretation available.

A strong view is strong in two senses of the word.

- It is powerful. Because it says so much, and so literally, to the extent that it is true or unfalsifiable, it is very useful. A detailed and literal religiosity is a fully featured operating system for a lifestyle. A vague and figurative spirituality may be more defensible in debates with atheists, but offers very little by way of practical prescriptions for life. A detailed prediction about the future of an industry, with predictions about individual companies down to the future behavior of their stocks, is something you can bet money on. A loose and figurative prediction at best allows you to quickly interpret events as they unfold in detail.

- It is tedious to undermine even though it is lightly held. A strong view requires an opponent to first expertly analyze the entire belief complex and identify its most fundamental elements, and then figure out a falsification that operates within the justification model accepted by the believer. This second point is complex. You cannot undermine a belief except by operating within the justification model the believer uses to interpret it. A strong view can only be undermined by hoisting it by its own petard, through local expertise.

For most views we hold today, we often have no idea what is fundamental and essential, and what is peripheral and dispensable. There is so much knowledge in the world today about major issues such as global warming and the obesity epidemic that nobody seems able to zero in on the fundamental premises within any given goat rodeo.

This was apparent in the recent evolution versus creationism debate between Bill Nye and Ken Ham. I watched a part of it and was struck by how thoroughly pointless it seemed. Neither side could convince the other within their own schemes of justification and interpretation, in large part because neither side really understood the fundamental beliefs in their own view, let alone on the other side.

Changing Your Mind

We change our mind all the time when we are dealing with isolated, atomic beliefs. We might experience a minor stab of chagrin when somebody googles us wrong in real time, but it's a pinprick that passes.

When people talk about the difficulty of changing minds, both their own and others', they generally mean changing views, complete belief complexes about a particular domain, or world-views, belief complexes that encompass the totality of human existence.

Changing a view is like uninstalling a software program from your computer, installing a substitute, and learning the new software. Changing a world view is like switching entire operating systems.

Strong views represent a kind of high sunk cost. When you have invested a lot of effort forming habits, and beliefs justifying those habits, shifting a view involves more than just accepting a new set of beliefs. You have to:

- Learn new habits based on the new view

- Learn new patterns of thinking within the new view

This is why I would never attempt to debate a literal creationist. If forced to attempt to convert one, I'd try to get them to learn innocuous habits whose effectiveness depends on evolutionary principles (the simplest thing I can think of is A/B testing; once you learn that it works, and then understand how and why it works, you're on a slippery slope towards understanding things like genetic algorithms, and from there to an appreciation of the power of evolutionary processes).

Paradoxically, this again means it is harder to change fox minds than hedgehog minds. A lack of deep expertise means there are fewer strong doer habits anchoring beliefs, and instead, beliefs are anchored by beliefs in other domains. Belief modification through behavior modification is harder because there are fewer behaviors to modify.

We now have a basic account of holds and views, strength and weakness, and thumb-nail portraits of foxes and hedgehogs in those terms. So what does it mean to have a strong view, weakly held? What is the best-case behavioral profile of an enlightened, non-degenerate hedgehog?

Strong Views, Weakly Held

Strong views, weakly held is a powerful heuristic because it suggests you cultivate the ability to switch full-blown hedgehog world views, very fast. In the software metaphor, you get very good at switching out software packages and rebuilding your operating environment anew, and even complete operating systems (in the case of deep conversions).

The key to holding your views weakly is recognizing a basic fact about human thinking: it is far easier to recognize when one of your fundamental beliefs has been undermined than to figure out which of your beliefs is fundamental. It is harder to recognize all the ways in which you can be checkmated than to recognize an opponent's specific move as a path to an inevitable checkmate (the sign is fear-uncertainty-doubt as all sorts of things start going wrong for you).

This means you learn faster when there is an adversary trying to undermine your beliefs.

This is because for hedgehogs, habits are far more fundamental than the beliefs associated with the habits. But for an adversary who does not have your habits, the logical structure of your beliefs (some of which you may not even be aware of) is all that is relevant. All the behavioral clutter and inertia has been eliminated. They are more free to spot your fundamental premises and attack them (it is a behavioral analog to being hoist by your own petard: to have your own habits used against you).

Once you recognize that your adversary has an advantage in learning some things about you, due to the lack of the baggage of habits, you see an adversary as a teacher or a learning aid, rather than somebody who is just there to be defeated (that too, of course).

This is the idea of rapid reorientation (or "fast transients") in the OODA-loop view of decision-making, and sheds light on what precisely is involved in achieving this ability.

- Learning to recognize when your views have been completely undermined, versus lightly damaged.

- Immediately switching to the default assumption that every other belief within the view is likely suspect now, even if it hasn't yet been specifically undermined.

- In building a new view, on top of new habits, treating old beliefs as false and irrelevant unless proven true and relevant. In other words, if a new habit collides with an old habit or belief, the latter must be assumed guilty until proven innocent.

Beginners don't even know how to recognize when they are completely screwed, and soldier on bravely until somebody puts them out of their misery. Intermediate level thinkers recognize when their position has been completely undermined, but fail to immediately put a question mark on every other belief in the view, and switch into salvage mode rather than reconstruction mode. Advanced thinkers do both, but can be sloppy about preventing old habits and beliefs from contaminating new view formation.

A hedgehog who learns to achieve fast transients is set up to play an infinite game rather than a finite game.

Now, what about the enlightened fox, if there is such a thing?

Weak Views, Strongly Held

I do not hold truly strong views because I do not have much of a capacity for deep domain-specific detail, and outside of very narrow areas, am not much of a doer, which means I have far fewer specialized habits of expertise than powerful doers.

In most areas: politics, culture, governance, technology, startups and all the other topics about which I offer views from an armchair, my thinking could be characterized as weak views, strongly held. Even in areas where I have some home-domain expertise and doer skills, I don't hold particularly strident and detailed views. To a large extent, I have no home domain. I am a cognitive nomad.

So by weak views, I mean I primarily approach all areas (including my nominal home domains) as an outsider, with the intent of identifying and forming opinions about fundamental premises, rather than achieving insider status and mastery.

This is not always possible, because often the most important beliefs within a view are buried too deep inside the technical part of a belief system, and inaccessible to casual outsider tourists. For example, in formal logic, a casual tourist is unlikely to encounter, let alone appreciate, the idea that the axiom of choice is fundamental.

Or worse, the most fundamental beliefs may never have been articulated at all. This is the strong form of Taleb's model of antifragile doer-knowledge, where the set of habits mark out a bigger space of cognitive dark matter than the set of explicit beliefs cover, leading to the possibility that the most important beliefs have not yet been stated, and might never be.

By strongly held, I mean, I tend to accept or reject any locally fundamental beliefs I find based on justifications from other domains (often driven by analogy or metaphor). What is strong about the "holding" is that it is anchored by many independent justifications in unrelated domains, just as strong views are anchored by many details and unexamined habits in one domain.

Take for example one of my favorite technical ideas: that "random inputs drive open-ended learning." It's an idea you find in machine learning, control theory, theories of fitness (ideas like "muscle confusion") and dieting, cybernetics (Ashby's law), signal processing, information theory, biological evolution, automata theory, metallurgy, and optimization theory. It also happens to be a key idea in Taleb's notion of antifragility -- the "disorder" that things gain from, though not the key idea (that would be convexity, an equally ubiquitous many-domains idea). To my knowledge, nobody has come up with a canonical grand-unified version of the set of instances of the principle (Ashby's version comes closest, but is pretty weak).

I have no particular attachment to the version of the idea in my home domain of control theory (where it is known as persistency of excitation). It is the version I understand best, but not by much. I use the version of the idea that fits the immediate pattern recognition problem the best.

If the basic challenge for the hedgehog is to get better at fast transients in the sense of switching rapidly from one strong view to another, and quickly shifting habits, the basic challenge for the fox is to shift quickly from one pattern of organization of related beliefs in multiple domains to another. Hedgehog fast transients are like paradigm shifts in physics. Foxy fast transients are like shifting from one organization scheme for stamp collecting to another.

As with hedgehog fast transients, when this works, foxes are set up to play the infinite game rather than the finite game. They do not get locked into any particular habits of pattern recognition that can be used against them (such as 2x2 diagrams, archetypes or specific narrative structures).

When a belief works across so many domains and seems fundamental to many, you naturally hold on to it strongly, and even if it is apparently undermined in one, you don't reject it, because it has some independently demonstrated value and credibility in other domains. You tend to suspect a local mistake, exceptional conditions or a pattern recognition mistake on your own part.

This means, weak views, strongly held, is a heuristic for collecting domain-independent truths.

But hedgehogs presumably encounter and collect domain-independent truths too. What makes the two different is what they do with these collections.

What can you do with domain-independent truths? You can either form totalizing world views, or you can end up with a refactoring mindset. The former is the hedgehog strategy, the latter is the fox strategy.

This gives us two types of religion.

Heuristic and Doctrinaire Religions

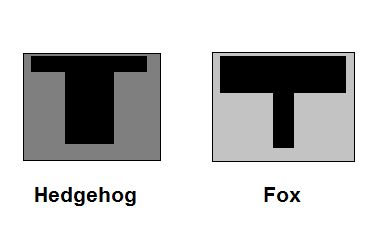

The deep difference between foxes and hedgehogs comes down to their preferred styles of thinking and doing outside their home domains. To get at this difference, you have to first get beyond the coarse distinction between generalists and specialists. There are really no pure generalists or pure specialists. Everybody is what career counselors call a T-shaped professional (an awful term, but it's stuck).

The difference is that hedgehogs are fat-stemmed Ts who explore the world in a dominantly depth-first way, prioritizing home-domain expertise first, while foxes are fat-bar Ts who explore the world in a dominantly breadth-first way, doing the minimum necessary for survival in a home domain.

Each has an "antilibrary" of the unknown, to use Taleb's term, of the complementary shape, as shown below (black is known, white is unknown). Foxes have lots of books 30% read, and a few 100% read; hedgehogs have lots of books 5% read (judged by their cover), and a few 300% read (repeatedly and closely re-read).

Don't make the mistake of thinking one is top heavy while the other is solidly rooted. There is no gravity in this T-metaphor.

Let's consider thinking and doing in turn. Thinking first.

Foxes prefer to rely as little as possible on what they know from their home domain (for the very good reason that as weak-stemmed T's, they don't trust their home-domain expertise much anyway), and instead rely on ad hoc metacognition based on freewheeling use of metaphor, narrative, analogy and other kinds of cheap tricks. They eschew Platonic abstractions and grand-unification formalisms. This makes their religions highly heuristic. Catholicism and Hinduism in daily practice (as opposed to theological study) are highly heuristic religions. In Kahneman's terms, foxy religions are System 1 religions.

Heuristic religions are based on fragmentary, unintegrated collections of meta-knowledge. Practicing them involves a lot of energetic and lively metacognition. You have to work with analogies, metaphors, stories, patterns and so forth, in order to form beliefs in new domains. They require heavy-bar T personalities and cognitive styles.

A non-religious example is the kind of refactoring I do on this blog: apply a grab-bag collection of tools and ideas on sets of meta-knowledge, without attempting to coalesce them into grand unified theories. To think using a refactoring approach, you merely try a number of likely seeming tools, and work with the first one that fits and does something vaguely useful. This is why you can think fast with a foxy religion. The heavy-bar T helps speed you up.

Hedgehogs rely a great deal on what they know from their home domain (because they know a lot there, and are inclined to milk that knowledge) and prefer to apply that knowledge through abstraction and reasoning based on those abstractions. They eschew ad hoc metacognition and work hard to form strong and efficient metanorms instead. This makes their religions highly doctrinaire. Islam and Protestant Christianity are highly doctrinaire. In Kahneman's terms, hedgehog religions are System 2 religions.

Doctrinaire religions are highly integrated collections of meta-knowledge, where the integration is achieved through inductive generalization based on abstract categories inspired by privileged instances of patterns from a home domain. In other words, you use a thick stem to sustain a thin bar on your T. In order to form beliefs in new domains, you first fit the new domain to your efficient abstractions, and then reason with those abstractions (carefully, because your abstractions come with a home-domain bias).

A non-religious example is Taleb's philosophy of antifragility. To think using his philosophy of antifragility, you have to first cast the ideas in a particular domain into the abstractions used in his model: disorder, convexity and so forth, and then apply careful formal reasoning. This is why you can only think slow with a hedgehog religion. The light-bar T doesn't help you much.

On the other hand, when it comes to doing in a new domain, the advantages are flipped. Foxy religions aren't very useful for guiding actions, quick or otherwise, even though they offer quick-and-dirty appreciations of novelty. Foxy religions are naturally self-limiting. They limit you to an armchair.

Hedgehog religions, on the other hand, yield strong guides to action in alien territory. This is because abstract categories lead to very quick actions once you can fit data to them. These are metanorms: behavioral principles based on abstractions. An example from the antifragility philosophy is Taleb's principle of integrity: if you see fraud and don't say fraud, you are a fraud.

This requires a strong abstraction associated with the concept of fraud.

It is no accident that the metanorm here supports a decisive separation into good and evil. Much of the action driven by efficient metanorms involves actions of ideologically driven inclusion or exclusion. Another example from Taleb is his assertion that much of academic scholarship outside of physics and some parts of cognitive science, is nonsense.

Much of the time, inclusion/exclusion is the only kind of action we take in alien domains anyway. We decide whether or not to visit certain cities or countries. We decide whether or not certain people are worth paying attention to. We decide whether or not certain subjects are worthy of further study.

So far, I haven't done myself and my foxy brethren any favors. Foxy religions are sloppy, error-prone, quick-and-dirty ways of manufacturing insight porn from armchairs, and are useless in guiding action. Hedgehog religions are careful, reliable and slow-and-steady ways of manufacturing solid guides to action in alien territory.

The hedgehogs win on home turf too. They are solid and expert doers on home-ground, thanks to their thick-stemmed T personalities. Foxes, if their T's even have a respectable stem, are rarely respected experts and leaders in their fields.

At best, they are credited with being the imaginative and flighty idea people in their home domains, where they don't produce much, but make for lively party guests and occasionally get their more formidable peers unstuck on some minor point (a capacity usually attributed to luck).

Is there any value at all to being a fox?

The Tetlock Edge

The one slim area where foxiness is generally acknowledged to be an advantage is anticipation. By a slim margin, and based on relatively sparse evidence from one domain (political trend prediction), foxes appear to be somewhat less wrong when it comes to predicting the future than hedgehogs.

It isn't much, and given the half-life of facts, the presumptive advantage may not last long, but we'll take it. Beggars can't be choosers.

Where does this advantage, let's call it the Tetlock edge, come from? I have a speculative answer.

It comes from eschewing abstraction and preferring the unreliable world of System 1 tools: metaphor, analogy and narrative; tools that all depend on pattern recognition of one sort or the other, rather than classification into clean schema. Fox brains are in effect constantly doing meta-analyses with unstructured ensembles, rather than projecting from abstract models.

That's where the advantage comes from: eschewing abstraction.

Abstraction creates meta-knowledge via inductive generalization, and can grow into doctrinaire world views. The way this happens is that you try to formalize the interdependencies among all your generalized beliefs. Your one big idea as a hedgehog is an idea that covers everything, the whole T-box, so to speak. Abstraction provides you with ways to compute beliefs and actions in domains you haven't even encountered yet, thereby coloring your judgment of the novel before the fact.

Pattern recognition creates meta-knowledge through linkages among weak views in multiple domains. The many things you know start getting densely connected in a messy web of ad hoc associations. Your collection of little ideas, densely connected, does not cover everything, since there are fewer abstractions. So you can only form beliefs about new domains once you encounter some data about them (which means you have an inclusion bias). And you cannot act decisively in those domains, since you lack strong metanorms. This means pattern recognition leaves you with a fundamentally more open mind (or less strongly colored preconceptions about what you do not yet know).

The way you slowly gain a Tetlock advantage, if you live long enough to collect a lot of examples and a very densely connected mind full of little ideas, is as follows: The more you see instances of a belief in various guises, the better you get at recognizing new instances. This is because the chance that a new instance will be recognizably close to an existing instance in your collection increases, and also because patterns color the unknown less strongly than abstractions.

As you age, your mind becomes a vessel for accumulating a growing global context to aid in the appreciation of novelty.

Abstraction offers you a satisfyingly consistent and clean world view, but since you generally stop collecting new instances (and might even discard ones you have) once you have enough to form an abstract belief through inductive generalization, it is harder to make any real use of new information as it comes in. There is already a strongly colored opinion in place and guides to action that don't rely on knowing things. Your abstractions also accumulate metanorms, and give you an increasing array of reasons to not include new information in your world view.

The Fox-Hedgehog Duality

If you've been following along closely, you might have foxily jumped to a conclusion pregnant with irony: foxiness is antifragile metacognition and fragile doing. It gains from disorder in the form of new, non-local information.

This is what it means to have a thick-bar/thin-stem T. Since foxy thinking operates via associations among instances of patterns, there is no single point of failure for a broad-based belief. The belief might not even exist in reified form as an abstraction, much as hedgehog doer-beliefs might only exist in the form of unconscious habits.

Hedgehog thinking is fragile metacognition coupled with antifragile doing. It gains from disorder in a local domain, but the associated pattern of metacognition gets progressively weaker, less reliable and more exclusionary.

This is a very strange conclusion, but there is an interesting analogy (heh!) that suggests it is correct -- the distinction between structured and unstructured approaches to Big Data, relying on RDBMS technology and NoSQL technology respectively.

Foxes are fundamentally Big Data native people. They operate on the assumption that it is cheaper to store new information than to decide what to do with it. Hedgehogs are fundamentally not Big Data native. If they can't structure it, they can't store it, and have to throw it away. If they can structure it with an abstraction, they don't need to store most of it. Only a few critical details to fit the Procrustean bed of their abstraction.

Because foxes resist the temptation of abstraction (and therefore the temptation to throw away examples of patterns once an inductive generalization and/or metanorm has been arrived at, or stop collecting), they slowly gain an advantage over time, as the data accumulates: the Tetlock edge.

We can restate the Archilochus definition in a geeky way: The fox has one big, unstructured dataset, the hedgehog has many small structured datasets.

But this takes a long time and a lot of stamp collecting, and foxes have to learn to survive in the meantime. Young foxes can be particularly intimidated by old hedgehogs, since the latter are likely to have accumulated more data in absolute terms.

So how do foxes survive at all? Why haven't we gone extinct as a cognitive species?

A behavior of wild foxes is very revealing. The metaphor of the fox in the hen-house is based on a characteristically foxy behavior: when given an opportunity to sneak into a hen-house, a fox will kill every chicken in sight. What the metaphor does not capture is the reason foxes do this: far from having a bloody-minded taste for indiscriminate slaughter, they operate on the assumption that they have to lock in gains on the rare occasions they do get a chance to score big (foxes bury their extra kills all over their territory, according to an Attenborough documentary I once watched).

By contrast, when a general belief exists only as an abstract principle strongly anchored by a detailed understanding in one preferred home domain, and the finer details (including details that might potentially conflict with clean-edged abstractions) of distant, alien examples have been discarded, the belief becomes fragile to logical error or new alien counter-examples. Over time, the world-view becomes more unreliable.

To the extent that the abstract beliefs have been assembled into an entire abstract religion, the complete belief structure can unravel if foundational abstract beliefs are undermined, leading to an existential crisis.

Ultimately, the fox-hedgehog duality is a result of bounded rationality. You only have so much room in your head. You have to choose where to put in a lot of detail.

Can you get past this fundamental limit? I don't know yet. Possibly with computational prosthetics.

The conclusion Isaiah Berlin drew from his study of Tolstoy was this: Tolstoy's talents were those of a fox, but he believed one ought to be a hedgehog. The resulting tension informs all his work (especially his later work, when he grew religious).

When I first tried to put Taleb's views in relation to my own, it struck me that his talents are those of a hedgehog, but he believes one ought to be a fox (for a while, I thought that description applied to me, until I looked in my Talebian evil-twin mirror and realized it didn't).

The account I've developed so far, I think, accounts for the lives of both. With Tolstoy, it explains his later moralistic fiction to my satisfaction. With Taleb, it explains the curious build up of ideological tension in his books (what was mere winner's glee in Fooled by Randomness turns into a virtuoso abstraction in The Black Swan and ideological hatred by the time you get to Antifragile). It explains the paradox of his preference for trial-and-error and heuristic thinking within domains, but abstraction and System 2 logic across domains.

John Boyd appears to have been another mashup character, somewhere in between the two, but closer to Taleb than to Tolstoy.

The effort to transcend the fox-hedgehog dichotomy in one way or another is certainly laudable. I might even try it myself one day. But for most of us, most of the time, the bigger challenge is avoiding degeneracy. Which brings us to weasels and cacti.

Dissolute Foxes and Hidebound Hedgehogs

It is easy to forget that in Berlin's original essay, the subjects he was analyzing were all renowned artists, writers or thinkers. But we commonly limit ourselves to thinking about dissolute foxes and hidebound hedgehogs, where both archetypes are reduced to negative stereotypes with no redeeming qualities. What precisely is involved in this reduction?

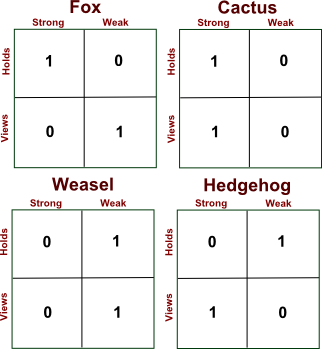

To see why strong views, strongly held and weak views, weakly held represent degenerate hedgehogs and foxes respectively, consider an alternative representation of each of the four archetypes as 2x2 matrices, where you have rows marked views and holds and columns labeled strong and weak.

Full-blown hedgehogs and foxes will give you a 2x2 matrix that is "full" in a sense (what mathematicians call "full rank"), where you cannot delete any row or column without losing some information. This makes their belief systems truly two dimensional: they have views and world views, cognition processes and meta-cognition processes, norms and metanorms. Where they differ is a matter of emphasis on the bar or stem of the T, and the strength of their coloring of the unknown.

Strong views, strongly held is the stuff of dogma. Cognition without meta-cognition. A hidebound inability to change views at all, via a disconnection from reality through elevation of fundamental beliefs to unfalsifiable sacredness.

Weak views, weakly held is the stuff of bullshit. Metacognition without cognition. A dissolute and ephemeral engagement of ideas in purely relative terms.

The first is the pattern of degeneracy that threatens hedgehogs who never unroll from a balled-up state to scurry to another place, turning into de facto cacti.

The second is the pattern of degeneracy that threatens foxes who become unmoored from any kind of ground reality, becoming impossible to pin down, but also incapable of telling truth and falsehood apart, turning into weasels.

Bullshit Detection

Non-degenerate foxes and hedgehogs are both capable of weathering bullshit.

Foxes are bullshit resistant. They do not build complex and fragile edifices of metacognitive abstraction that might collapse, and are also agnostic to the state of detailed truths of any domain. There's not much more to say about how they weather bullshit.

A hedgehog weathers bullshit by detecting it. This is a more complex way to weather bullshit.

When one strong view collides with another, sincere ideological opposition from other hedgehogs is easy to detect. In the simplest case, you get the opposite view by flipping all the truth values. Big-picture opposition from a non-bullshitting fox is also easy to detect, because you get coherence with respect to fundamental beliefs.

But an insincere opposition to a strong view will reveal itself by having a random relationship to the elements of the strong view: a bullshit-detector will fire.

If you're a hedgehog, here's an explicit little bullshit detection test you can do in specific situations: make a list of 20 basic and obscure yes/no beliefs in your domain. Now ask another person, with about the same level of claimed home-comfort in that domain, for their beliefs on those questions.

Now do what computer scientists call an XOR between the two sets of yes/no beliefs. If members of a pair of beliefs are both yes or both no, you get a 0, an agreement. If not, you get a 1, a disagreement.

A soul-mate will give you all zeros. Perfect alignment. An idealized nemesis should give you all ones. Perfect opposition. A fox-hedgehog type opposition should give you either alignment or opposition on the basic beliefs, and random results for the obscure ones. This is an evil-twin relationship.

But if the results are all random, you are either dealing with a bullshitter, or are yourself a bullshitter.
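For the geekier hedgehogs, the test above can be sketched in a few lines of Python. This is only a rough illustration, not a calibrated instrument: the function names, the convention that the first `n_basic` answers are the basic beliefs and the rest are the obscure ones, and the `tol` cutoff for what counts as "random" are all assumptions made for the sketch.

```python
from typing import List


def xor_beliefs(mine: List[bool], theirs: List[bool]) -> List[int]:
    """Return 0 where two yes/no answers agree and 1 where they disagree."""
    if len(mine) != len(theirs):
        raise ValueError("both people must answer the same questions")
    return [int(a != b) for a, b in zip(mine, theirs)]


def is_coherent(bits: List[int], tol: float) -> bool:
    """True if the bits are nearly all 0s or nearly all 1s (assumed cutoff)."""
    rate = sum(bits) / len(bits)
    return rate <= tol or rate >= 1 - tol


def classify(mine: List[bool], theirs: List[bool],
             n_basic: int, tol: float = 0.2) -> str:
    """Label the relationship; the first n_basic answers are the basic beliefs."""
    bits = xor_beliefs(mine, theirs)
    if not 0 < n_basic < len(bits):
        raise ValueError("need at least one basic and one obscure belief")
    if sum(bits) == 0:
        return "soul-mate"      # all zeros: perfect alignment
    if sum(bits) == len(bits):
        return "nemesis"        # all ones: perfect opposition
    basic_ok = is_coherent(bits[:n_basic], tol)
    obscure_ok = is_coherent(bits[n_basic:], tol)
    if basic_ok and not obscure_ok:
        return "evil twin"      # coherent on basics, random on obscure ones
    if not basic_ok and not obscure_ok:
        return "bullshit"       # random everywhere: one of you is bullshitting
    return "unclear"            # patterns the test does not describe


# Hypothetical answers: 10 basic beliefs followed by 10 obscure ones.
mine = [True] * 10 + [False] * 10
twin = mine[:10] + [True, False] * 5
print(classify(mine, twin, n_basic=10))
```

With only 20 questions, "random" is a judgment call, so treat the labels as conversation starters rather than verdicts.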

Note #1: In one of his guest posts last year on the Tempo blog, Greg Rader came at this same theme from a different angle, that of developmental trajectories to foxhood or hedgehoghood: The Cloistered Hedgehog and the Dislocated Fox.

Note #2: For those of you new to this theme, Tempo might be a useful read, and the glossary I posted recently might be a useful aid.

38 Comments

What are some of the obstacles you would see to transcending the fox-hedgehog dichotomy? What are some of the ways to get closer to that?

Some ideas that came to mind:

- Having nearly equally thick horizontal and vertical sections of the T shape seems achievable.

- I can think of a fox having an aesthetic appreciation for abstract theories that explain many things, and I can think of a hedgehog having aesthetic appreciation for metaphors and empirical data. But I'm not sure that's enough. It seems like there is more to how each is oriented than that. It seems to be based on preferences, and I'm not sure it would be desirable to truly have no preferences on the topic, or what that would look like.

- What about the approach to the unknown? That seems at odds. If a fox wants to wait for data before acting and the hedgehog wants to extend abstract principles without checking to see if they are valid and act immediately, I'm not sure how to reconcile the two. With enough time, a careful observer or participant could attempt to make the principles and data match up, and there are different processes that could achieve that. This could be done by a fox or a hedgehog. But, presented with the unknown, is it possible to follow both hedgehog and fox instincts and habits simultaneously?

- I think the approach to bullshit could be more easily integrated. Having more than one approach to detecting bullshit in one's toolkit can be useful.

I suspect you cannot have balanced T's because what thickens/thins either the stem or the bar is some sort of snowballing positive/negative feedback process. The two processes will compete for the same source of cognitive energy and small initial imbalances will quickly compound.

More generally, can you get past this? Well, one definition of intelligence might simply be the total area you have in your T, a function of processing smarts, RAM and persistent storage. That computing metaphor suggests that prosthetic memory or processing aids might help people break out. Or possibly not. Possibly the tools will exaggerate the bias rather than correct it.

I think you could have balanced T's as a matter of how much knowledge and expertise you have. It is possible to be very knowledgeable in one domain, able to extend theories from that domain to others (like a hedgehog), and also to have a breadth of knowledge and alternate perspectives (like a fox). That's what I was thinking of when I said the T's could be balanced.

But I think the T diagram works better as a model of where foxes or hedgehogs put their trust, rather than how they acquire knowledge. In that case, it wouldn't really matter how much knowledge of the other kind you had, when it came down to making a decision or choosing how to live, a fox would trust in one approach, the hedgehog in the other.

It is also a matter of what importance to place on things, and what one would care about.

A hedgehog would think that the fox's worldview was deficient because it was not completely consistent. A fox wouldn't really care, and might feel that the data points are what matters and eventually some model might be found that accounts for them all, but if it is not yet found, that's not a big deal. That's where the fox does not spend the cognitive energy, making sure everything matches up consistently.

A fox would think that the hedgehog's premises are oversimplified and that the system built upon such unsound foundations leads to wacky conclusions that get individual situations wrong. The hedgehog would feel that the rules are there precisely to deal with the wacky situations, so that you know what the right thing to do is there, even if it is counter-intuitive. So, that's where the hedgehog does not spend cognitive energy, changing the worldview based on individual situations, or constructing limits on their premises.

I don't think it is just a matter of cognitive energy, though. I think it is a preference on what method of making sense of the world to trust, and I think it has to do with personality, although it's possible social context and training plays a part.

So, could you trust both equally? I suspect not. Distrust both equally? Maybe, but I'm not sure what a third, alternative approach would be. At some point, you have to have something to go on as a basis for living in the world.

The post also reminded me of the introduction to Adrian Bejan's book on Constructal Theory, where he goes to great lengths to distinguish constructal theory from biomimicry and make a case for why theory is important in engineering. He almost sounds like he's pleading at some points "Theory is important! Really! It is!"

I am also reminded of Frederick Brooks' idea that during the process of designing something, an explicit wrong assumption about the users is better than an implicit correct assumption. That seems like a hedgehoggy thing to say, but it was in a context of a design process that seems more fox like. So, maybe combining the two is not so awkward as it first appears.

Hmm... Yes, trust in your own knowledge is something to be explored more. I'd say foxes are systematic doubters of both stem and bar.

The Brooks quote doesn't seem either foxy or hedgehogy to me. It's just a clever insight. If you treat it as a sacred rule, you're being a hedgehog about it. If you're treating it as a tweetable bon mot, or a bunny trail trailhead, you're being a fox about it.

I think a mix is definitely possible, though it may be one type consciously going the other direction, like Taleb or Tolstoy. You're probably right that it's a cascading effect in the majority of cases: I doubt anybody lands in the middle by accident.

I probably ping strongly as fox, in that my core beliefs are generally cobbled together from many sources, and subject to revision (rather than outright replacement). However, I've also learned a hedgehog side to combat the foxy fragility of action. I can fall back on (and trust) my current coherent world-view, and postpone the deep work of reassessment/refactoring for later, if action has become imperative. It is usually an act of willpower to do so, but I've noticed the will necessary becomes less as I get more used to it.

It's definitely a dichotomy: the fox always nips at the hedgehog's tail hoping to move it, and the hedgehog (being a student of history and sociology) pricks the fox's nose with bitter experience.

Technical tools like a contacts list, calendar, and GPS definitely help, in providing habitual crutches for anti-fragility of action.

"Technical tools like a contacts list, calendar, and GPS definitely help, in providing habitual crutches for anti-fragility of action."

GPS itself is non-resilient and fragile. The moral of the death-by-GPS story is that you better know how to use a map. However GPS vastly expands our sense of what is possible when the system runs stable and events are normally distributed and in my case it also prepares me for something. I work in the greater Munich area and it takes me 6 hours to walk home from my work place. In the unlikely case of the big blackout, when the whole traffic system breaks down, including GPS, I would find my way home with visual cues only even without a map based on 3 training sessions where I did the walk for fun - using GPS. The reliance on a fragile system actually creates the individual skills that enable some of us to get rid of even analog crutches.

The fox/hedgehog tale is basically for Venkat IMO, since he is the only one I see by far who has the potential to be a hedgehog. The petty intellectual model of a guy who slowly grows his deep home turf and is otherwise open minded enough for touristic excursions might be what we imagine as an average hedgehog's life, and probably it is, but this is not what is meant. To understand what a pure hedgehog thinks, let me quote a longer passage:

It is from the preface of Schopenhauer's "Die Welt als Wille und Vorstellung". I wonder if whole generations of intellectuals discovered themselves in those words and ambitions and wanted to do it again.

I might be a fox, with occasional bullshitting weasel-like behaviour.

It looks like there is a small error, I think you meant "When I first tried to put Taleb’s views in relation to my own, it struck me that his talents are those of a hedgehog, but he believes one ought to be a fox". What you have there now describes him as a Tolstoi rather than an anti-Tolstoi.

Also, Alan Kay has many good quotes but one of them is along the lines of "the best way to predict the future is to invent it". How does that statement fit in to the fox/hedgehog dichotomy? He seems to have a couple hedgehog ideas that he has pursued his entire career, but his recommended reading list seems relatively broad. Would he qualify as a true hedgehog?

Fixed, thanks.

Interestingly enough, I've had discussions on Alan Kay before. The invent/predict thing is pure hedgehog of course, but other things he's said are more foxy.

I think he too is talents of hedgehog/belief in foxiness like Taleb. He can get quite religious in his discussions on code bugginess, technical debt etc. and seems to be harboring a certain wistfulness that his visions were not realized in the idealized way he conceived them, but otoh, he has his "perspective is worth 80 IQ points" type thinking as well, and foxy focus on DSLs and the importance of perspective in general.

I don't think breadth of reading is a good indicator. Both foxes and hedgehogs can have huge and broad libraries and anti-libraries. It's how they process them that matters. Also, I suspect (but can't be sure) that even within the diversity, there is a certain ideological filtering that happens up-front with hedgehogs. They look for certain shibboleths and red flags on book back covers as an initial inclusion/exclusion principle.

My reading of this post leads me to think that you're considering these (meta)cognitive categories from a strictly mechanical data-collecting-and-processing point of view.

Do emotions play any part? How would you differentiate someone who has strongly-held views because their beliefs are rationally anchored in multiple instances in multiple domains from someone who has strongly-held views simply because they're prideful and stubborn in their ways/thoughts? (Is the feeling of pride epiphenomenal with having strongly-held views?) Similarly, how would you discern between someone who has weakly-held views because of their cognitive dependence on core axiomatic beliefs, and someone who has weakly-held views out of fear of commitment? Could someone with weak views simply be someone who can't "handle the truth"? (Does my asking of these questions suggest I'm a hedgehog?)

I think emotions are just another kind of data processing and come in fox/hedgehog varieties. Haven't thought too much about it though.

Venkat,

Sorry to bring a tangential point, but I was reading your very interesting series on "Entrepreneurs are the new Labor" in Forbes and wanted to see if you explored the ideas any further. I felt you touched on the Global City-State economy very lightly in the series with some hints of a more detailed thesis. Have you come across any interesting commentary on this topic? The a16z folks (Balaji Srinivasan esp.) seem to have had similar ideas around organizing economies around new cities/exits.

I work with a16z, so familiar with Balaji's thinking.

Lots of smart people thinking about this all over the place. I've personally shifted my attention elsewhere. See my Cloud Mouse, Metro Mouse post though.

If there is anything which comes close in human pragmatics to an unbound meta-cognitive hyperactivity we see it in the financial markets. It is not that the fox comes to town tomorrow but it is us who stand at the gates of the empire of the weasels. For Marx this was an unprecedented perversion, the total inversion of value in the name of economic rationality. The economic abstraction pervades all of our society and lets us colonize others while we are confusing concrete with abstract human relationships. He didn't really care about philosophical or mathematical towers to Babel but about life which has become abstract. Everyone acts within someone else's scheme which coincides with a pattern of addictive behavior.

Should we defeat the empire of the weasels, should it be weakened by trust into institutions and by means of "regulations", should it be further unleashed and nurtured, should we accept it with stoic fatalism and hope for survival?

I do think there is still enough truth in the story to take it seriously. We are not done with it, but I wonder what a slightly evil response looks like?

I recognize myself as a mostly-fox in your descriptions. I appreciate the perspectives and insights you offer, and, perhaps because I'm a fox, give little significance to the barely-few typos. However, I'd like to share this piece with a potentially mostly-hedgehog audience, and it's entirely possible that some among the audience will notice a typo and erroneously question the fat-stemmed "expertise" of the author. Please let me know when the piece is republished w/out errors. I would like to cite it in my forthcoming book. Thanks.

Point out any specific typos you spot and I'll fix 'em. There is no such thing as "republishing" in blogging. Stuff just evolves and gets gradually cleaned up depending on how much attention a piece is getting :).

I am afraid that if your persuasion efforts might stumble on typos, they're probably doomed anyway, but all the best.

Good point. Good blogging. Thanks.

Since Mary broached the subject, here is one English bug I noticed:

"What is strong about the “holding” is that it is anchored by many independent justifications in unrelated domains, just as strong views are anchored by many details and unexamiend habits in one domain."

'unexamined' perhaps?

Not a profound point here, but just FYI, when I click on "Venkat" with a link to https://www.ribbonfarm.com/about/venkatesh-g-rao/

You 404’d it. Gnarly, dude.

Surfin’ ain’t easy, and right now, you’re lost at sea. But don’t worry; simply pick an option from the list below, and you’ll be back out riding the waves of the Internet in no time.

Hit the “back” button on your browser. It’s perfect for situations like this!

Head on over to the home page.

Punt.

Oops. Such are the dangers of trying to make error/warning messages amusing. Reminds me of my FTP saying "Timeout - Try typing faster next time".

Artifact of a recent ISP move. It's the default 404 page, which I haven't bothered to edit. Thanks. Hopefully fixed now for future comments.

This is the smartest thing I've read this year. You just summarized my entire intellectual life much better than I ever could. Profound thanks, Venkat

Brad Urani

Foxey Antifragilista

I am wondering how well you could map this model to countries and whether that could reveal how prone they are to revolutions, coups and other social disruptions.

Funnily enough, Machiavelli already used a similar model in The Prince, and I was going to mention this before I saw your comment. He pointed out that it would be harder to conquer the kingdom of the Turks (the Ottoman Empire) than France, but much easier to hold it once conquered, on the basis that power in the Ottoman Empire was centralised on the Sultan (strong views weakly held) whereas in France power was dispersed throughout the nobility (weak views strongly held). The King of France might be weaker, but anyone who replaces him would face the same forces undermining his own rule. This is directly analogous to Venkat's discussion of changing the minds of Hedgehogs and Foxes respectively.

His view of republics could be described as "strong views, strongly held"; the fact that the views are dispersed amongst the citizenry results in them being strongly held, and the views themselves are strong in that they are simple principles observed in tradition and codified in law. The options for managing a conquered republic boil down to a lengthy and necessarily destructive campaign of terror, or deliberate non-interference in the republic's laws and traditions.

I don't think there's any illustration in Machiavelli of a weasel state. There's a temptation to think here about nations in terms of their geopolitical behaviour, and we might be able to think of some weasely nations that switch allegiances frequently and observe few long-term principles, but geopolitics is only a relatively small part of what a nation state does. I'm not sure I can imagine what a state governed on weasel principles would look like - perhaps some failed states are weasels by default, lacking the ability to arrive at views at all, let alone strong ones.

That's a really good analogy. In a different comparison, UK vs France in the 17th/18th century, France would be the hedgehog and UK the fox in a navy comparison.

I can think of weasel and cactus states.

A very minor quibble, but one cannot really be hung by one's own petard. The idiom is "hoist by his own petard", viz. to be blown up by one's own bomb, a petard being a small bomb used in siege warfare and "hoist" meaning something like "lifted".

s/false/falls/

Thank you Venkat. You have more or less confirmed (for want of a better word) my suspicions about the way I think that I've had for quite a few years. That is: that I think in patterns and that others think in discrete facts. I'm talking as a general rule obviously.

It is consistent with a lot of facts from my discussions with people.

- lots of people claim to find it impossible to win an argument with me (perhaps they don't realise my thoughts are fluid and grounded on many points). As a corollary to this, some people say I will never admit that I am wrong. I think now that perhaps what they don't realise is that I am defending a pattern of thought and they are trying to attack the specific facts/minor details of what I am saying. I very often admit that I'm wrong when in discussions with like minded thinkers.

- I feel many people find my arguments confused or don't understand the connections. That is because I am making comparisons of patterns, whereas others are making comparisons of factoids. I will happily compare the behaviour of police cars to the source code of a computer virus detection software, whereas others might say "we're talking about software not police". (ps I just made that example up)

- it's also fairly true to say that I am particularly good at predicting the future, especially in regards to business. Now I have a good theory of why.

Do you have any good tips about transcending the fox/hedgehog/cactus/weasel boundaries? I find this particularly difficult and frustrating.

Also, I'm a first time commenter but I've been reading for some time. Thanks for all the interesting articles. You have even inspired me to start my own blog, although it's not nearly where I want it to be yet. Got any good advice for starting up?

Thanks again. Have a nice day.

"Knowing only one thing" makes me think of physicists for whom "everything else is just stamp collecting", free market fundamentalists, or libertarians for whom, it seems, if liberty is guarded, everything else will take care of itself, or extreme pragmatists who see no need for a concept of truth that is not subordinate to usefulness, or utilitarians who introduce "utilons" or "hedons" into every conversation; the most extreme behaviourists. People who have succumbed to the wishful notion that everything can be reduced to a single maximization problem (or minimization - you can trivially turn one into the other). Ultimately this means that all problems will be solved for all times once we have solved that one maximization problem. These are strong and deeply rooted ideas; ideas one might well be powerfully drawn to, which really do have a lot of explanatory power. Are such people cactuses? Mostly they couldn't change if they wanted to: their every thought is structured by the magnetic field of their one great idea.

The key difference is how each of these species comes to know what it knows. Hedgehogs are foundationalists with centralised small-world belief networks, and foxes are coherentists with distributed large-world belief networks.

For hedgehogs, their epistemic criteria and axioms ground a few core beliefs which then ground most of their other beliefs. These core beliefs usually have cross-domain applicability, though lossy translation of the target domains is often required, which means the material is fitted to the tools rather than the other way round. Of course, not every connection in the network has equal strength, so beliefs which seem like they should be weakly held because they are grounded by few other beliefs may actually be held quite strongly due to very high Bayesian priors. Hedgehog beliefs may thus be like high-carbon steels - hard but brittle.

For foxes, their epistemic criteria ground a lot of disparate beliefs which incidentally ground or reinforce various domain-independent beliefs. Such beliefs when grounded in many domains can come to be held more strongly than the originating beliefs. Being domain-independent, they are necessarily general and abstract, and hence weak. This weakness means that they rarely ground other beliefs, which prevents most foxes from transforming into hedgehogs. Foxy beliefs, especially their domain-independent beliefs, tend to have better fit with the world, which grants them predictive advantages.

Hedgehogs make stronger predictions so they are susceptible to falsification Popper-style, though they can deny most contradictions by the Duhem-Quine thesis. After all, contradictions are not inherent to data points but exist in their interpretations. Foxes make modest predictions and can buffer contradicting evidence, sometimes even assimilating it into their networks, displaying negative capability.

Hedgehogs turn into cacti when their core beliefs reflexively exclude their own falsification; their strong views remain weakly held only insofar as these are falsifiable. Enlightened hedgehogs know that their axioms are provisionary and open them to revision, allowing iterated experiments to reveal contradictions, shift paradigms, and discover new knowledge as in David Deutsch's The Beginning of Infinity or Hegel's dialectics.

Foxes turn into weasels when accumulated contradictions overload and reprogram their epistemology. Enlightened foxes allow feedback from domain-independent beliefs into their epistemology, allowing resolution of contradictions and setting up the infinite game.

Perhaps one other characterisation of these species is that hedgehogs have a few answers to many problems, but foxes have many answers to few problems.

"For hedgehogs, their epistemic criteria and axioms ground a few core beliefs which then ground most of their other beliefs."

Can we observe these "epistemic criteria and axioms" other than by means of examining their texts by some sort of literary criticism?

I begin to suspect that for Tolstoi all of this wasn't so much about knowledge and life in general but about finding poetic principles. He was discontent about the quality of his writings. He got stuck with it and this is something which was even worsened by his fame.

Refactoring the hedgehog means that you take the general axioms or framework of the hedgehog and see what the specific problem was the hedgehog wanted to solve: extract the specific from the general and return the specific as its purpose.

Example. I didn't quite grok what was all the go-east about in Kevin Simler's recent "Technical debt of the west" post. I later understood what he is heading for from reading his speculations about rogue neurons, agents-all-the-way-down, about living code bases and other fun stuff. A discussion spun off about the avoidance of fear of death in Buddhism. A short intro to Buddhism followed and Buddhism was anchored by the well known "life is suffering" axiom. Now what was the specific content of Buddhists suffering? Maybe it was really metaphysics and socio-religious struggles in some historical background and when you suffer from metaphysics as well Buddhism might be a tested solution for you.

Thanks for this breakdown: nice in that it seems both self-contained, and a good starting point for looking into lots of other related topics. I think I am a Fox, by the way.

You only mentioned, like, two advantages that Foxes have over Hedgehogs, but in thinking about my own thought processes and my wife's (I think she's rather hedgehoggish), I wonder if maybe anxiety management is another distinction between the archetypes, and maybe one advantage the fox has over the hedgehog?

Specifically, I sense that the hedgehog (who hasn't turned into a cactus) has to deal with an almost constant siege of extrinsic anxiety: the sense that their worldview could come crashing down at any moment, and that they're constantly trying to maintain coherence. The fox, on the other hand, has a constant shifting of loyalties and coherence built into their thought process... in a sense, by making room for cracks in the facade, the fox has integrated the anxiety into a sort of built-in anxiety management mechanism.

Feel like I'm leaning too heavily on the structural-integrity metaphor, maybe. Still, may I suggest: foxes have an edge in securing contentment/peace of mind/emotional balance, as long as they don't get caught up in traps of self-assessment and self-comparison?

No one here wants to be a cactus or a weasel. Is this an instance of Dunning-Kruger, or is the self-selected readership really that special?

Probably a little bit of both. I doubt either cactus or weasel spends too much time reading through and commenting on dense philosophical treatises. The first doesn't like the challenge to its beliefs, the second doesn't like the challenge of having beliefs.

They also seem like failure modes of their respective archetypes, rather than archetypes of their own. A fox may sometimes be a weasel, or a hedgehog a cactus, without as much of the accumulated-gains effect that makes a fox unlikely to become a hedgehog. Though it may be that the path through cactus/weasel territory is how one changes from one of the fulfilled archetypes to the other.

Not quite Dunning-Kruger, because we're trying to self-evaluate our way of looking at the world, not some particular skill. It has less to do with ignorance vs. competence, and more to do with honesty and self-knowledge, in this case.

Of course, as Nathaniel says, nobody is going to want to identify with a failed archetype. I didn't expect anyone to be so hard on themselves as to say, "Hey, you just made me realize: I'm one of the pathological ones!" Knowing this, of course, I think we all take our self-assessments with a grain of salt.

I've known it to happen though. Negative archetypes are often more recognizable in the mirror than positive.

very nice piece, only this bit was puzzling to me, in a good way though:

"quick-and-dirty ways of manufacturing insight porn from armchairs?"

would love to hear more about that.

I suspect "insight porn" is something Venkat accuses himself of in darker moments. His dark moments are probably not as dark as mind, which would, I think, facilitate his ability to put out such a steady stream of rather interesting ruminations. I say this with all due respect Venkat, as you've been for some time the center of gravity of my web experience -- seeing current politics as fraught with a massive breakdown of common sense w.r.t. judging what sources are credible (e.g. an inability to recognize Fox News as far more propagandistic than the "MSM" or "Lamestream media" as they like to say. Is Fox News, or movement conservatism hedgehoggish? Maybe yes and no -- maybe they've learned the trick of building a coalition of various hedgehoggish subgroups -- neo-conservatives, libertarians, the Christian Right -- an ad-hoc coalition to save the world from the hedgehoggish caricature of liberalism that they scare themselves with).

OK so that coalition in my brain of ideas wanting to be expressed (I've been reading Dennett) brought me to "Less Wrong" which I see as perhaps splendidly wrong the way Simmler sees Jaynes as splendidly wrong -- but in their infinite maze of blogs I picked up a reference to Ribbonfarm.com's treatment of "Thinking Like a State", and have kept coming back to RF ever since, which has led to discovery of "omniorthogonal" and "melting asphalt".

You say "foxes appear to be somewhat less wrong when it comes to predicting the future than hedgehogs." Yes but Less Wrongers mostly seem very hedgehoggish to me, especially when I hear words like "hedons". And they are not alone in promoting some form of ultra-utilitarianism. There is a strong current in academia of wanting to simplify the landscape by dropping truth as it has been understood, replacing it with "whatever serves". This is especially true of Social Epistemology as practiced by Steve Fuller & Co.

I've been mulling over variations of this issue for a long time. The secondhand idea of Berlin's Foxes and Hedgehogs has had a pull on me for years.

I've had a love/hate attitude towards "big ideas", and long ago wrote an essay on that, as it pertains to history, in "Ways of Thinking About History" (http://jmisc.net/essays/WaysOfThinking.htm). In fact, for anyone interested enough to read that, most of what's in jmisc.net/essays stays pretty close to that obsession.

Echoes of this dichotomy are everywhere. Splitters/lumpers is very close to Foxes/Hedgehogs or New-paradigm-building/Normal-Science or Brainstorming/systematic-planning or the "simultaneous loose-tight organization" of _In Search of Excellence_, or maybe Manic-depression or maybe Yin and Yang (which is which I don't know) -- or maybe even the Business Cycle.

To me they seem best viewed as necessary and complementary ways of coming at the world. History (at least as viewed from my chosen subdiscipline of early 19c American history) has a natural rhythm. For a decade or two it will be dominated by some set of theoretical issues, until people get sick of them, and some form of microhistory comes into fashion, and people tend to go in all different directions like 18th/19th century taxonomists making meticulous studies of this or that. Sooner or later, with the aid of a couple of decades' worth of small-scale, low-theory studies, someone sees a new way to look at the whole that excites people again. Actually it's not that simple -- the wavelike rhythm is definitely there, but at any given time there are always microhistorians and grand theorists of various sorts.

I hope this has cleared everything up.

Sorry, typo: " His dark moments are probably not as dark as mind" ==> "... mine"