UX and the Civilizing Process

To the modern ear (and stomach), the behaviors discussed here are crude. We're disgusted not only by what these authors advocate, but also by what they feel compelled to advocate against. The advice not to blow one's nose with the meat-holding hand, for example, implies a culture where hands do serve both of these purposes. Just not the same hand. Ideally.

"Some people gnaw a bone and then put it back in the dish. This is a serious offense." — Tannhäuser, 13th century.

"Don't blow your nose with the same hand that you use to hold the meat." — S'ensuivent les contenances de la table, 15th century.

"If you can't swallow a piece of food, turn around discreetly and throw it somewhere." — Erasmus of Rotterdam, De civilitate morum puerilium, 1530.

These were instructions aimed at the rich nobility. Among serfs out in the villages, standards were even less refined.

To get from medieval barbarism to today's standard was an exercise in civilization — the slow settling of our species into domesticated patterns of behavior. It's a progression meticulously documented by Norbert Elias in The Civilizing Process. Owing in large part to the centripetal forces of absolutism (culminating at the court of Louis XIV), manners, and the sensibilities to go with them, were first cultivated, then standardized and distributed throughout Europe.

But the civilizing process isn't just for people.

UX is etiquette for computers

embarrass, verb. To hamper or impede (a person, movement, or action) [archaic].

In 1968 Doug Engelbart gave a public demo of an information management system, NLS, along with his new invention, the mouse. Even from our vantage in 2013, the achievement is impressive, but at the same time it's clumsy. The demo is frequently embarrassed by actions that have since become second nature to us: moving the cursor around, selecting text, copying and pasting.

It's not that the mouse itself was faulty, nor Engelbart's skill with it. It was the software. In 1968, interfaces just didn't know how to make graceful use of a mouse.

Like medieval table manners, early UIs were, by today's standards, uncivilized. We could compare the Palm Pilot to the iPhone or Windows 3.1 to Vista, but the improvements in UX itself are clearest on the web. Here our progress stems not from better hardware, but from much simpler improvements — in layout, typography, color choices, and information architecture. It's not that we weren't physically capable of a higher standard in 1997; we just didn't know better.

A focus on appearance is just one of the ways UX is like etiquette. Both are the study and practice of optimal interactions. In etiquette we study the interactions among humans; in UX, between humans and computers. (HHI and HCI.) In both domains we pursue physical grace — "smooth," "frictionless" interactions — and try to avoid embarrassment. In both domains there's a focus on anticipating others' needs, putting them at ease, not getting in the way, etc.

Of course not all concerns are utilitarian. Both etiquette and UX are part function, part fashion. As a practitioner you need to be perceptive and helpful, yes, but to really distinguish yourself, you also need great taste and a good pulse on the zeitgeist. A designer should know if 'we' are doing flat or skeuomorphic design 'these days,' just as a diner should know if he should be tucking his napkin into his shirt or holding it on his lap. And in both domains, it's often better to follow an arbitrary convention than to try something new and different, however improved it might be.

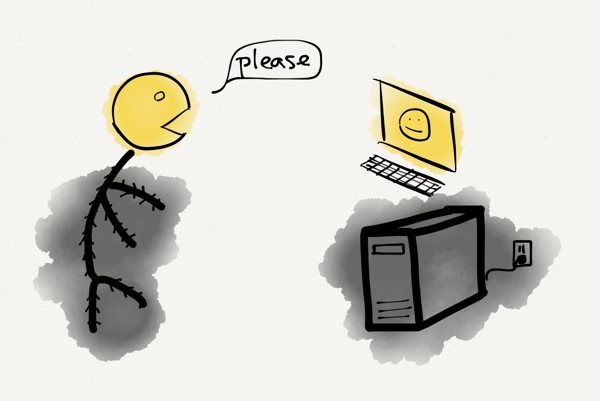

As software becomes increasingly complex and entangled in our lives, we begin to treat it more and more like an interaction partner. Losing patience with software is a common sentiment, but we also feel comfort, gratitude, or suspicion. Clifford Nass and Byron Reeves studied some of these tendencies formally, in the lab, where they took classic social psychology experiments but replaced one of the interactants with a computer. What they found is that humans exhibit a range of social emotions and attitudes toward computers, including cooperation and even politeness. It seems that we're wired to treat computers as people.

Persons and interfaces

The concept of a person is arguably the most important interface ever developed.

In computer science, an interface is the exposed 'surface area' of a system, presented to the outside world in order to mediate between inside and outside. The point of an (idealized, abstract) interface is to hide the (messy, concrete) implementation — the reality on top of which the interface is constructed.
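For readers who don't write code, here is a minimal sketch of that idea in Python. The names (`Greeter`, `TemplateGreeter`, `welcome`) are invented for illustration and come from no real library; the point is only that the caller sees the contract and never the internals.

```python
from abc import ABC, abstractmethod


class Greeter(ABC):
    """The interface: the only surface a caller is meant to rely on."""

    @abstractmethod
    def greet(self, name: str) -> str:
        ...


class TemplateGreeter(Greeter):
    """One hypothetical implementation; its internals stay hidden."""

    def __init__(self) -> None:
        # Private detail, free to change without the caller noticing.
        self._template = "Hello, {}."

    def greet(self, name: str) -> str:
        # Messy input is tidied up behind the interface.
        return self._template.format(name.strip().title())


def welcome(greeter: Greeter, name: str) -> str:
    # Written against the interface, not any particular implementation.
    return greeter.greet(name)
```

Any other class that honors `greet` could be swapped in for `TemplateGreeter` and `welcome` would be none the wiser.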

A person (as such) is a social fiction: an abstraction specifying the contract for an idealized interaction partner. Most of our institutions, even whole civilizations, are built to this interface — but fundamentally we are human beings, i.e., mere creatures. Some of us implement the person interface, but many of us (such as infants or the profoundly psychotic) don't. Even the most ironclad person among us will find herself the occasional subject of an outburst or breakdown that reveals what a leaky abstraction her personhood really is. The reality, as Mike Travers recently argued, is that each of us is an inconsistent mess — a "disorderly riot" of competing factions, just barely holding it all together.

Etiquette is critical to the person interface. Wearing clothes in public, excreting only in designated areas, saying please and thank you, apologizing for misbehavior, and generally curbing violent impulses — all of these are essential to the contract. As Erving Goffman puts it in Interaction Ritual: if you want to be treated like a person (given the proper deference), you must carry yourself as a person (with the proper demeanor). And the contrapositive: if you don't behave properly, society won't treat you like a person. Only those who expose proper behaviors — and, just as importantly, hide improper ones — are valid implementations of the person interface.

'Person,' by the way, has a neat etymology. It comes from the Latin persona, referring to a mask worn on stage. Per (through) + sona (sound) — the thing through which the sound (voice) traveled.

Now if etiquette civilizes human beings, then the discipline of UX civilizes technology. Both solve the problem of taking a messy, complicated system, prone by its nature to 'bad' behavior, and coaxing it toward behaviors more suitable to social interaction. Both succeed by exposing intelligible, acceptable behaviors while, critically, hiding most of the others.

In (Western) etiquette, one implementation detail is particularly important to hide: the fact that we are animals, tubes of meat with little monkey-minds. Thus our shame around body parts, fluids, dirt, odors, and wild emotions.

In UX, we try to hide the fact that our interfaces are built on top of machines — hunks of metal coursing with electricity, executing strict logic in the face of physical resource constraints. We strive to make our systems fault-tolerant, or at least to fail gracefully, though it goes against the machine's boolean grain. It's shameful for an app to freeze up, even for a second, or to show the user a cryptic error message. The blue screen of death is an HCI faux pas roughly equivalent to vomiting on the dinner table: it halts the proceedings and reminds us all-too-graphically what's gone wrong in the bowels of the system.

Apple makes something of a religion about hiding its implementation details. Its hardware is notoriously encapsulated. The iPhone won't deign to expose even a screw; it's as close to seamless as manufacturing technology will allow.

Thus the civilizing process takes in raw, wild ingredients and polishes them until they're suitable for bringing home to grandma. A human with a computer is an animal pawing at a machine, but at the interface boundary we both put up our masks and try our best to act like people.

The morality of design

Sure you can evaluate an interface by how 'usable' it is, but usability is such a bland, toothless concept. Alan Cooper — UX pioneer and author of About Face — argues that we should treat our interfaces like people and do what we do best: moralize about their behavior.

In other words, we should praise a good interface for being well-mannered — polite, considerate, even gracious — and condemn a bad interface for being rude.

This is about more than just saying "Please" and "Thank you" in our dialogs and status messages. A piece of software can be rude, says Cooper, if it "is stingy with information, obscures its process, forces the user to hunt for common functions, [or] is quick to blame the user for its own failings."

In both etiquette and UX, good behavior should be mostly invisible. Occasionally an interface will go above and beyond, and become salient for its exceptional manners (which we'll see in a minute), but mostly we become aware of our software when it fails to meet our standards for good conduct. And just like humans, interfaces can be rude for a whole spectrum of reasons.

When an interface choice doesn't just waste a user's time or patience, but actually causes material harm, especially in a fraudulent manner, we should feel justified in calling it evil, or at least a dark pattern. Other design choices — like sign-up walls or opt-out newsletter subscriptions — may be rude and self-serving, but they fall well shy of 'evil.'

Yet other choices are awkward, but forgivable. Every product team wants its app to auto-save, auto-update, support unlimited undo, animate all transitions, and be fully WYSIWYG — but the time and engineering effort required to build these things are often prohibitive. These apps are like the host of a dinner party who's doing the best he can without a separate set of fine china, cloth napkins, and fish-forks. To maintain the absolute highest standards is always costly — one of the reasons manners are an honest signal of wealth.

Finally, there are plenty of cases where the designer is simply oblivious to his or her bad designs. Like the courtly aristocrats of the 17th century (according to Callières and Courtin), a great designer needs délicatesse — a delicate sensibility, a heightened feeling for what might be awkward. (Think "The Princess and the Pea.") Modal dialogs, for example, have the potential to hamper or impede the user's flow. As a designer myself, I was long familiar with this principle, but it took me many years to appreciate it on a visceral, intuitive level. You could say that my sensibilities were insufficiently delicate to perceive the embarrassment, and as a result I proposed a number of unwittingly awkward designs.

Hospitality vs. bureaucracy

Hospitality is the height of manners, its logic fiercely guest-centered. Contrast a night at the Ritz with a trip to the DMV, whose logic is that of a bureaucracy, i.e., system-centered.

When designing an interface, clearly you should aim for hospitality. Treat your users like guests. Ask yourself, what would the Ritz-Carlton do? Would the Ritz send an email from [email protected]? No, it would be ashamed to — though the DMV, when it figures out how to send emails, will have no such compunction.

Hospitality is considerate. It's in the little touches. The most gracious hosts will remember and cater to their guests as individuals. They'll go above and beyond to anticipate needs and create wonderful experiences. But mostly they'll stay out of the way, working behind the scenes and minimizing guest effort.

Garry Tan calls our attention to a great example of interface hospitality: Chrome's tab-closing behavior. When you click the 'x' to close a tab, the remaining tabs shift or resize so that the next tab's 'x' is right underneath your cursor. This way you can close multiple tabs without moving your mouse. Once you get used to it, the behavior of other browsers starts to seem uncouth in comparison.
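One way to picture the trick is as a deferred layout pass. The sketch below is a toy model under my own assumptions (equal-width tabs, a made-up `CLOSE_INSET`, and hypothetical names like `TabStrip`); it is not Chrome's actual implementation, and it ignores the case of closing the last tab.

```python
from dataclasses import dataclass, field

# Hypothetical distance from a tab's right edge to the centre of its 'x'.
CLOSE_INSET = 12


@dataclass
class TabStrip:
    titles: list[str]
    strip_width: float = 600.0
    tab_width: float = field(init=False)

    def __post_init__(self) -> None:
        self.relayout()

    def relayout(self) -> None:
        """Spread the tabs evenly across the strip (the ordinary layout pass)."""
        self.tab_width = self.strip_width / max(len(self.titles), 1)

    def close_button_x(self, index: int) -> float:
        """Horizontal position of the 'x' on the tab at this index."""
        return (index + 1) * self.tab_width - CLOSE_INSET

    def close(self, index: int) -> None:
        """Remove a tab but keep the current width, so the next tab slides into its place."""
        del self.titles[index]

    def pointer_left_strip(self) -> None:
        """Once the pointer has moved away, it is safe to resize the remaining tabs."""
        self.relayout()
```

The design choice is simply to postpone the resize: as long as the pointer stays over the strip, each close leaves the next 'x' where the last one was.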

Breeding grounds

Western manners were cultivated in the courts of the great monarchs — a genealogy commemorated in our words courteous and courtesy.

It's not hard to see why. First, the courts were influential. Not only were courtly aristocrats more likely to be emulated by the rest of society, but they were also in a unique position to spread new inventions. Their travel patterns — coming to court for a time, then returning to the provinces (or to other courts) — made the aristocracy an ideal vector for pollinating Europe with its standards (as well as its fashions and STDs).

Second, court life was behaviorally demanding. Diplomacy is a subtle game played for high stakes. Mis-steps at court would thus have been costly, providing strong incentives to understand and develop the rules of good behavior. Courts were also dense, and density meant more people stepping on each other's toes (as well as more potential interactions to learn from). And the populations were relatively diverse, coming from many different backgrounds and cultures. Standards of behavior that worked at court would thus be more likely to work in many other contexts.

Behavior at table was under even greater civilizing pressures. Here there was (1) extreme density, people sitting literally elbow-to-elbow; (2) full visibility (everyone's behaviors were on full public display); and (3) the presence of food, which heightened feelings of disgust and délicatesse. A behavior, like spitting, might go unnoticed during an outdoor party, but not at dinner.

Finally, the people at court were rich. Money can't buy you love or happiness, but it makes high standards easier to maintain.

What can this teach us about the epidemiology of interface design?

Well if courts were the ideal breeding ground for manners, then popular consumer apps are the ideal breeding ground for UX standards, and for many of the same reasons. Popular apps are influential — they're seen by more people, and (because they're popular) they're more likely to be copied. They're also more likely to get a thorough public critique. They're 'dense', too, in the sense that they produce a lot of (human-computer) interactions, which designers can study, via A/B tests or otherwise, to refine their standards. They're also used by a wide variety of people (e.g. grandmas) in a wide variety of contexts, so an interface element that works for a million-user app is likely to work for a thousand-user app, but not necessarily the other way around.

So popular makes sense, but why consumer? You might think that enterprise software would be more demanding, UX-wise, since it costs more and people are using it for higher-stakes work — but then you'd be forgetting about the perversity of enterprise sales, specifically the disconnect between users and purchasers. A consumer who gets frustrated with a free iPhone app will switch to a competitor without batting an eyelash, but that just can't happen in the enterprise world. As a rule of thumb, the less patient your users, the better-behaved your app needs to be.

We also use consumer software in our social lives, where certain signaling dimensions — polish, luxury, conspicuous consumption — play a larger role than in our professional lives.

Thus we find the common pattern of slick consumer apps vs. rustic-but-functional enterprise apps. It's the difference between those raised in a diverse court or big city, vs. those isolated and brought up in small towns.

Manners on the frontier

Small towns may, in general, be less polished than the big city, but there's an important difference between the backwoods and the frontier — between the trailing and leading edges of civilization.

If people are moving into your town, it's going to be full of open-minded people looking to improve their lot. If people are moving away from your town, the evaporative-cooling effect means you'll be left with a narrow-minded populace trying to protect its dwindling assets.

Call me an optimist, but I'll take the frontier.

Bitcoin and Big Data are two examples of frontier technology. They're new and exciting, and people are moving toward them. So they're slowly being subjected to civilizing pressures, but they're still nowhere near ready for polite society.

The Bitcoin getting started guide, for example, lists four "easy" steps, the first of which is "Inform yourself." (Having to read something should set off major alarm bells.) Step 2 involves some pretty crude software that will expose its private (implementation) parts to you: block chains, hashrates, etc. Step 3 involves a cumbersome financial transaction of dubious legality. Step 4: Profit?

Bottom line: it's a jungle out there.

Most users would be wise to steer clear of the frontier, which is understandably uncivilized, rough around the edges. But for an enterprising designer, the frontier is an opportunity — to tame the wilderness, spread civilization outward.

__

Further reading:

- Principles of hospitality (PDF of a chapter from Remarkable Service)

- Gricean politeness maxims (Wikipedia)

- Startups are frontier communities (Melting Asphalt)

- The Milo Criterion (Ribbonfarm)

16 Comments

What I would like to see is a software architectural style that lets us escape from the point-and-click interface in case we find we have to do much more complex things. I sell books on Amazon and eBay, and there's an almost unbridgeable gap between their interfaces for the low volume user and their APIs. And nobody seems to know, anymore, how to write documentation for that sort of thing.

To give an idea of what I think is possible -- and something like this may well exist -- using point and click I go round and round in circles til I find just the sort of report or downloadable spreadsheet I want. Could I say "how did I get here" and the browser would cut out all the needless loops and find the efficient path from the main URL to this report buried deep in the system? Could I lift up the hood on the system and see the steps in code?

Broadly, I'd like to see a design style where there is always a ladder to show you how to move from operating at this beginner's level to the next level, and then to the next. I glimpsed an O'Reilly book called Ambient Findability, I think, that might be apropos. Anybody read it?

I watched a couple talks recently about designing good REST APIs. One question was what the server should do when an API URL is visited in the browser. Many APIs will just redirect the user to either the main page or the end-user version of the resource being asked for. The speaker said that what most developers prefer (i.e. the "polite" thing to do) is to show a bare-bones HTML representation of the resource: basically a human-readable version of what would otherwise be returned as JSON/XML. Maybe the convention will evolve toward something in-between the API and the end-user interface, sort of an application-human-interface for power users that's linked from the end-user interface.
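As a rough sketch of that "polite" behavior, a server could look at the request's Accept header and pick a representation. The function name `represent` and the sample resource are hypothetical, and the header parsing is deliberately simplified (it ignores q-values and wildcards), so treat this as an illustration and not a complete content-negotiation routine.

```python
import html
import json


def represent(resource: dict, accept_header: str) -> tuple[str, str]:
    """Return (content_type, body) for a resource, based on the Accept header.

    Simplification: only checks whether "text/html" is listed; q-values are ignored.
    """
    media_types = [part.split(";")[0].strip().lower() for part in accept_header.split(",")]
    if "text/html" in media_types:
        # A browser is asking: render a bare-bones, human-readable view.
        rows = "".join(
            f"<dt>{html.escape(str(key))}</dt><dd>{html.escape(str(value))}</dd>"
            for key, value in resource.items()
        )
        return "text/html", f"<dl>{rows}</dl>"
    # Anything else gets the machine-readable form.
    return "application/json", json.dumps(resource)
```

A browser sending `Accept: text/html,...` would get the definition list, while an API client asking for `application/json` would get the same data as JSON.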

Haven't read Ambient Findability, but the idea sounds promising. As always, though, I imagine the devil is in the details. A team I was on once proposed the idea of taking an artifact the user had created through the UI, and (with the push of a button) generating code that would produce an identical artifact. It would have successfully bridged that gap between UI and API, but the edge cases would have been almost impossible to nail down.

There's a good discussion of this issue and the rarely used HTTP verb OPTIONS here: http://zacstewart.com/2012/04/14/http-options-method.html

Flask implements OPTIONS for all your routes by default: http://flask.pocoo.org/docs/quickstart/#http-methods

Great points there, Kevin.

I especially like the attractor/disperser theme and the frontier/backwater town metaphor, very powerful and applicable to a lot of other contexts besides diplomacy.

This post hits on exactly why ads on gratis web services can be so annoying: a lot of advertising is rude. The underlying reality is that the service is (at least in part) a machine for cultivating an audience to serve to its advertisers. The abstraction is that it's an e-mail client or an encyclopedia or a journal: you ask it for information, and it says "here you go". Ads range from saying "here you go" while wearing a t-shirt with some company's logo (small tasteful ads on the sidebar), to shoving leaflets in your face before you can even ask your question (popups, interstitials). The more ads there are, the more you realize that you're not the guest: you're dinner.

I think that's spot on. An abstraction is always a bit of a fiction, but sometimes it's an other-serving fiction (politeness), and sometimes it's a self-serving fiction. (Also often a murky gray mix of the two.) The fiction of YouTube is that it's a video library, but the reality -- of the decision-making process behind the product -- is that it's a device for luring eyeballs toward advertisers. The ads are where the underlying reality leaks through. Ads are rude almost by definition.

On a related note, I sat in on a public lecture last week and was subjected to 15 minutes of "advertising" before the lecture, as the various sponsors -- the university, donors, heads of various departments -- promoted themselves and each other in front of the captive audience. It was offensive, but also a good refresher illustration about some of the hidden functions of academia.

I find I get less angry at user interfaces that go wrong in obvious, stupid ways as opposed to ones that attempt to "anticipate my needs" in some complicated way and get it wrong. I think this, too, is analogous to human relations, where someone who comes on confidently offering all sorts of help to someone is going to get more grief when he fails than someone who sets low expectations with relative indifference.

I find myself preferring interfaces that are harder to figure out, if I can follow a simple "a machine wouldn't know x, so it would probably try to do y" algorithm to figure it out. But the more human-like they attempt to become, the more inscrutable their 'motives' (i.e. the motives of the designers) become.

Yeah that's interesting. Obviously both interfaces and humans should under-promise and over-deliver. But I agree it's also important for behavior to be intelligible and/or legible (https://www.ribbonfarm.com/2010/07/26/a-big-little-idea-called-legibility/). I know I've made design trade-offs in the past where I sacrificed near-term utility (to the average user) in favor of doing something simpler and more intelligible, in the hope of increasing long-term utility.

I've got a few tangentially relevant remarks:-

(1) There was a story doing the rounds a few years ago that Microsoft started taking security much more seriously after Steve Ballmer was at a wedding where he was asked by the father of the bride to look at a PC that had slowed to a crawl, and subsequently spent two days unsuccessfully trying to rid it of malware. The implication was that Ballmer losing face within his social circle had more impact than years of complaints from outside it.

(2) In Richard Sennett's "The Craftsman" there is a discussion of how, from the 18th century onward, moral qualities have been attributed to building materials, specifically contrasting "honest" brick with the artificiality of stucco ("the British social climber's material of choice"). It strikes me that the computer equivalent of honest brick would be plain text. (That remark is probably a bit too cryptic to be useful, but it would take more unpacking than I can be bothered with right now. Sorry.)

(3) Regarding the difference between enterprise and consumer software, I think it's power rather than impatience that is key. Take high end CAD systems. They aren't bought by their users (if you believe Joel Spolsky, their typical users don't even know how much they cost, see http://www.joelonsoftware.com/articles/CamelsandRubberDuckies.html). Moreover, they have high switching costs. You might expect them to be a usability disaster - but they're not. All I can say is that the impression I got from working at a company that made such a system (not Catia, but that sort of thing) was that car makers take the opinions of their designers very seriously, and hence the role of designer is relatively high status within a car company.

"It strikes me that the computer equivalent of honest brick would be plain text."

Not cryptic at all -- that makes a lot of sense. With brick and with plain text, you know what you're getting. There are no hidden downsides. It's not trying to be too clever or making promises it can't uphold. As I mentioned in another comment, I've favored designs in the past that traded theoretical-optimality for the benefit of simplicity, and in many ways that's an attempt to provide the user with a more honest interface.

"I think it’s power rather than impatience that is key."

I don't know. I'll readily admit that user impatience isn't the only factor coaxing teams to produce more usable software, but I don't see how power is a factor. There are a ton of dumb, simple apps that just do their jobs and do them well. And there are a ton of powerful apps that are bloated monstrosities. I don't see what mechanism could lead from power to usability -- but I'd be happy to learn of one.

Ah, I see I've explained myself badly. When talking about power, I meant the power relation between the user and the person making purchasing decisions. Bloated monstrosities don't just happen: they are the product of particular forces.

Once upon a time the canonical example of a bloated monstrosity was Microsoft Office. Back in, oh I don't know, 1996 or thereabouts, Sun Microsystems were promoting the idea that companies reduce their system administration costs by replacing desktop PCs running Office with network computers running Java apps. Their people made great play of some research showing that a high proportion of features that Office users requested were ones that it already had - it's just that they couldn't find them.

The response of Microsoft people to the accusation that applications in the Office suite had too many features was interesting: they thought that the criticism was just plain stupid, and that it was no wonder that companies like Sun had a tough time competing against Microsoft. The claim was that every feature was there because a customer had asked for it. If adding a feature helps make a sale of a ten thousand seat license, then you add the feature - this is Business 101. Declining to do so on airy-fairy aesthetic grounds was clearly a losing proposition, as could be seen from Apple's declining fortunes.

Of course, that's just an example of the perversity of enterprise sales: the guy who decides which word-processing package a big company should buy is too important to do his own typing, but might get a kick out of making the vendor jump through some hoops. My reason for mentioning CAD systems is that enterprise sales aren't _always_ perverse; that being an aircraft designer is higher status than being a secretary; and that this has consequences for usability.

Oh yes, I see now. That makes much more sense. I will definitely have to mull on the idea that a high-power/-status user base within an enterprise can incentivize better UX from the vendor, relative to a low-power user base. It has to be the case, but I wonder how strong an effect it is.

Something to consider: the thing about poor usability is that it results in user error, and the thing about user error is that it can always be blamed on users. That's what makes dark patterns insidious: taking advantage of imperfections in human cognition creates huge scope for plausible denial. (Of course, the Ryanair example of putting the "No travel insurance required" option on a "country of residence" drop-down menu, in between Latvia and Lithuania, rather stretches the concept of plausibility. What, no "Beware of the Leopard" sign?) Whether it is the software or the user that actually gets the blame is, I'd argue, largely a matter of politics.

The reason I thought my remark about plain text might need some unpacking is that there is a view that usability is all about aping an established set of "friendly" manners. This view would sit very well with your suggestion of popular consumer apps being a vector of usability.

There is an argument (which became less popular with the rise of MacOS X) that people who are attached to the Unix-y, textual (http://theody.net/elements.html) style of dealing with computers are simply wrongheaded, and have failed to grasp the importance of usability. But different people use computers for different things, and therefore have different criteria for usability: sometimes a textual interface is best.

Consider, for example, the needs of someone doing a spot of numerical modeling and analysis. They could do it with a spreadsheet application such as Excel, or they could use a textual language such as MATLAB or R. Some would say that the spreadsheet wins, since it's easier to learn: although authoring a spreadsheet is a form of programming, you don't need to be a programmer to do it.

However, the user's real task may involve more than just authoring a model. If it is something important and complex, they may need for it to be reviewed. If it is something that will get revised over time, then they may need to compare different versions, for traceability. Although I can't claim any personal experience, I'm guessing that the spreadsheet would either be completely hopeless here or require specially written tools. Whereas generic text-based tools (editors, version control systems, diff) would be just the ticket.
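To make the point concrete, here is a small sketch using Python's standard `difflib` module. The two "model versions" are invented for illustration; the only claim is that when a model lives in plain text, a generic tool can tell a reviewer exactly which lines changed.

```python
import difflib

# Two hypothetical versions of a small text-based model.
old_model = ["rate = 0.05", "years = 10", "value = principal * (1 + rate) ** years"]
new_model = ["rate = 0.04", "years = 10", "value = principal * (1 + rate) ** years"]


def changed_lines(old: list[str], new: list[str]) -> list[str]:
    """Return only the removed ('-') and added ('+') lines of a unified diff."""
    diff = list(
        difflib.unified_diff(old, new, fromfile="model_v1", tofile="model_v2", lineterm="")
    )
    # The first two lines are the '---' / '+++' file headers; skip them,
    # then drop hunk markers and unchanged context lines.
    return [line for line in diff[2:] if line.startswith(("+", "-"))]


for line in changed_lines(old_model, new_model):
    print(line)
```

Here the reviewer would see just the old and new `rate` lines, with no special tooling written for the model itself.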

(Why yes, I _am_ a grumpy old Unix programmer. However did you guess? Now, if you wouldn't mind getting off my lawn, I may have a nickel for you ... http://tomayko.com/writings/that-dilbert-cartoon)

Yeah that makes perfect sense. In fact I used your phrase ("honest brick") just yesterday, when critiquing a friend's book authoring website. The site has both a WYSIWYG editor, where you can see the formatting as you type, and a code editor, which shows you the raw HTML. Unsurprisingly, the WYSIWYG editor is a bit shady and will often lie or omit key details, like whether a space is regular or nonbreaking. So it's nice to have the "honest brick" editor to switch over to when the WYSIWYG editor starts misbehaving. (Most interfaces should probably make a choice between one mode or the other, but in this case, I'm glad to have both "honest" and "fancy" modes.)