Bay's Conjecture

A few years ago, I was part of a two-day DARPA workshop on the theme of "Embedded Humans." These things tend to be mind-numbing, so you know an idea is a good one if it manages to stick in your head. One idea really stayed with me, and we'll call it Bay's conjecture (John Bay, who proposed it, has held several senior military research positions, and is the author of a well-known technical textbook). It concerns the effect of intelligent automation on work. What happens when the matrix of technology around you gets smarter and smarter, and is able to make decisions on your behalf, for itself and for "the overall system"? Bay's conjecture is the antithesis of the Singularity idea (machines will get smarter and rule us, a la Skynet - I admit I am itching to see Terminator Salvation). In some ways its implications are scarier.

The Conjecture

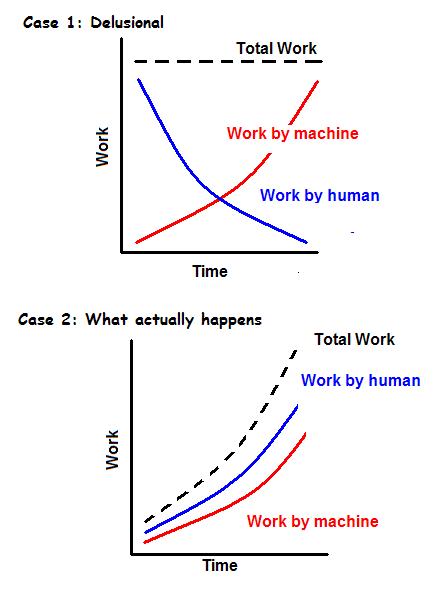

Bay's conjecture is simply this: Autonomous machines are more demanding of their operator than non-autonomous machines. The implication is this picture:

The point of the picture is this: when technology gets smarter, the total work being performed increases. Or in Bay's words, "force multiplication through accomplishment of more demanding tasks." Humans are always taking on challenges that are at the edge of the current capability of humans and machines combined. So like a muscle being stressed to failure, total capacity grows, but work grows faster. We never build technology that will actually relieve the load on us and make things simpler. We only end up building technology that creates MORE work for us.

The one exception is what we might call Bay's corollary: he asserts that if you design systems with the principle of "human override protection," total work capacity collapses back to the capability of humans alone. We are both too greedy and too lazy for that. We are motivated by the delusional picture in Case 1, and we end up creating Case 2.

Here's why this is the opposite of Skynet/Singularity. Those ideas are based (in the caricature Sci-Fi/horror version) on the idea that machines, once they get smarter than us, will want to enslave us. In The Matrix, humans are reduced to batteries. In the Terminator series, it is unclear what Skynet wants to do with humans, though I am guessing we'll find out and it will probably be some sort of naive enslavement.

The point is: the greed-laziness dynamic will probably apply to computer AIs as well. To get the most bang for the buck, humans will have to be at their most free/liberated/creative within the Matrix. So that's good news. But on the other hand, the complexity of the challenges we take on cannot increase indefinitely. At some point, the humans+machines matrix will take on a challenge that's too much for us, and we'll do it with a creaking, high-entropy worldwide technology matrix that is built on rotting, stratified layers of techno-human infrastructure. The whole thing will fail to rise to the challenge and will collapse, dumping us all back into the stone age.

10 Comments

Related Material: http://www.worldwatch.org/node/6008

It talks about system complexity and efficiency in general and not specifically about techno-human infrastructure in particular, but I think the concept resonates with the collapse you are predicting.

Thanks Kapsio: on a much less academic note... here's Slate's take on the possibility of Skynet, by P. W. Singer who helped write Obama's defense policy: Gaming the Robot Revolution.

I am surprised by how superficial and naive the treatment is...

The post is an interesting one, and I have some thoughts to share on it.

re: nature of work

It is not so much quantity as it is quality/nature. Our job is more and more information transformation (cognitive) rather than physical labor. Both the scope of the job and the value propositions are infinite. We are trying to figure out how to cope with this new world. 'Information overload' is a common phrase to describe the lack of effective methods to deal with the new world.

But something else caught my attention - the reference to coffee at the bottom. I have a couple of quick questions. I will give you a coffee if you respond! :) (private message is just fine - [email protected]).

1. When and why did you add that link?

2. How many respond?

3. When and why do people actually respond?

Thanks for sharing your thoughts. I like what I read.

thanks!

Venkata

Venkata - the link is the 'buy me a beer' wordpress plugin (I use the cafe setting). It hooks to paypal. Not a major source of revenue on this blog, but people seem to occasionally use it :)

Personally, I think Skynet == human back end + front-end machines. For example, if I allow a robot to be controlled by an arbitrary person on the net, the person interacting with the robot will never know if he/she is interacting with a robot or a human-controlled robot.

"We only end up building technology that creates MORE work for us."

I'd modify that statement to say "we end up building technology that allows us to achieve more, where the limits of achievement are our imagination alone".

People have often commented on the technology productivity illusion, where products that were touted to save us time (from the 1950s onwards, with mass-produced household appliances) instead gave rise to the overworked, ever-capable and unflappable supermom. One cannot help but feel that, "back then", they didn't know any better, which led to the unknowingly incorrect claims that technology would save us time and allow us to do less (like the Jetsons).

--

"We are motivated by the delusional picture in Case 1, and we end up creating Case 2."

Is Case 2 the natural progression of technology's influence on humankind, or does it arise as a result of our motivation and desire to strive towards Case 1? We're at the point where we should be able to stare the beast in the eye and realize that Case 1 is not only silly and old-fashioned thinking, but that this motivation is slowly losing its power as the model that everybody aims for.

As a lighter counter to the ultrashort diatribe written above, I have to admit that the Foreman Grill truly has saved me time and effort for smaller meals such as chicken breasts, beef and fish steaks. I can safely say that I haven't attempted to entertain larger parties with the Foreman Grill alone, somewhat countering the idea that combined man (me) + machine (kitchen grill) will aim for loftier grilling achievements.

I'd say Case 2 is really a manifestation of the laws of entropy-complexity. I do think the vast majority of people would actually prefer that Case 1 were true, but it is their very striving for Case 1 that sets in motion the thermodynamic processes that result in Case 2.

More baldly: all human striving is an effort to cut corners/steal bargains/something for nothing from mother nature. It always backfires.

Stuart Kauffman has a good line on this. His complexity-theoretic whimsical laws of thermodynamics are:

1. You cannot win

2. You cannot even break even

3. You cannot quit the game

---

4. The game gets more complex all the time

That idea btw, is in the book... haven't found time to work on the damn thing for 2 months, thanks to work pressures.

Re: George Foreman... brings up an interesting thought. Just like entropy _can_ be lowered locally, if not globally, there should be lots of such localized examples. My favorite is a little $1 plastic dish scraper that has really paid for itself a thousand times over.

Venkat

We should probably look at mechanical machines and information machines separately.

Better mechanical machines lead to clearly measurable increases in productivity such as the time taken to reach point B from point A or the number of flawless widgets produced per minute (and yes, grilled chicken breast or whatever). More human effort is then redirected to designing, operating and maintaining these machines. This also creates new needs for human ingenuity to come up with systems and means of organization to derive the advantages of such machines.

Information machines, in an obvious state of infancy compared to mechanical machines, are a different ballgame altogether. IMHO we have begun speculating but do not have a sense of their trajectory and impact, especially as they insinuate themselves in ever-smaller sizes into all kinds of mechanical machines. In this case, the discrepancy between increased productivity and more work (or more complexity) is clear.

We just about know how to use machines to crunch numbers and data trends on a scale far beyond human capability, and we know how to use such capabilities to help humans collaborate on a global scale. But there are huge gaps in our understanding of converting ideas to communication (witness the "improvement" in requirements gathering for a system) and of factoring human behavioral aspects into everything else we do. A typical example is how social engineering incidents keep occurring despite superior security technologies and control processes.

Perhaps, just as the convergence of computers and communications led to Information Technology, the convergence of information and human thought might lead to a new Idea Technology phase. This may entail new developments in the neurosciences and the use of restricted subset languages.

With mechanical machines, the laws of physics seem to clearly indicate the limits of size and speed. With information machines (though their operations are ultimately realized through physical machines and therefore governed by physics) the limits are hazier and border on the philosophical.

Can you please provide some references/URLs for John Bay? I cannot find anything to expand on the content you have provided about him in the article.

I don't believe he has published this idea. It was just in a DARPA presentation I heard at Stanford.