Digital Philosophy II: Are Cellular Automata Important?

The assertion that the universe is a computer (or rather, a computation) might seem like an egregious category error -- computers after all are things made from the 'stuff' of the universe. To take digital philosophy seriously we need to get past this non-trivial barrier to comprehension. The idea is that computation is not a metaphor for the universe, nor is the physical evolution of the universe analogous to computation. The idea is that the universe can be said to be a gigantic ongoing computation just as it can be said to be a bunch of particles interacting energetically via some laws. In the first part of this series, we looked at the prerequisite idea that the continuum (or real line) might not be so real. In the next part, we'll get to the latest ideas, from quantum computing scientists like Seth Lloyd. But before we get there, we need to talk about Von Neumann, Stephen Wolfram and Cellular Automata, an approach to digital philosophy (and physics) that I think is wrong, but nevertheless very illuminating.

Matter as Computation and (Self-?) Simulation

As we normally understand them, computers are things made out of physical 'stuff,' and 'computation' is some of the behavior of that 'stuff' as you and I view it through subjective semantic filters (e.g. associations like "a red LED physically lighting up means 'YES' to my question").

But the line really isn't so clear. Is a swinging pendulum computing approximate solutions to the simple-harmonic-oscillator equation? What about a textbook RLC oscillator circuit? Do the two compute approximate solutions to each other's behaviors? The early analog computation pioneers certainly thought so. Given the right constants, is the pendulum a 'simulation' of the oscillator circuit and vice-versa? So at the very least, any arbitrary physical system might be said to compute its own behavior and that of certain other 'similar' systems, in ways that make mathematical thought possible. Note though, that while the pendulum and the RLC circuit are 'like' each other according to our observations, neither is actually a simple-harmonic oscillator, either categorically or in terms of descriptive completeness.
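To make "given the right constants" concrete, here is a minimal Python sketch, not anything from the analog computing literature. It assumes a small-angle damped pendulum and a series RLC circuit, both written as x'' + damping*x' + stiffness*x = 0, and it integrates them with a simple semi-implicit Euler scheme. The function name `simulate` and all the parameter values are made up for illustration.

```python
def simulate(damping, stiffness, x0, v0, dt=0.001, steps=5000):
    """Integrate x'' + damping*x' + stiffness*x = 0 with semi-implicit Euler."""
    x, v = x0, v0
    trajectory = [x]
    for _ in range(steps):
        a = -damping * v - stiffness * x  # acceleration from the linear model
        v += a * dt                       # update velocity first...
        x += v * dt                       # ...then position with the new velocity
        trajectory.append(x)
    return trajectory

# Small-angle damped pendulum: theta'' + (b/m)*theta' + (g/length)*theta = 0
# (illustrative values, chosen only so the two systems match)
g, length, b_over_m = 9.81, 1.0, 0.5
pendulum = simulate(b_over_m, g / length, x0=0.1, v0=0.0)

# Series RLC circuit: q'' + (R/L)*q' + (1/(L*C))*q = 0
# (component values picked so that R/L = b/m and 1/(L*C) = g/length)
R, L, C = 0.5, 1.0, 1.0 / 9.81
circuit = simulate(R / L, 1.0 / (L * C), x0=0.1, v0=0.0)

max_gap = max(abs(p - q) for p, q in zip(pendulum, circuit))
print(f"largest difference between the two trajectories: {max_gap:.2e}")
```

The two runs differ only in what we choose to call the variables, which is the sense in which each system 'simulates' the other. The caveat from the paragraph above still applies: both are idealized models, and neither a real pendulum nor a real circuit is exactly this equation.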

Now add the observation that there are certain ways of putting finite amounts of matter together such that the resulting entities -- approximate Universal Turing Machines (UTMs) -- can make part of their behavior (approximately) mimic the behavior of anything else, and suddenly things get even more murky. We are dangerously close to glib, but potentially meaningless, observations like 'everything is like everything else' and 'the universe is recursively self-descriptive.'

What is worse, these UTMs needn't be particularly complicated. In fact, they can, in the form of cellular automata, be suspiciously simple. Which brings me to the cellular automata approach to digital philosophy. An approach that I think fits H. L. Mencken's assertion that "For every complex problem there is an answer that is clear, simple, and wrong" and which I suspect Einstein would have labeled "too simple." But it is wrong, I think, in very instructive ways.

I won't say much about the history of the idea that the universe is a computer (this goes back to Konrad Zuse and Edward Fredkin) but jump straight to the specific approach, introduced by Von Neumann, using cellular automata.

The Cellular Automata Approach

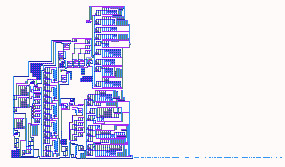

If you've seen James Conway's famous Game of Life, that little simulation of a 2-d grid where repeatedly applying simple rules leads to beguiling life-like patterns evolving, you know what cellular automata are. Von Neumann introduced them more than a half-century ago. The neat thing about CAs is that they don't look like computers -- there are no constructs like 'program' or 'memory' or 'input.' They look like discrete dynamical systems and have, instead, functionally similar (but semantically distinct) constructs like 'evolution rules,' 'space' and 'initial conditions.' The neatest result? Some CAs, including Life are provably equivalent to UTMs. The most interesting CA is something Von Neumann called the Universal Constructor. This is -- wait for it -- a cellular automaton that can reproduce itself! One realization looks like this (public domain image from Wikipedia):

Self-replication is an amazing type of computation -- behavior reproducing structure and completely destroying any intuition you might have of the separation of form and content, or of structure, function and behavior. It suggests one way (though I think not the right way) of starting to understand phrases like 'The Universe is computing itself.' Here are two points about UCs that you might want to remember. First, you need a minimum complexity to generate reproductive behavior, below which CAs produce degenerate, non-reproductive behavior. Second, UCs are also Turing machines (I am not sure if they are known to be UTMs), and so cannot compute uncomputable quantities. But here is a crucial addition: a UC with noise injected is capable of open-ended evolution (rather like random mutation in Darwinian evolution). Stick behind your ear this question: if the whole universe is a UTM or a UC, and its evolution appears to be more open-ended than closed, where might the noise come from? We'll get to that question in the next part. Self-replicating machines, incidentally, aren't merely mathematical toys. The simplest 3-d self-replicating robot that I know of was recently constructed by Hod Lipson and his students at Cornell. The history of the technology is quite long.

The Wolfram Gorilla

Which brings me to Stephen Wolfram and that 600-lb gorilla in all such discussions, A New Kind of Science. I'll admit my views of ANKoS are based purely on a rough skim, preconceived notions, and hearing Wolfram speak about the book a few years ago when it was first released. For those who haven't kept up with this world, Wolfram, after a start as a physics prodigy and founding the company that brought us Mathematica, basically devoted his life to one-dimensional cellular automata and produced in 2002, after nearly 2 decades of labor, a 1192 page tome whose essential claim was that everything that needed explaining about the universe could be explained by one-dimensional CAs. When I heard Wolfram speak at the University of Michigan, there was a perceptible air of skeptical disbelief and 'is this guy for real?' in the audience, not least because of the completely blunt condescension in his tone towards any other ideas. A good summary of his talk would be "The whole intellectual history of the planet is a preface to ANKoS, which basically answers all meaningful and profound questions, but there are a few dregs remaining if you want to continue in science, math or philosophy, now that I've rendered inquiry irrelevant."

Not exactly a message that would be well-received anywhere, but let's judge the man on the merits of his ideas rather than what appears to be an obnoxious personality (many great thinkers -- and Wolfram is definitely one, even if he is wrong, he is breathtakingly, brilliantly wrong -- have been jerks). What do we have? Unfortunately, from what I can see, ANKoS really brings nothing new to the party that had not already been brought there by thinkers going back to Konrad Zuse, Edward Fredkin, von Neumann, Gregory Chaitin and most recently, Seth Lloyd and other quantum computation researchers. Some harsh critics have gone so far as to say that the 1192-page tome is all fluff, except for one important result (that a 1-d automaton called Rule 110 is Turing-equivalent) which was discovered by Wolfram's research assistant Matthew Cook rather than Wolfram himself.

I've said about all I can without actually reading ANKoS (which I won't do unless someone convinces me it is worthwhile in 1192 words or less), so let's conclude with some observations about the CA approach to life, the universe and everything (wouldn't it have been breathtaking if Rule 42 had turned out to be the interesting one instead of Rules 30 and 110?).
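For readers who have never seen what a 'Rule N' actually is, here is a minimal Python sketch of a one-dimensional (elementary) cellular automaton. It uses Wolfram's standard numbering, in which the 8 bits of the rule number give the next state for each of the 8 possible (left, centre, right) neighbourhoods. The function names `step` and `run`, the wrap-around boundary and the single-live-cell start are my own choices for illustration, not anything taken from ANKoS.

```python
def step(cells, rule):
    """Apply one update of an elementary CA (Wolfram rule number 0-255)."""
    n = len(cells)
    new_cells = []
    for i in range(n):
        left = cells[(i - 1) % n]    # wrap-around (periodic) boundary: an assumption
        centre = cells[i]
        right = cells[(i + 1) % n]
        neighbourhood = (left << 2) | (centre << 1) | right  # a value from 0 to 7
        new_cells.append((rule >> neighbourhood) & 1)        # look up that bit of the rule
    return new_cells

def run(rule, width=31, steps=15):
    """Evolve from a single live cell in the middle; return every row."""
    cells = [0] * width
    cells[width // 2] = 1
    rows = [cells]
    for _ in range(steps):
        cells = step(cells, rule)
        rows.append(cells)
    return rows

if __name__ == "__main__":
    for rule in (30, 110):
        print(f"Rule {rule}")
        for row in run(rule):
            print("".join("#" if c else "." for c in row))
```

The entire 'physics' here is one 8-bit number. 'Space' is the list of cells and the 'initial condition' is the first row, which is what makes the approach so seductively simple.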

Assessing the Cellular Automaton Approach

As I said, I think this approach is misguided at very fundamental levels, but is still very illuminating. Obviously, I can't make a watertight argument that this is the case since I am not an active researcher in the field and haven't read ANKoS, but I'll outline the reasons I am both skeptical and intrigued:

Reasons why the CA approach is illuminating

- It has nearly every element you need in the soup: self-replication, universal computation, a role for randomness (to create open-ended/uncomputable evolution).

- It is conceptually very simple and comprehensible compared to other approaches, including other approaches to digital physics as well as traditional physics (which is not to say that there aren't hairy technical problems inside), but still produces behavior that mimics many features of the universe and biological life within it.

- It serves to fill the conceptual gap between traditional computation and computation as dynamic evolution.

Reasons why it might be misguided

- The approach is simply too arbitrary, and it would be suspiciously good luck if it turned out to explain everything.

- Wolfram made some throwaway remarks about how one-dimensional automata somehow explain fundamental constructs like 'consciousness' and even 'time.' To me, even without probing much deeper, this doesn't seem possible given what 1-d automata are -- they could possibly depend on certain assumptions about the nature of time and consciousness, but they probably cannot 'explain' these concepts in any meaningful way.

- The whole approach is an extended metaphor of sorts, with startling commonalities between the output of automata and (for instance) the patterns on snail shells. But you can make similar observations about many mathematical constructs, including prime numbers, the Golden Ratio and so forth (they show up in obscure places in nature).

- Unlike some of the superstring theories causing trouble in the world of physics right now, ANKoS-style approaches aren't even the types of mental models about which you can make falsifiability judgments. That there is no meaningful map between the constructs of the approach and observed reality is just the beginning of the problems.

- There are obscure but alluring ideas in the approach like "The Principle of Computational Equivalence" and "Computational Irreducibility" that, when you poke a little, get lost in a semantic soup, which suggests theology rather than metaphysics.

- Finally, in everything I've read or heard about this approach, somehow, I did not gain a single 'Aha!' insight that made me see anything differently. Shouldn't a theory of everything shake your very being?

2 Comments

Your "Reasons why it might be misguided" are all refuted in:

http://www.research.ibm.com/journal/rd/481/fredkin.pdf

Fairly easy reading and provides some clues as to falsifiability experiments.

Notable "Aha" insights include why there are antiparticles, the origin of spin, where the rest of the spatial dimensions (ala string theories) are hiding, etc.

Enough for me anyway.

Check out Cahill's process physics, interesting complement to the examples you cited in the same vein of thinking:

http://www.flinders.edu.au/science_engineering/caps/staff-postgrads/info/cahill-r/process-physics/